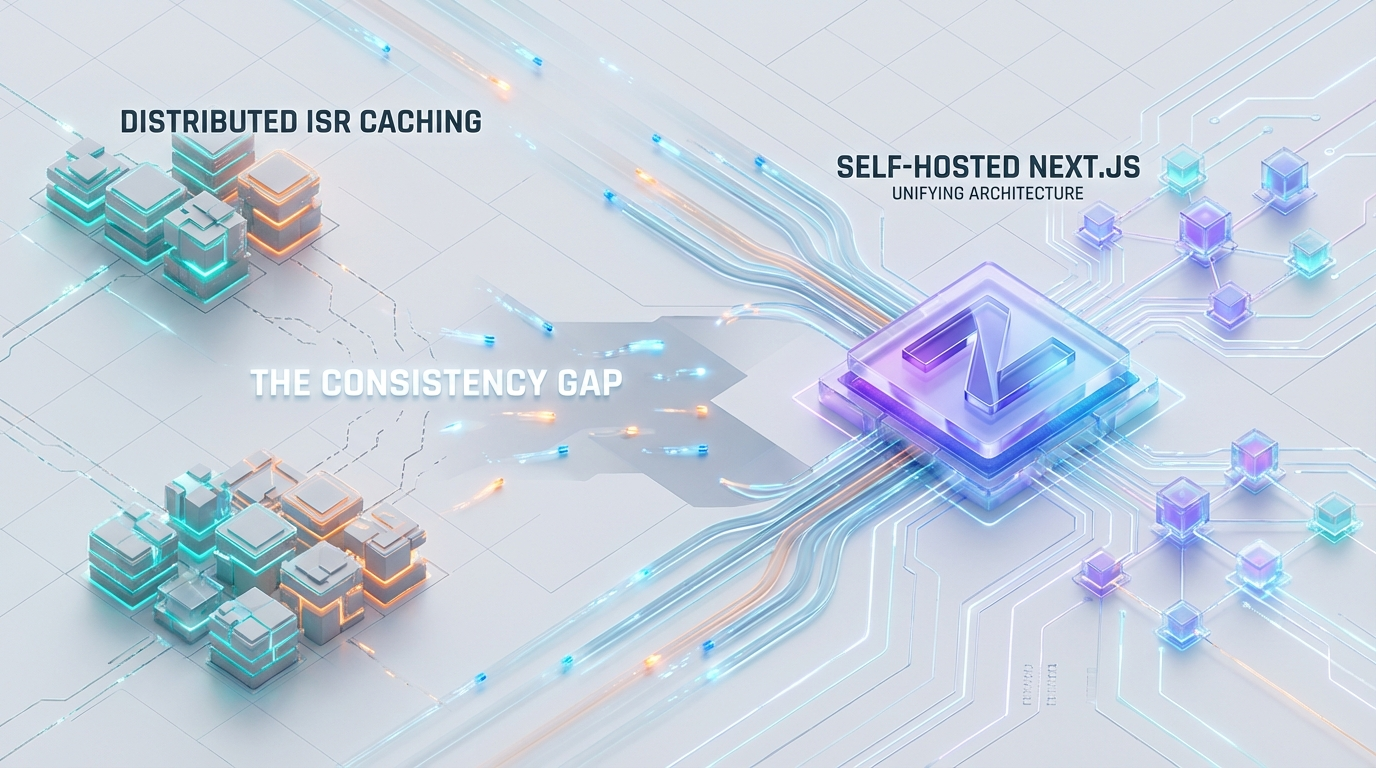

The Consistency Gap: Unifying Distributed ISR Caching in Self-Hosted Next.js

By the Azguards Engineering Team

When migrating Next.js from a monolithic deployment or a managed platform (like Vercel) to a self-hosted Kubernetes or Docker cluster, engineering teams often encounter a critical architectural blind spot: the “Split-Brain” cache scenario.

For Principal Engineers architecting high-scale frontend platforms, Incremental Static Regeneration (ISR) is the gold standard—offering the speed of static generation with the flexibility of server-side rendering. However, in a containerized environment, the default ISR implementation fails to scale horizontally.

This article dissects the consistency anomalies inherent in self-hosted Next.js, analyzes the cacheHandler API (specifically in v15), and details the engineering required to implement a strictly consistent, distributed L2 caching layer using Redis.

1. The Anatomy of the "Split-Brain" Anomaly

In a standard Next.js build, the framework defaults to the FileSystemCache. This writes ISR pages (HTML) and React Server Component (RSC) payloads (JSON) directly to the .next/cache directory on the local disk.

In a single-instance environment, this is performant and correct. In a distributed environment, such as a Kubernetes Deployment with N replicas, this creates a fractured state.

The Failure Mode

Consider a cluster with three pods: Pod-A, Pod-B, and Pod-C.

- Initial State: All pods serve “Version 1” of a Product Page.

- The Trigger: A CMS webhook hits your revalidation API route (/api/revalidate?tag=products). The load balancer routes this request to Pod-A.

- Local Revalidation: Pod-A calls revalidateTag('products'). It regenerates the page, updates its local .next/cache, and now serves “Version 2”.

- The Split-Brain:

  - User X hits Pod-A: Sees “Version 2” (Fresh).

  - User Y hits Pod-B: Sees “Version 1” (Stale).

  - User Z hits Pod-C: Sees “Version 1” (Stale).
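The failure mode above can be reproduced in a few lines. This is a toy simulation, not Next.js code: the Pod class, its Map-backed cache, and the render function are illustrative stand-ins for a pod's local .next/cache directory.

```javascript
// Each "pod" owns a private Map, standing in for its local .next/cache directory.
class Pod {
  constructor(name) {
    this.name = name;
    this.localCache = new Map();
  }

  // Serve from the local cache, rendering only on a miss.
  serve(path, render) {
    if (!this.localCache.has(path)) {
      this.localCache.set(path, render());
    }
    return this.localCache.get(path);
  }

  // Revalidation only touches this pod's cache; peers are never told.
  revalidate(path) {
    this.localCache.delete(path);
  }
}

let cmsVersion = 1;
const render = () => `Version ${cmsVersion}`;
const pods = [new Pod("Pod-A"), new Pod("Pod-B"), new Pod("Pod-C")];

// Initial state: every pod renders and caches Version 1.
pods.forEach((pod) => pod.serve("/products", render));

// The CMS publishes new content; the webhook lands on Pod-A only.
cmsVersion = 2;
pods[0].revalidate("/products");

const seen = pods.map((pod) => `${pod.name}: ${pod.serve("/products", render)}`);
console.log(seen.join(" | "));
// Pod-A: Version 2 | Pod-B: Version 1 | Pod-C: Version 1
```

Nothing in the model lets Pod-A tell its peers that the content changed, which is exactly the gap a shared backend closes.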

The Resource Leak

The problem is not just consistency; it is computational waste. Pod-B and Pod-C will continue to serve stale data until they independently trigger their own revalidation cycles based on the revalidate time interval.

When they eventually do expire, the database takes a hit for every pod that needs to rebuild the page. In a cluster with 50 pods, a single content update triggers 50 separate build processes and 50 separate database queries for the same entity. This is an O(N) resource leak relative to your replica count.

The Solution: You must bypass the file system entirely and offload the ISR state to a shared L2 backend.

2. Architecture: The Externalized ISR State

To solve this, we utilize the cacheHandler API. This API allows us to intercept the get, set, and revalidateTag lifecycle methods of the Next.js Data Cache.
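To make the three lifecycle methods concrete, here is a minimal in-memory sketch of the handler shape, modeled on the example in the Next.js documentation. It shares nothing across pods, so it does not fix the split-brain problem; it only shows where a shared backend would plug in. The class name and the stored entry fields are illustrative.

```javascript
// Module-level Map so all handler instances in this process share one store.
const store = new Map();

class InMemoryCacheHandler {
  constructor(options) {
    this.options = options;
  }

  // Called on every cache read; returning null is treated as a MISS.
  async get(key) {
    return store.get(key) ?? null;
  }

  // Called after Next.js renders or fetches something cacheable.
  async set(key, data, ctx) {
    store.set(key, {
      value: data,
      lastModified: Date.now(),
      tags: (ctx && ctx.tags) || [],
    });
  }

  // Called by revalidateTag(); receives one tag or an array of tags.
  async revalidateTag(tags) {
    const wanted = [tags].flat();
    for (const [key, entry] of store) {
      if (entry.tags.some((tag) => wanted.includes(tag))) {
        store.delete(key);
      }
    }
  }

  // Per-request memoization hook; nothing to reset in this sketch.
  resetRequestCache() {}
}
```

In a real project the file would export the class (for example with module.exports) so that the cacheHandler config key can point at it.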

2.1 The Stack

The architecture moves the “Source of Truth” from the container file system to a distributed memory store.

Orchestration: Kubernetes / AWS ECS.

L1 Cache (Optional): LRU Memory (Node.js Heap). This offers the fastest access but reintroduces consistency risks (discussed in Section 4).

L2 Cache (Authoritative): Redis Cluster or Valkey. This provides shared consistency across all pods.

Synchronization: Redis Pub/Sub (for invalidating L1 caches across the cluster).

2.2 Next.js Version Matrix

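The implementation details shift significantly between versions. The cacheHandler key left the experimental namespace in Next.js 14.1 and is the stable, top-level option in Next.js 15; the v14 column below reflects the older experimental key.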

| Feature | Next.js v14 | Next.js v15 (Stable) |
|---|---|---|
| Config Key | experimental.incrementalCacheHandlerPath | cacheHandler |
| Default Fetch Strategy | force-cache (Cached by default) | no-store (Uncached by default) |
| Recommended Library | @neshca/cache-handler (v1.x) | @fortedigital/nextjs-cache-handler (v2.x+) |
| Constraint | 2MB max JSON body (hard limit) | Configurable (subject to Redis 512MB limit) |

3. Engineering Implementation (Next.js 15)

The following implementation focuses on Next.js 15, using @fortedigital/nextjs-cache-handler as a foundation, but extending it with custom locking logic to solve the “Thundering Herd” problem.

3.1 Configuration (next.config.js)

In Next.js 15, the handler is a top-level configuration key. The cacheMaxMemorySize: 0 setting is critical: it disables the default in-memory L1 cache, forcing every read request to hit our custom handler (and subsequently Redis). This guarantees immediate consistency at the cost of slight network latency (~1-2ms).
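A minimal configuration along these lines might look as follows. The handler file name ./cache-handler.js is an assumption; point it at wherever your handler module lives.

```javascript
// next.config.js
/** @type {import('next').NextConfig} */
const nextConfig = {
  // Absolute path to the custom handler module (file name is an assumption).
  cacheHandler: require.resolve("./cache-handler.js"),
  // 0 disables the built-in in-memory cache so every read reaches the handler.
  cacheMaxMemorySize: 0,
};

module.exports = nextConfig;
```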

3.2 The Thundering Herd Problem

A naive Redis implementation creates a race condition known as the “Thundering Herd” or Cache Stampede.

The Scenario: A high-traffic page expires. 50 concurrent requests hit the server. All 50 requests check Redis. All 50 receive null (MISS). Next.js immediately triggers 50 simultaneous builds for the same page. This spikes CPU usage and can exhaust database connection pools.

The Fix: A Distributed Lock with Spin-Wait.

We implement a mechanism where the first request acquires a lock (via Redis SETNX, or SET with the NX option plus an expiry so that a crashed Builder cannot hold the lock forever) to become the “Builder.” All other concurrent requests become “Waiters,” polling Redis until the data appears or the lock releases.

3.3 The Handler Logic

Below is the architectural model for a RedisCacheHandler that implements this locking strategy.
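What follows is a hedged sketch of such a handler, not the original listing. It assumes a connected node-redis v4 client (using its get, set with NX/PX, exists, del, sAdd, and sMembers commands), which is injected through the constructor for clarity; Next.js instantiates the handler itself, so real code would create the client at module level. Key prefixes, timeouts, and JSON serialization are illustrative choices.

```javascript
const LOCK_TTL_MS = 10_000; // lock expires on its own if the Builder crashes
const POLL_INTERVAL_MS = 50; // how often Waiters re-check Redis
const MAX_WAIT_MS = 5_000; // Waiters give up and build after this long

const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

class RedisCacheHandler {
  // `redis` is assumed to be a connected node-redis v4 client (or compatible).
  constructor(redis, prefix = "isr:") {
    this.redis = redis;
    this.prefix = prefix;
  }

  async get(key) {
    const dataKey = this.prefix + key;
    const lockKey = `${this.prefix}lock:${key}`;

    const hit = await this.redis.get(dataKey);
    if (hit !== null) return JSON.parse(hit);

    // MISS: the first caller to create the lock key becomes the Builder.
    const acquired = await this.redis.set(lockKey, "1", { NX: true, PX: LOCK_TTL_MS });
    if (acquired === "OK") return null; // Builder: null tells Next.js to render

    // Waiter: poll until the Builder publishes data or the lock disappears.
    const deadline = Date.now() + MAX_WAIT_MS;
    while (Date.now() < deadline) {
      await sleep(POLL_INTERVAL_MS);
      const value = await this.redis.get(dataKey);
      if (value !== null) return JSON.parse(value);
      if ((await this.redis.exists(lockKey)) === 0) break; // Builder failed or timed out
    }
    return null; // fall back to building locally
  }

  async set(key, data, ctx) {
    const dataKey = this.prefix + key;
    const tags = (ctx && ctx.tags) || [];
    const entry = { value: data, lastModified: Date.now(), tags };
    await this.redis.set(dataKey, JSON.stringify(entry));
    // Index the key under each tag so revalidateTag can find it later.
    for (const tag of tags) {
      await this.redis.sAdd(`${this.prefix}tag:${tag}`, dataKey);
    }
    await this.redis.del(`${this.prefix}lock:${key}`); // release the lock
  }

  async revalidateTag(tags) {
    for (const tag of [tags].flat()) {
      const tagKey = `${this.prefix}tag:${tag}`;
      const keys = await this.redis.sMembers(tagKey);
      // Hard eviction: DEL every entry carrying the tag, then the index itself.
      if (keys.length > 0) await this.redis.del(keys);
      await this.redis.del(tagKey);
    }
  }

  resetRequestCache() {}
}
```

Two simplifications are worth noting. The lock is released without an ownership token, so a Builder that outlives LOCK_TTL_MS could delete a lock held by a later Builder. And a Waiter that times out simply builds locally, trading a duplicate build for bounded latency.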

4. Critical Trade-offs & Engineering Limits

Implementing a custom cache handler is not merely a configuration change; it requires accepting specific trade-offs regarding eviction strategies and payload sizes.

4.1 The “Stale-While-Revalidate” Gap

One of the most jarring differences between Vercel’s managed edge and self-hosted Redis is the behavior of revalidateTag.

Managed Behavior: Serving stale content is handled by a global control plane. When revalidation occurs, the edge continues serving the stale version until the new version is ready (background revalidation).

Self-Hosted Behavior: A Redis-backed revalidateTag typically issues a DEL command against every key carrying the tag. This is a Hard Eviction.

The Consequence: The very next request after a revalidation event results in a CACHE MISS. The user experiences a blocking wait while the server regenerates the page. This negates the “Stale” part of SWR.

Mitigation: To restore SWR behavior, you must modify the revalidateTag logic. Instead of DEL, you should set the cache entry’s metadata to expired: true (Soft Eviction). In the get method, if you encounter an expired entry, you return it (so the user sees content instantly) but trigger a background regeneration.

Note: The Next.js cacheHandler API contract is strict. Returning null from get forces a blocking rebuild. There is currently no native “Return Stale & Rebuild Background” signal in the API method signature. Replicating true SWR often requires a complex sidecar process.
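The soft-eviction idea can be sketched independently of Next.js. The example below is a generic stale-while-revalidate wrapper, not a cacheHandler implementation: softRevalidate, getWithSwr, the expired flag, and the store interface (async get/set) are all hypothetical names. It shows the pattern a sidecar or wrapper layer would need to reproduce.

```javascript
// De-duplicates background rebuilds per key within this process.
const inFlight = new Map();

// Soft eviction: flag the entry instead of deleting it.
async function softRevalidate(store, key) {
  const entry = await store.get(key);
  if (entry) await store.set(key, { ...entry, expired: true });
}

// Return whatever is cached right away; refresh expired entries in the background.
// `rebuild` is assumed to be an async function that produces the fresh value.
async function getWithSwr(store, key, rebuild) {
  const entry = await store.get(key);
  if (!entry) {
    // True MISS: nothing to serve, so this caller has to wait for the build.
    const value = await rebuild();
    await store.set(key, { value, expired: false });
    return value;
  }
  if (entry.expired && !inFlight.has(key)) {
    const job = rebuild()
      .then((value) => store.set(key, { value, expired: false }))
      .catch((err) => console.error(`background rebuild failed for ${key}`, err))
      .finally(() => inFlight.delete(key));
    inFlight.set(key, job);
  }
  return entry.value; // possibly stale, but served without blocking
}
```

The inFlight map only prevents duplicate rebuilds inside one process; across pods, the background rebuild would still need a distributed lock like the one in Section 3.2.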

4.2 L1 Memory vs. Consistency

If you remove cacheMaxMemorySize: 0 and allow a 50MB local buffer (L1), you reintroduce the Split-Brain problem. Pod-A might hold a stale version in RAM even after Pod-B has updated Redis.

Strict Consistency: Keep cacheMaxMemorySize: 0. Every request hits Redis.

High Performance: Use @fortedigital/nextjs-cache-handler with an in-memory L1 enabled. However, this requires implementing Redis Pub/Sub. When revalidateTag runs, you must broadcast a message to all pods: “Clear local cache for tag: products”. Without this, your L1 cache will serve stale data until the pod restarts.
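The broadcast step might look like the following sketch. It assumes node-redis v4 clients, whose subscribe(channel, listener) and publish(channel, message) calls are used here; the channel name, the L1Cache class, and broadcastInvalidation are hypothetical. It does not show how @fortedigital/nextjs-cache-handler wires this internally.

```javascript
const CHANNEL = "isr:invalidate"; // hypothetical channel name

class L1Cache {
  constructor() {
    this.entries = new Map(); // key -> { value, tags }
  }

  get(key) {
    const entry = this.entries.get(key);
    return entry ? entry.value : null;
  }

  set(key, value, tags = []) {
    this.entries.set(key, { value, tags });
  }

  // Drop every local entry carrying the tag.
  evictTag(tag) {
    for (const [key, entry] of this.entries) {
      if (entry.tags.includes(tag)) this.entries.delete(key);
    }
  }

  // `subscriber` is assumed to be a dedicated node-redis v4 connection;
  // a connection in subscriber mode cannot issue regular commands.
  async listen(subscriber) {
    await subscriber.subscribe(CHANNEL, (tag) => this.evictTag(tag));
  }
}

// Called by whichever pod handles revalidateTag, after it has updated Redis (L2).
async function broadcastInvalidation(publisher, tag) {
  await publisher.publish(CHANNEL, tag);
}
```

Redis Pub/Sub is fire-and-forget: a pod that is disconnected when the message is published never receives it, so a short TTL on L1 entries is a sensible backstop.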

4.3 Large Payload Failures

Redis is not an object store. While it can handle strings up to 512MB, performance degrades rapidly with values over 1MB due to network bandwidth and single-threaded blocking during I/O.

The Danger: Large ISR pages often contain massive JSON blobs in pageProps or RSC payloads. A 2MB payload across 1000 requests/sec is 2GB/s of network throughput—likely saturating your Redis or K8s network interface.
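One way to shrink what crosses the wire is to compress entries before writing them. The sketch below uses Node's built-in zlib module; encodeEntry, decodeEntry, throughputMBps, and the 1MB ceiling (borrowed from the guidance above) are illustrative. How the resulting Buffer is written to Redis depends on the client and is not shown.

```javascript
const zlib = require("node:zlib");

const MAX_ENTRY_BYTES = 1024 * 1024; // 1MB ceiling, taken from the guidance above

// Serialize and gzip an entry; returns null when it is still too large to cache.
function encodeEntry(entry) {
  const compressed = zlib.gzipSync(Buffer.from(JSON.stringify(entry), "utf8"));
  return compressed.length <= MAX_ENTRY_BYTES ? compressed : null;
}

// Reverse of encodeEntry: gunzip, then parse the JSON.
function decodeEntry(buffer) {
  return JSON.parse(zlib.gunzipSync(buffer).toString("utf8"));
}

// Sustained bandwidth a given payload size implies, in MB/s.
function throughputMBps(payloadBytes, requestsPerSecond) {
  return (payloadBytes * requestsPerSecond) / 1_000_000;
}

console.log(throughputMBps(2_000_000, 1000)); // 2000 MB/s, i.e. the 2GB/s figure above
```

gzipSync blocks the event loop while it runs, so for large pages the asynchronous zlib.gzip variant is likely the better fit; the synchronous form is used here only to keep the sketch short.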

5. Performance Benchmarks: The Impact

The following data compares the behavior of a default FileSystem cache versus the Redis Architecture under load (50 concurrent users requesting a “Cold” page).

| Metric | Default FileSystem Cache (3 Pods) | Distributed Redis Cache (Locked) |
|---|---|---|
| Total Builds Triggered | 3 (1 per pod) | 1 (Cluster-wide) |
| Database Queries | 150 (3 builds × 50 queries) | 50 (1 build × 50 queries) |
| Consistency | Fail (Split-brain possible) | Pass (Atomic consistency) |
| Time to First Byte (Cold) | ~250ms (Variable) | ~260ms (Redis overhead) |
| Time to First Byte (Warm) | ~5ms | ~15ms (Network RTT) |

While the Redis approach introduces a minor latency penalty (~10ms) on warm reads due to network round-trips, it completely eliminates the O(N) computational waste and guarantees that users never see conflicting data versions.

6. Azguards Analysis: Specialized Engineering

At Azguards Technolabs, we view Next.js not just as a frontend framework, but as a distributed system that requires rigorous backend architecture.

The “out-of-the-box” configuration for Next.js is optimized for hobbyists or managed platforms. Enterprise self-hosting requires a fundamental reimagining of the caching layer. Moving from file-system reliance to a distributed, locked, and compressed Redis architecture is the only way to ensure reliability at scale.

We partner with engineering teams to perform Performance Audits and Infrastructure Re-platforming. If your team is struggling with cache inconsistency, high database loads from ISR revalidation, or Kubernetes resource contention, we can help engineer the solution.

Conclusion

The “Split-Brain” cache is a silent performance killer in self-hosted Next.js applications. By adopting the cacheHandler API in Next.js 15 and implementing a Redis-backed strategy with stampede protection, you unify your application state.
