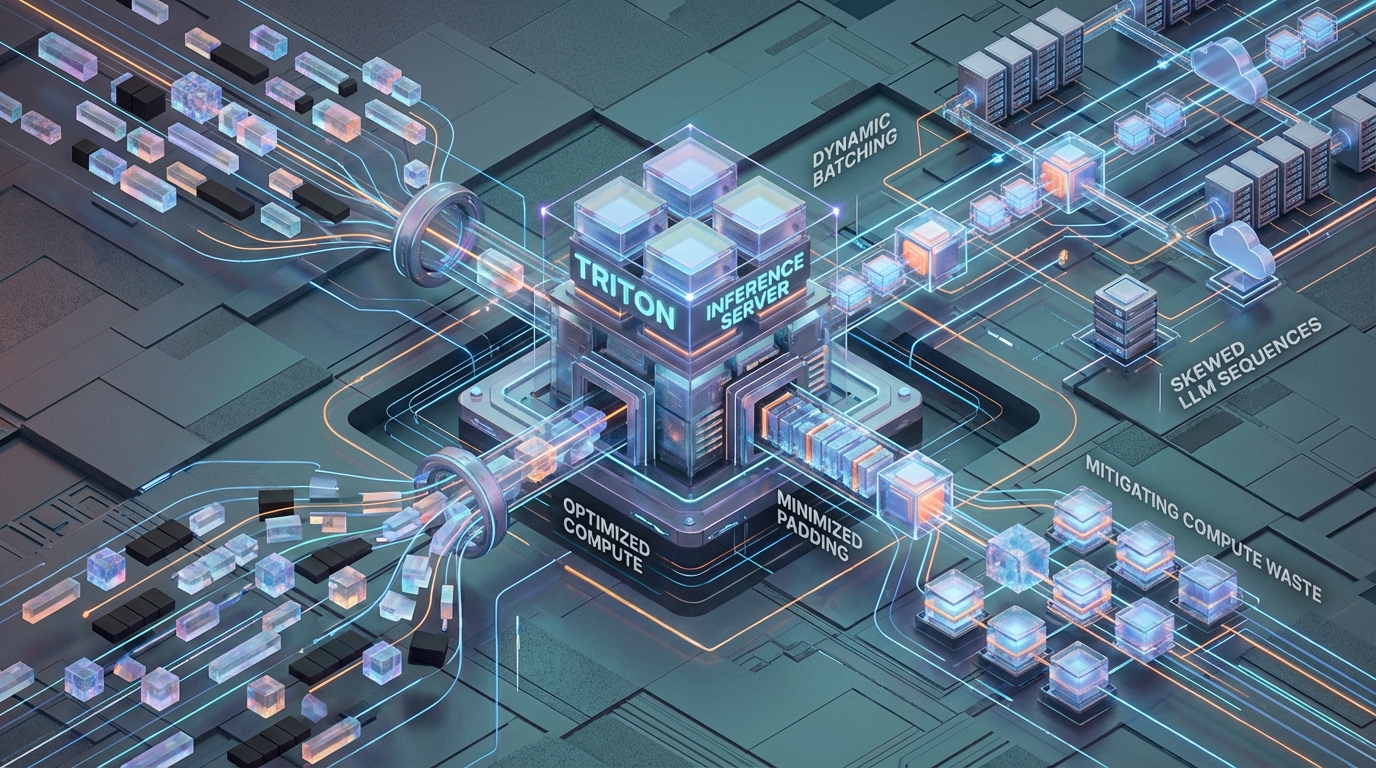

Eliminate the LLM Padding Tax: Optimizing Triton & TRT-LLM

Architectural Diagnostics: Quantifying the Padding Tax

Before deploying the mitigation, it is critical to quantify the boundaries of the problem. The Padding Tax manifests across two distinct hardware bottlenecks: VRAM allocation and compute (FLOP) execution.

Memory Waste Limits (The VRAM Bound)

In standard dynamic batching, theoretical memory allocation for the Key-Value (KV) cache per batch scales strictly according to the maximum sequence length:

$$O(N \times L_{max} \times n_{layers} \times d_{model} \times \text{precision\_bytes})$$

This contiguous memory requirement creates massive artificial inflation of the VRAM footprint. We quantify this inefficiency using the Memory Waste Ratio ($W$):

$$W = 1 - \frac{\sum_i L_i}{N \cdot L_{max}}$$

Consider a highly skewed production edge case: an ingress queue simultaneously receives a 4,096-token RAG context prompt and a 16-token conversational query. To batch these requests, the inference server pads the 16-token sequence to 4,096 tokens. For the shorter sequence, this yields a memory waste ratio of $W \approx 99.6\%$.
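The arithmetic behind that figure can be checked with a few lines of Python. This is a minimal sketch: the function names are illustrative, and it evaluates the ratio both for the short sequence's own padded slot and for the two-request batch as a whole.

```python
def memory_waste_ratio(lengths):
    """W = 1 - sum(L_i) / (N * L_max) for a batch padded to its longest sequence."""
    if not lengths:
        raise ValueError("lengths must be non-empty")
    n = len(lengths)
    l_max = max(lengths)
    return 1.0 - sum(lengths) / (n * l_max)


def per_sequence_waste(length, l_max):
    """Fraction of a single padded slot that holds padding rather than real tokens."""
    return 1.0 - length / l_max


batch = [4096, 16]  # RAG prompt and conversational query from the example above
short_waste = per_sequence_waste(16, max(batch))  # waste inside the short sequence's slot
batch_waste = memory_waste_ratio(batch)           # waste across the whole padded batch

print(f"short sequence: {short_waste:.1%}, whole batch: {batch_waste:.1%}")
```

The 99.6% figure applies to the short sequence's slot. Across the full two-request batch, roughly half of the padded allocation is wasted.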

At an infrastructure level, this artificial VRAM inflation forces the GPU to prematurely hit hard Out-Of-Memory (OOM) thresholds. The server drops its maximum concurrent request capacity not because the physical compute limit has been reached, but because contiguous memory allocation is saturated with zeros.
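To put rough numbers on that saturation, the sketch below estimates KV cache bytes for the padded batch versus the tokens that actually exist. The model shape (32 layers, hidden size 4,096, FP16) is a hypothetical example, not a measurement, and the factor of 2 assumes one key and one value vector per token per layer with standard multi-head attention.

```python
def kv_cache_bytes(num_tokens, n_layers, d_model, precision_bytes):
    """Estimate KV cache size; the factor 2 covers one K and one V vector per token."""
    return 2 * num_tokens * n_layers * d_model * precision_bytes


# Hypothetical model shape, chosen only for illustration.
N_LAYERS, D_MODEL, FP16_BYTES = 32, 4096, 2

lengths = [4096, 16]
padded_tokens = len(lengths) * max(lengths)  # every slot padded to L_max
actual_tokens = sum(lengths)                 # tokens that really exist

padded_bytes = kv_cache_bytes(padded_tokens, N_LAYERS, D_MODEL, FP16_BYTES)
actual_bytes = kv_cache_bytes(actual_tokens, N_LAYERS, D_MODEL, FP16_BYTES)

print(f"padded: {padded_bytes / 2**20:.0f} MiB, actual: {actual_bytes / 2**20:.0f} MiB")
```

Under these assumptions the padded batch reserves about twice the memory the real tokens need, and that gap is capacity that could otherwise hold additional concurrent requests.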

FLOP Degradation in Batched GEMM Operations

The secondary penalty of the Padding Tax is compute degradation. Attention mechanisms rely on computing $QK^T$. Unoptimized batched GEMM operations force the Streaming Multiprocessors (SMs) to execute intermediate calculations for these padded tokens before applying attention masks.

In our theoretical engineering model for a highly skewed batch, attention FLOPs scale as $O(N \cdot L_{max}^2)$. Even if the framework is optimized to skip the attention calculation for padded tokens, the contiguous memory allocation forces the GPU to execute unnecessary HBM reads and writes. Because LLM inference, and the decode phase in particular, is memory-bandwidth bound, reading padded blocks from HBM degrades Time-To-First-Token (TTFT) and suppresses global throughput.
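As a worked instance of that scaling, take the two-request batch from the memory example ($N = 2$, lengths 4,096 and 16). Assuming unpadded attention costs on the order of $\sum_i L_i^2$, which is a simplification that ignores constant factors and per-layer terms, the padded-to-unpadded ratio is:

$$
\frac{N \cdot L_{max}^2}{\sum_i L_i^2} = \frac{2 \cdot 4096^2}{4096^2 + 16^2} = \frac{33{,}554{,}432}{16{,}777{,}472} \approx 2.0
$$

In this model, padding the 16-token query doubles the attention work for the batch even though the query itself contributes almost nothing to the unpadded total.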

Core Mitigation: Iteration-Level Scheduling with TensorRT-LLM

To eliminate zero-padding constraints, the architecture must abandon Triton’s default request-level batching. The solution requires iteration-level scheduling—also known as continuous or in-flight batching—facilitated via the tensorrtllm C++ backend.

1. Engine Compilation and Kernel Selection

The mitigation begins at the engine compilation phase. Standard TRT engines still default to padded operations unless explicitly instructed otherwise. When building the engine via trtllm-build, you must enable the --use_paged_context_fmha (Paged Context Flash Attention) flag.

This flag fundamentally alters the engine architecture. It instructs TensorRT to implement Variable Sequence Length (VSL) kernel operations directly. By leveraging Flash Attention optimized for paged memory, the engine natively executes tensor operations without requiring sequence padding at the mathematical layer.
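A minimal sketch of how the build invocation might be assembled is shown below, expressed as a Python argument list so it can be passed to `subprocess.run`. The directory paths are hypothetical placeholders. `--remove_input_padding` and `--paged_kv_cache` are not named in this article and are included here as assumptions about companion flags. Flag spellings and defaults vary between TensorRT-LLM releases, so check `trtllm-build --help` for the installed version.

```python
import shlex


def build_command(checkpoint_dir, output_dir):
    """Assemble a trtllm-build argument list (sketch; verify flags for your release)."""
    return [
        "trtllm-build",
        "--checkpoint_dir", checkpoint_dir,   # hypothetical path to converted weights
        "--output_dir", output_dir,           # hypothetical path for the built engine
        "--remove_input_padding", "enable",   # assumption: packed (unpadded) inputs
        "--paged_kv_cache", "enable",         # assumption: paged KV cache support
        "--use_paged_context_fmha", "enable", # the flag discussed above
    ]


cmd = build_command("./checkpoint", "./engine")
print(shlex.join(cmd))
# To execute: subprocess.run(cmd, check=True)
```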

2. Backend Migration and Decoupled Execution

Within the Triton configuration, the model must be moved off the generic backends. The configuration must declare triton_backend: "tensorrtllm".

Furthermore, executing continuous batching requires untethering the response generation from the request grouping. In request-level batching, the batch is only as fast as its longest sequence. In continuous batching, finished sequences are ejected at the iteration level, and new sequences are injected immediately. This requires explicitly setting decoupled_mode: true.

The Protocol Shift: Activating decoupled mode breaks the standard HTTP/REST inference endpoint. Because that endpoint relies on a synchronous request-response cycle, it cannot carry the asynchronous token stream generated by decoupled execution. The client routing layers must be migrated to bi-directional gRPC streaming, using the ModelStreamInfer RPC (the streaming form of ModelInfer). Attempting to route plain HTTP inference traffic to a decoupled Triton backend results in failed requests rather than a token stream.
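A client-side sketch of the streaming pattern, using the `tritonclient.grpc` Python package, might look like the following. The server URL, model name, and input tensors are left to the caller and are assumptions here. The `tritonclient` import is deferred so that the collector can be exercised without a running server.

```python
import queue


class StreamCollector:
    """Callback object that gathers streamed responses (or errors) in arrival order."""

    def __init__(self):
        self.responses = queue.Queue()

    def __call__(self, result, error):
        # The stream handler passes either a result or an error for each response.
        self.responses.put(error if error is not None else result)


def stream_infer(url, model_name, inputs, collector):
    """Send one request over a bi-directional gRPC stream and collect its responses.

    `inputs` is a list of tritonclient.grpc.InferInput objects built by the caller;
    tensor names and shapes depend on the deployed model and are not assumed here.
    """
    import tritonclient.grpc as grpcclient  # deferred: requires tritonclient[grpc]

    client = grpcclient.InferenceServerClient(url=url)
    client.start_stream(callback=collector)
    try:
        client.async_stream_infer(model_name=model_name, inputs=inputs)
    finally:
        client.stop_stream()  # closes the request stream and waits for the handler
        client.close()
    return collector
```

Each decoupled response arrives through the callback as it is produced, which is what lets the client surface tokens before the full sequence has finished.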

3. Defining the Batching Strategy

Finally, the backend must be instructed to abandon static groupings. The configuration parameter must be explicitly set to batching_strategy: inflight_fused_batching. This hands control of tensor execution over to the TRT-LLM continuous scheduler.
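The three settings from this section can be pictured as the stanza they produce in config.pbtxt. The sketch below renders that text from Python. It assumes the mapping used by the tensorrtllm_backend template, where `triton_backend` fills the `backend` field, `decoupled_mode` fills `model_transaction_policy.decoupled`, and `batching_strategy` fills the `gpt_model_type` parameter. Verify the key names against the template shipped with your backend version.

```python
def render_core_config(triton_backend, decoupled_mode, batching_strategy):
    """Render the backend, decoupled, and batching-strategy stanza as pbtxt text."""
    lines = [
        f'backend: "{triton_backend}"',
        "model_transaction_policy {",
        f"  decoupled: {str(decoupled_mode).lower()}",  # pbtxt booleans are lowercase
        "}",
        "parameters: {",
        '  key: "gpt_model_type"',  # assumption: parameter key used by the template
        f'  value: {{ string_value: "{batching_strategy}" }}',
        "}",
    ]
    return "\n".join(lines)


config_text = render_core_config("tensorrtllm", True, "inflight_fused_batching")
print(config_text)
```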

Overcoming VRAM Fragmentation: Paged KV Caching Limits

Operating on decoupled, unpadded sequences eliminates the compute waste, but it shifts the primary infrastructure bottleneck directly to memory fragmentation. Standard contiguous memory allocation for KV caches fails entirely in VSL workloads because dynamic ejection and injection of sequences leave fragmented “holes” in VRAM.

The tensorrtllm backend solves this via Paged KV Caching, borrowing the concept of virtual memory paging from operating systems. Memory is pre-allocated into fixed-size blocks, and tokens are mapped non-contiguously.
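The paging idea can be illustrated with a toy allocator: a pool of fixed-size blocks, a free list, and a per-sequence block table. This is a conceptual sketch only. The class name, block size, and pool size are hypothetical, and the real TRT-LLM allocator is considerably more involved.

```python
class PagedKVAllocator:
    """Toy block allocator: sequences own lists of fixed-size, non-contiguous blocks."""

    def __init__(self, num_blocks, tokens_per_block):
        self.tokens_per_block = tokens_per_block
        self.free_blocks = list(range(num_blocks))
        self.block_tables = {}  # seq_id -> list of block ids
        self.seq_lens = {}      # seq_id -> tokens stored so far

    def append_tokens(self, seq_id, num_tokens):
        """Grow a sequence, taking new blocks from the free list only when needed."""
        table = self.block_tables.get(seq_id, [])
        new_len = self.seq_lens.get(seq_id, 0) + num_tokens
        blocks_needed = -(-new_len // self.tokens_per_block) - len(table)  # ceil div
        if blocks_needed > len(self.free_blocks):
            raise MemoryError("KV cache pool exhausted")
        table = table + [self.free_blocks.pop() for _ in range(blocks_needed)]
        self.block_tables[seq_id] = table
        self.seq_lens[seq_id] = new_len
        return table

    def release(self, seq_id):
        """Return a finished sequence's blocks to the pool for immediate reuse."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.seq_lens.pop(seq_id, None)


pool = PagedKVAllocator(num_blocks=8, tokens_per_block=64)
pool.append_tokens("rag", 100)   # needs 2 blocks
pool.append_tokens("chat", 16)   # needs 1 block
pool.release("rag")              # ejected sequence frees its blocks
pool.append_tokens("doc", 200)   # needs 4 blocks, reuses the freed ones
print(pool.block_tables)
```

The blocks assigned to "doc" are not adjacent, yet the sequence is fully served. The "holes" left by an ejected sequence become ordinary free blocks instead of unusable gaps.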

Hard Limits and Memory Constraints

Managing the Paged KV Cache pool is highly sensitive and dictates the stability of the entire inference node.

kv_cache_free_gpu_mem_fraction: This parameter sets the fraction of the VRAM that remains free after the engine weights have been loaded which is reserved for the Paged KV cache pool. The default is 0.9 (90%). Tune it against the actual workload: pushing this threshold to 0.95 or higher risks OOM failures during context switching or multi-tenant traffic spikes. Conversely, for FP8-quantized engines, lowering the threshold to 0.8 is safer, leaving sufficient headroom for alignment and temporary tensor buffers.

max_tokens_in_paged_kv_cache: This is an explicit token cap on the cache allocator. Lead Engineers must set this boundary when dealing with multi-model Triton instances. Without this hard cap, a high-skew RAG workload on Model A can effortlessly starve Model B of shared VRAM.
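How the two limits interact can be sketched as a capacity estimate: the fraction sizes the pool from free memory, and the token cap clamps the result. The free-memory figure and bytes-per-token value below are hypothetical inputs, and the function is an illustration of the arithmetic rather than the backend's actual sizing logic.

```python
def kv_pool_token_capacity(free_bytes_after_weights, mem_fraction,
                           bytes_per_token, max_tokens_cap=None):
    """Estimate how many tokens the paged KV pool can hold under both limits."""
    tokens_if_all_free_used = free_bytes_after_weights // bytes_per_token
    capacity = int(tokens_if_all_free_used * mem_fraction)
    if max_tokens_cap is not None:
        capacity = min(capacity, max_tokens_cap)  # explicit cap wins when lower
    return capacity


FREE_BYTES = 40 * 1024**3       # hypothetical: 40 GiB free after loading weights
BYTES_PER_TOKEN = 512 * 1024    # hypothetical: 0.5 MiB of KV state per token

uncapped = kv_pool_token_capacity(FREE_BYTES, 0.9, BYTES_PER_TOKEN)
capped = kv_pool_token_capacity(FREE_BYTES, 0.9, BYTES_PER_TOKEN, max_tokens_cap=32768)
print(uncapped, capped)
```

With the cap applied, one model's pool stops growing at 32,768 tokens regardless of how much VRAM is free, which leaves the remainder for co-located models.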

KV Cache Reuse (Prefix Caching) and Security Bounds

To maximize throughput for shared system prompts, enable the backend to reuse memory blocks via enable_kv_cache_reuse: true. This optimization transforms the Paged Cache into a Radix Tree. Instead of recomputing the prefill phase for recurring system instructions, the engine maps the pointers to the existing KV blocks, drastically reducing TTFT.

Security Edge Case: In multi-tenant environments, Prefix Caching weakens isolation. If Tenant A and Tenant B use the exact same system prompt, the Radix Tree shares the blocks between them. An attacker can exploit that sharing, for example by sending crafted prompts and timing the responses to infer what another tenant has cached, and any tenant state leaked through shared cache bounds compromises isolation. To prevent this, MLOps engineers must inject a unique cache_salt string into the client request payload. The TRT-LLM scheduler then calculates block reuse strictly within each salt boundary, so tenants with different salts never share KV blocks.
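The effect of a salt on block reuse can be shown with a chained block hash. This is a conceptual sketch: the hashing scheme, function names, and block size are assumptions for illustration and are not the scheme TRT-LLM uses internally.

```python
import hashlib


def block_keys(token_ids, tokens_per_block, cache_salt=""):
    """Hash each full block together with its parent so a key identifies a whole prefix."""
    keys = []
    parent = hashlib.sha256(cache_salt.encode("utf-8")).digest()  # salt seeds the chain
    for b in range(len(token_ids) // tokens_per_block):
        chunk = token_ids[b * tokens_per_block:(b + 1) * tokens_per_block]
        h = hashlib.sha256(parent)
        h.update(",".join(map(str, chunk)).encode("utf-8"))
        parent = h.digest()
        keys.append(parent.hex())
    return keys


def reusable_blocks(cached_keys, request_keys):
    """Count leading blocks of a request that are already present in the cache."""
    count = 0
    for key in request_keys:
        if key not in cached_keys:
            break
        count += 1
    return count


system_prompt = list(range(128))  # stand-in token ids for a shared system prompt
cache = set(block_keys(system_prompt, 64, cache_salt="tenant-a"))

same_tenant = block_keys(system_prompt + [900, 901], 64, cache_salt="tenant-a")
other_tenant = block_keys(system_prompt + [900, 901], 64, cache_salt="tenant-b")

print(reusable_blocks(cache, same_tenant), reusable_blocks(cache, other_tenant))
```

Tenant A's follow-up request reuses both cached blocks and skips their prefill. Tenant B's identical prompt matches nothing, because the salt changes every key in the chain.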

Scheduler Dynamics: Delaying Queues vs. Token Latency

In an iteration-level scheduling paradigm, the behavior of the ingress queue directly dictates GPU SM saturation. Tuning the scheduler requires a calculated tradeoff between latency and global throughput.

max_queue_delay_microseconds: This parameter controls the coalescing window for request ingress before the scheduler dispatches the iteration.

- Strict Latency SLAs (0–1,000µs): If the primary metric is strictly TTFT, configuring a sub-millisecond delay forces the scheduler to process the queue almost immediately. This minimizes latency but risks lower SM saturation if traffic is sporadic.

- High Throughput (5,000–20,000µs): By delaying dispatch, you increase the probability that multiple VSL requests enter the same TRT-LLM iteration, specifically for the compute-heavy Prefill/Context phase. Packing the prefill phase maximizes batched GEMM efficiency.

The Batching Illusion: Under this architecture, triton_max_batch_size acts merely as a frontend ingress throttle. The actual runtime execution batch size is no longer static; it is determined dynamically on a per-iteration basis by the TRT-LLM scheduler, constrained purely by available KV cache blocks and engine definition limits (max_num_tokens, max_seq_len).
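The per-iteration batch decision can be pictured as packing requests against a token budget and a block budget rather than a fixed batch size. The sketch below uses a simple first-in, first-out admission rule. The function name, the FIFO policy, and the numeric limits are assumptions for illustration, not the TRT-LLM scheduler's actual policy.

```python
def pack_iteration(pending, max_num_tokens, free_kv_blocks, tokens_per_block):
    """Admit queued prefill requests until the token or KV block budget is exhausted."""
    admitted, tokens_used, blocks_used = [], 0, 0
    for req_id, prompt_len in pending:
        blocks = -(-prompt_len // tokens_per_block)  # ceil division
        over_tokens = tokens_used + prompt_len > max_num_tokens
        over_blocks = blocks_used + blocks > free_kv_blocks
        if over_tokens or over_blocks:
            break  # FIFO: stop at the first request that does not fit
        admitted.append(req_id)
        tokens_used += prompt_len
        blocks_used += blocks
    return admitted, tokens_used


pending = [("rag", 4096), ("chat", 16), ("doc", 5000)]
admitted, tokens_used = pack_iteration(
    pending, max_num_tokens=8192, free_kv_blocks=100, tokens_per_block=64
)
print(admitted, tokens_used)
```

The two admitted requests consume 4,112 tokens of the 8,192-token budget, whereas padding both to 4,096 would have consumed all of it. A longer coalescing window gives the scheduler more queued requests to fill that remaining budget in a single iteration.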

Production Blueprint: Decoupled Sequence Processing

Implementing a zero-padding architecture requires strict configuration. Below is the production blueprint for the all_models/tensorrt_llm/config.pbtxt to enable high-throughput, In-Flight Batching execution.
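One way to assemble the settings discussed in this article is as the `key:value` substitution string accepted by the `tools/fill_template.py` script in the tensorrtllm_backend repository. The sketch below builds that string in Python. Every value shown, including the batch size, queue delay, and engine path, is a hypothetical placeholder to adapt, and the available keys should be confirmed against the template for your backend version.

```python
def substitution_string(settings):
    """Join settings into the comma-separated key:value form used for template filling."""
    return ",".join(f"{key}:{value}" for key, value in settings.items())


settings = {
    "triton_backend": "tensorrtllm",
    "triton_max_batch_size": 64,                 # hypothetical ingress throttle
    "decoupled_mode": True,
    "batching_strategy": "inflight_fused_batching",
    "max_queue_delay_microseconds": 1000,        # hypothetical coalescing window
    "kv_cache_free_gpu_mem_fraction": 0.9,
    "enable_kv_cache_reuse": True,
    "engine_dir": "/path/to/engine",             # hypothetical engine location
}

subs = substitution_string(settings)
print(subs)
# Possible use (paths are placeholders):
#   python3 tools/fill_template.py -i <path to config.pbtxt> "<printed string>"
```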

Architectural Deployment Topology

A critical deployment constraint involves multi-GPU orchestration. To prevent Message Passing Interface (MPI) communication bottlenecks at the Triton frontend, Triton must be operated in Leader Mode (num_nodes: 1) utilizing an explicit participant_ids map.

Standard multi-process deployments force cross-GPU coordination over PCIe or Ethernet, crippling throughput. Leader Mode allows the single Triton frontend node to directly map the tensor_parallel_size: 4 execution and distribute the Paged KV cache blocks symmetrically over NVLink. This topology keeps tensor parallel communication latency strictly inside the high-speed GPU interconnect, fully mitigating the memory footprint limitations of high-skew enterprise workloads.

The 'Before vs After': Benchmarking the Architecture

Migrating from standard dynamic batching to TensorRT-LLM with In-Flight Batching yields deterministic improvements in resource utilization. The following benchmark table illustrates the structural shift derived from mitigating the Padding Tax.

| Architectural Vector | Standard Dynamic Batching | In-Flight Fused Batching (TRT-LLM) |
|---|---|---|
| Sequence Aggregation | Zero-padded to maximum length ($L_{max}$) | Native Variable Sequence Length (Unpadded) |
| High-Skew Memory Waste | $W \approx 99.6\%$ (16-token query in 4K batch) | Effectively 0% (Paged Block Memory) |
| Attention FLOP Complexity | $O(N \cdot L_{max}^2)$ (HBM Read Penalties) | Bound strictly to native sequence compute |
| Max Batch Size Bottleneck | VRAM-bound Out-Of-Memory (OOM) via padding | Bounded dynamically by KV Cache Block availability |
| KV Cache Memory Topology | Contiguous Allocation (High internal fragmentation) | Paged Memory Allocation (Negligible fragmentation) |
| Shared System Prompt TTFT | Unoptimized parallel compute repetition | Minimized via Radix Tree Prefix Cache Reuse |
| Client Comm. Protocol | Synchronous HTTP/REST (Head-of-line blocking) | Bi-directional gRPC Streaming (ModelInfer) |

Azguards Technolabs: Performance Audit and Specialized Engineering

Migrating an enterprise Triton deployment to utilize continuous batching, distributed tensor parallelism, and Paged KV Caching is not a standard DevOps task. It requires low-level kernel awareness and strict mathematical tuning of VRAM allocators.

At Azguards Technolabs, we provide specialized engineering for high-throughput LLM architectures. Our Performance Audit and Specialized Engineering practice is built for Lead MLOps Engineers who need to move past standard tutorials and resolve actual production bottlenecks. We do not provide generic advice; we dissect your compute patterns, isolate your HBM read/write degradations, refactor your config.pbtxt boundaries, and engineer secure, isolated prefix caching topologies customized to your specific tenant skew.

If your infrastructure is triggering artificial OOMs, or if your TTFT metrics degrade under mixed-sequence loads, your architecture is currently paying the Padding Tax.
