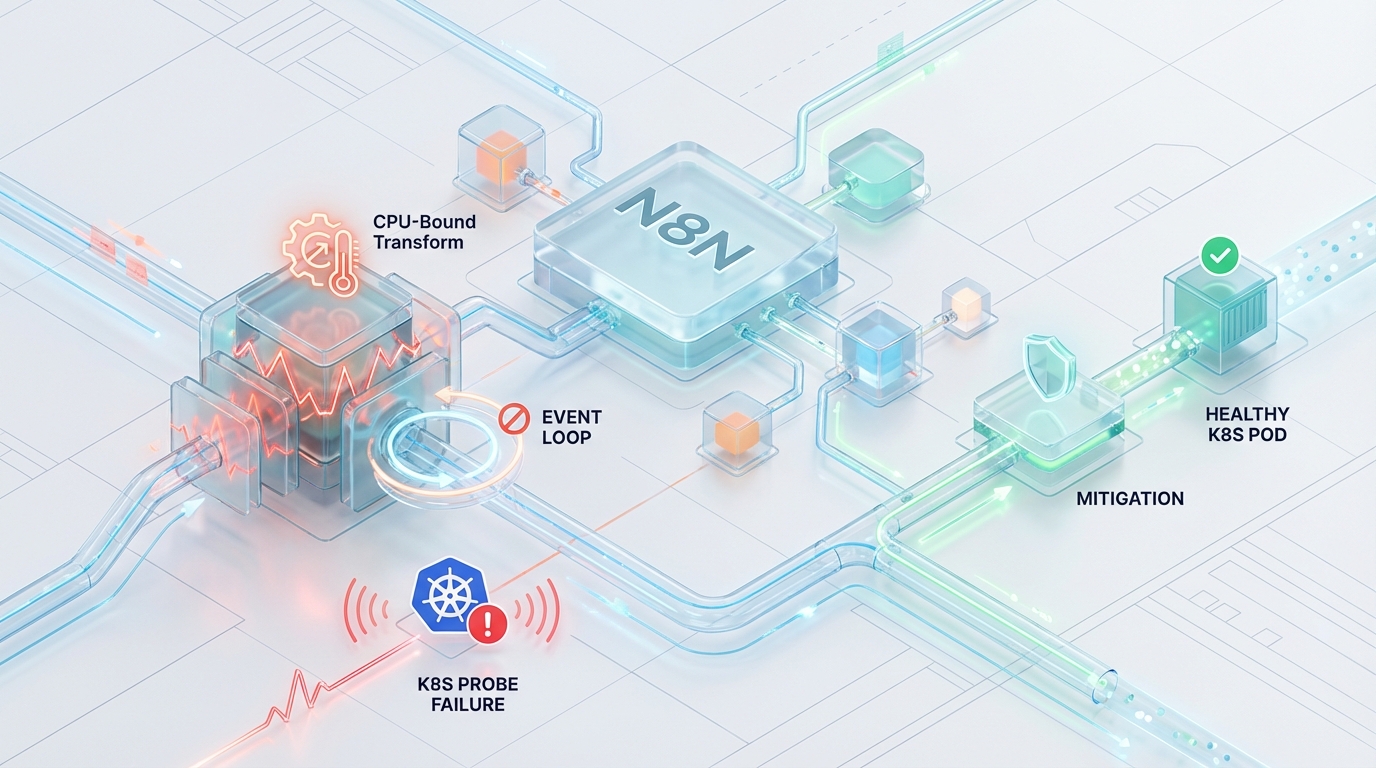

The Event Loop Trap: Mitigating K8s Probe Failures During CPU-Bound Transforms in n8n

As enterprise platform teams scale n8n worker clusters backed by BullMQ and Redis, a distinct class of workloads begins to surface—CPU-bound data transformations that behave very differently from typical I/O-driven API orchestration.

Multi-megabyte payload processing, deep JSON serialization, and large-scale array mutations inside Code nodes introduce synchronous execution patterns within Node.js that stress the V8 engine far beyond routine webhook handling. What appears to be a simple data transformation can silently monopolize the main thread, blocking the libuv event loop and halting all asynchronous I/O.

In a Kubernetes deployment, this temporary event loop starvation can escalate into systemic instability. Liveness probes begin timing out, graceful shutdown handlers never execute, distributed job locks fail to renew, and worker pods are forcefully terminated. The result is a cascading crash loop where stalled jobs are repeatedly re-queued across the worker pool, giving the illusion of infrastructure failure when the root cause lies in synchronous CPU execution.

Eliminating this failure mode requires architectural alignment across infrastructure, workflow design, and workload isolation. By recalibrating probe thresholds, intentionally yielding the event loop through batching strategies, and isolating heavy compute workloads from latency-sensitive workers, platform teams can restore deterministic scaling—even during massive data ingestion pipelines.

Architectural Failure Mode: V8 Event Loop Starvation vs. K8s Probes

To understand the severity of this failure vector, we must examine the intersection of the V8 JavaScript engine, the Kubernetes Kubelet lifecycle, and BullMQ’s distributed state management. When the event loop is blocked by synchronous CPU execution, an n8n worker pod enters a predictable, five-stage cascading failure.

Phase 1: Express Server Starvation

The n8n worker process exposes a /healthz endpoint via an internal Express server, which relies entirely on the main libuv event loop to accept TCP connections and route HTTP requests. When a userland script runs a massive Array.prototype.map() over a multi-megabyte payload, the main thread cannot process any asynchronous I/O until that call returns. Incoming HTTP requests from the kubelet liveness probe sit unprocessed in the OS-level socket backlog until the probe times out.
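The starvation is easy to reproduce outside n8n. The TypeScript sketch below (plain Node.js; the helper names and the 200 ms block are illustrative assumptions) schedules a zero-delay timer as a stand-in for the probe request and then spins the main thread. The timer can only fire once the synchronous work returns, so the measured lag is at least the length of the block.

```typescript
// Sketch: a zero-delay timer stands in for the kubelet's /healthz request.
// It cannot run until the synchronous work below gives the thread back.
function busyWait(ms: number): void {
  const start = Date.now();
  // Spin on the main thread; nothing else in this process can run meanwhile.
  while (Date.now() - start < ms) {
    // intentionally empty: simulated CPU-bound transform
  }
}

function measureTimerLag(blockMs: number): Promise<number> {
  return new Promise((resolve) => {
    const scheduledAt = Date.now();
    // Due "immediately", but only dispatched once the event loop regains control.
    setTimeout(() => resolve(Date.now() - scheduledAt), 0);
    busyWait(blockMs);
  });
}

measureTimerLag(200).then((lagMs) => {
  console.log(`timer fired ${lagMs} ms late`);
});
```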

Phase 2: Liveness Probe Failure & Signal Queuing

Kubernetes liveness probes are frequently left at aggressive values; timeoutSeconds, for example, defaults to just 1s. Event loop lag during even moderate payload processing can easily exceed that limit, producing artificial probe failures well before a genuine Out-Of-Memory (OOM) event or a true process deadlock.

Once the kubelet records consecutive failures reaching the configured failureThreshold (3 by default), it marks the n8n worker container as unhealthy, kills it by sending SIGTERM to the container's main process, and restarts it according to the pod's restartPolicy.
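The kubelet's decision can be modelled as a streak counter. This sketch assumes an event-loop block that starts at t = 0 and delays every probe response until it ends, probes spaced exactly periodSeconds apart, timeoutSeconds no larger than periodSeconds, and no initialDelaySeconds or jitter. `restartTimeSec` is a hypothetical helper that returns the approximate time at which the restart is triggered, or null if the block ends first.

```typescript
// Sketch: when does a liveness probe restart a container whose event loop is
// blocked for `blockSec` seconds starting at t = 0?
// Assumptions: probes every periodSeconds from firstProbeSec, responses delayed
// until the block ends, timeoutSeconds <= periodSeconds, no initial delay or jitter.
interface ProbeSpec {
  periodSeconds: number;
  timeoutSeconds: number;
  failureThreshold: number;
}

function restartTimeSec(blockSec: number, probe: ProbeSpec, firstProbeSec = 0): number | null {
  let consecutiveFailures = 0;
  for (let t = firstProbeSec; t < blockSec; t += probe.periodSeconds) {
    // A probe sent during the block is answered only when the block ends.
    const latency = blockSec - t;
    if (latency > probe.timeoutSeconds) {
      consecutiveFailures += 1;
      if (consecutiveFailures >= probe.failureThreshold) {
        // The failure is recorded once this probe's timeout elapses.
        return t + probe.timeoutSeconds;
      }
    } else {
      // A probe answered within the timeout resets the failure streak.
      consecutiveFailures = 0;
    }
  }
  // Probes sent after the block ends succeed, so the streak never completes.
  return null;
}

const k8sDefaults: ProbeSpec = { periodSeconds: 10, timeoutSeconds: 1, failureThreshold: 3 };
console.log(restartTimeSec(25, k8sDefaults)); // 21
```

With the Kubernetes defaults, a 25 s block restarts the container at about t = 21 s. With timeoutSeconds: 10 and failureThreshold: 4, the same block survives, and only a block longer than 40 s triggers a restart.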

Phase 3: Signal Handler Paralysis

On UNIX-like systems, Node.js dispatches signal handlers (for SIGTERM or SIGINT) through the event loop. The operating system delivers the signal to the process immediately, and libuv's native handler records it, but the JavaScript callback registered via process.on('SIGTERM') cannot run until the main thread is free. Because the thread remains wholly occupied by the synchronous data transformation, the termination request stays pending in libuv until that work finishes. The pod is conceptually dead to Kubernetes, but the V8 engine is entirely unaware and keeps churning through the payload.
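The deferral can be observed directly. In this sketch (POSIX only, since on Windows sending SIGTERM this way terminates the process outright; `sigtermDuringBusyWork` is a hypothetical helper), the process sends SIGTERM to itself and immediately starts a synchronous loop. The registered listener runs only after the loop returns, which is exactly the window in which Kubernetes is counting down terminationGracePeriodSeconds.

```typescript
// Sketch (POSIX only): a SIGTERM listener cannot run while the main thread is busy.
// Node.js records the signal natively; the JavaScript listener is dispatched
// on a later event-loop iteration.
function busyWait(ms: number): void {
  const start = Date.now();
  while (Date.now() - start < ms) {
    // spin: simulated CPU-bound transform
  }
}

interface SigtermTiming {
  signalSentAt: number;
  busyEndedAt: number;
  handlerRanAt: number;
}

function sigtermDuringBusyWork(blockMs: number): Promise<SigtermTiming> {
  return new Promise((resolve) => {
    let busyEndedAt = 0;
    // Graceful-shutdown stand-in; registering it also suppresses the default exit.
    process.once("SIGTERM", () => {
      resolve({ signalSentAt, busyEndedAt, handlerRanAt: Date.now() });
    });
    const signalSentAt = Date.now();
    // Deliver SIGTERM to ourselves, as the kubelet would via the container runtime.
    process.kill(process.pid, "SIGTERM");
    busyWait(blockMs);
    busyEndedAt = Date.now();
  });
}
```

Calling `sigtermDuringBusyWork(300)` resolves with a handler timestamp at least 300 ms after the signal was sent, because the listener waits for the loop to finish.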

Phase 4: Hard Kill & Stalled Job Locks

Because the n8n worker cannot execute its graceful shutdown sequence, Kubernetes eventually exhausts the defined terminationGracePeriodSeconds and issues a definitive SIGKILL. The process is instantaneously destroyed.

Concurrently, a critical distributed state failure occurs in the background. BullMQ keeps each job lock in Redis alive with a timer-driven renewal that runs on the same event loop. The default lock expiration (lockDuration) is 30s, and the worker renews the lock every half of that duration (lockRenewTime, 15s by default). A synchronous block makes the worker miss its scheduled renewal, and once the block outlasts the time left on the lock, the lock expires in Redis: that takes anywhere from roughly 15s of blocking (if the block starts just as a renewal is due) to 30s (if it starts right after one). Furthermore, because the worker is ultimately killed via SIGKILL, it never gracefully sends a NACK (Negative Acknowledgement) back to the Redis queue.
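The timing window is simple arithmetic. This sketch models it under stated simplifications: the overdue renewal fires the instant the block ends, and Redis latency is ignored. `lockExpires` is a hypothetical helper, and the constants mirror the BullMQ defaults described above.

```typescript
// Sketch: does a synchronous block cost the worker its BullMQ job lock?
// Simplified model (assumption): the lock was last extended `sinceRenewalMs`
// before the block began, the overdue renewal fires as soon as the block ends,
// and Redis round-trip time is ignored.
const LOCK_DURATION_MS = 30_000;            // BullMQ default lockDuration
const LOCK_RENEW_MS = LOCK_DURATION_MS / 2; // default renewal cadence

function lockExpires(blockMs: number, sinceRenewalMs: number, lockDurationMs = LOCK_DURATION_MS): boolean {
  // The lock in Redis expires lockDurationMs after the last successful renewal.
  return sinceRenewalMs + blockMs >= lockDurationMs;
}

// Shortest block that loses the lock, depending on where in the cycle it starts.
const justRenewed = LOCK_DURATION_MS;                // block starts right after a renewal
const renewalDue = LOCK_DURATION_MS - LOCK_RENEW_MS; // block starts just as a renewal is due
console.log({ justRenewed, renewalDue }); // { justRenewed: 30000, renewalDue: 15000 }
```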

Phase 5: Split-Brain & Re-queue Cascade

The failure culminates in a cluster-wide crash loop. BullMQ’s stalled job sweeper process identifies that the job lock has expired without acknowledgement. Acting as designed, the sweeper reclaims the unacknowledged job, removes it from the Active set, and pushes it back into the Wait queue.

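Almost immediately, a newly provisioned or idle n8n worker pod picks up this “poisoned” job. The new worker reproduces the exact same synchronous CPU block, starving its own event loop, failing its own health probes, and eventually suffering a SIGKILL. The cycle repeats every time the stalled job is recovered, until it exceeds BullMQ's maxStalledCount and is moved to the failed set; with a permissive maxStalledCount, or several oversized jobs in flight, it tears down worker pods as fast as Kubernetes can schedule them.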

Edge Cases & Hard Limits: V8 Garbage Collection Thrashing

The duration of the event loop block is not solely determined by the computational complexity of the userland JavaScript. Platform engineers must model the compounding overhead of the V8 Garbage Collector (GC).

V8 uses a generational garbage collector: a scavenger for short-lived objects and a mark-compact collector for the old generation. Despite incremental and concurrent marking, major collections still include “Stop-the-World” pauses on the main thread. As an n8n Code node iterates through a massive JSON array, allocating hundreds of thousands of new objects, the process rapidly consumes heap memory. If the payload approaches the V8 old-space limit (roughly 1.4GB on older 64-bit Node.js releases; newer releases size the default from available memory, and --max-old-space-size overrides it), the GC is forced into aggressive overdrive.

Before the process either aborts with a V8 “JavaScript heap out of memory” error or is OOMKilled for exceeding its container memory limit, V8 repeatedly pauses all JavaScript execution to attempt emergency memory reclamation. This GC thrashing compounds the existing synchronous execution, sharply lengthening the event loop block and making a K8s probe timeout all but certain.
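Because the default ceiling depends on the Node.js version and flags, it is worth measuring rather than assuming. This sketch uses Node's built-in `v8.getHeapStatistics()` to report the limit the running process actually has; run it with the same Node.js version and NODE_OPTIONS as the worker image. The report shape and the `heapReport` name are illustrative.

```typescript
// Sketch: inspect the heap ceiling V8 actually applies in this process,
// rather than assuming a fixed default.
import { getHeapStatistics } from "node:v8";

interface HeapReport {
  usedMb: number;
  limitMb: number;
  usedRatio: number;
}

function heapReport(): HeapReport {
  const stats = getHeapStatistics();
  const toMb = (bytes: number): number => Math.round(bytes / (1024 * 1024));
  return {
    usedMb: toMb(stats.used_heap_size),
    limitMb: toMb(stats.heap_size_limit),
    // Fraction of the hard limit in use; as this approaches 1.0,
    // expect increasingly frequent full (mark-compact) collections.
    usedRatio: stats.used_heap_size / stats.heap_size_limit,
  };
}

console.log(heapReport());
```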

Actionable Remediation Strategies

Fixing this architectural flaw requires acknowledging that CPU-bound operations in a single-threaded runtime cannot be “optimized” away—they must be isolated, batched, and decoupled from critical infrastructure lifecycles.

1. Tuning K8s Probes vs. BullMQ Lock Durations

The immediate mitigation is infrastructure alignment. You must resolve the impedance mismatch by decoupling the Kubelet liveness probe from transient event loop lag, and synchronizing Kubernetes termination limits with the BullMQ stalled job configuration.

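Kubernetes Deployment Configuration: set the worker's livenessProbe to timeoutSeconds: 10, periodSeconds: 10, and failureThreshold: 4, and raise the pod's terminationGracePeriodSeconds to 60 so that it exceeds the 30s BullMQ lockDuration.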
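Before rolling these values out, the timing relationships can be checked mechanically. The TypeScript sketch below encodes the settings as plain objects rather than a Deployment manifest; the field names match the Kubernetes fields of the same names, while `alignmentProblems` and the 5 s `maxExpectedLagSec` budget are hypothetical and should be replaced with lag measured on your own workloads.

```typescript
// Sketch: check probe and grace-period settings against BullMQ's lock duration.
// maxExpectedLagSec is a hypothetical budget for worst-case event-loop lag.
interface LivenessSettings {
  timeoutSeconds: number;
  periodSeconds: number;
  failureThreshold: number;
  terminationGracePeriodSeconds: number;
}

function alignmentProblems(s: LivenessSettings, lockDurationMs: number, maxExpectedLagSec: number): string[] {
  const problems: string[] = [];
  if (s.timeoutSeconds < maxExpectedLagSec) {
    problems.push(`timeoutSeconds ${s.timeoutSeconds}s is below the expected event-loop lag of ${maxExpectedLagSec}s`);
  }
  if (s.terminationGracePeriodSeconds * 1000 <= lockDurationMs) {
    problems.push(
      `terminationGracePeriodSeconds ${s.terminationGracePeriodSeconds}s does not exceed the ${lockDurationMs / 1000}s BullMQ lock`,
    );
  }
  return problems;
}

const kubernetesDefaults: LivenessSettings = { timeoutSeconds: 1, periodSeconds: 10, failureThreshold: 3, terminationGracePeriodSeconds: 30 };
const tuned: LivenessSettings = { timeoutSeconds: 10, periodSeconds: 10, failureThreshold: 4, terminationGracePeriodSeconds: 60 };

console.log(alignmentProblems(kubernetesDefaults, 30_000, 5)); // two problems
console.log(alignmentProblems(tuned, 30_000, 5));              // []
// Approximate window of consecutive probe failures before a restart, in seconds:
console.log(tuned.periodSeconds * tuned.failureThreshold);     // 40
```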

2. Event Loop Yielding via Workflow Architecture

While infrastructure tuning treats the symptoms, application-layer refactoring solves the core compute problem. The synchronous CPU block must be fractured to allow libuv to breathe.

Instead of processing a multi-megabyte array entirely within a single Code node execution block, refactor the n8n workflow to use the Loop Over Items node (formerly Split In Batches).

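The Mechanics of Async Boundaries in n8n: Within n8n’s execution engine, the transition phase between nodes leverages Promises and internal equivalents to setImmediate() or process.nextTick(). The distinction matters: only a macrotask hop such as setImmediate() hands control back to libuv, whereas promise continuations and process.nextTick() callbacks run as microtasks before libuv regains control and therefore do not, by themselves, let the worker answer a probe. By slicing a massive array into chunks (e.g., 500 records per iteration) and passing them back through the workflow graph, the worker yields the main thread back to the libuv event loop at the boundary of every loop iteration, provided the engine crosses a macrotask boundary there.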

This architectural yielding allows the Node.js process to:

- Process pending Express HTTP requests, successfully responding to /healthz probes.

- Run BullMQ's lock-renewal timer and its Redis round-trip, successfully renewing the 30s job lock.

- Give the V8 Garbage Collector a chance to reclaim each chunk's temporary objects between iterations, keeping the live heap well away from the limit where GC thrashing sets in.
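The difference between boundary types can be demonstrated in a few lines. This sketch (plain Node.js; helper names are illustrative) runs five 10 ms slices of synchronous work and checks whether a zero-delay timer, standing in for a probe or lock renewal, gets to run between slices. Awaiting a resolved promise or a process.nextTick() callback keeps everything in the microtask queue, so the timer waits until all work is done; awaiting setImmediate() returns control to libuv and lets it fire.

```typescript
// Sketch: does a given kind of "async boundary" let a due timer run between
// slices of synchronous work? The timer stands in for a /healthz request or
// a BullMQ lock renewal.
function busyWait(ms: number): void {
  const start = Date.now();
  while (Date.now() - start < ms) {
    // spin: one slice of the CPU-bound transform
  }
}

async function timerRanBetweenChunks(yieldFn: () => Promise<void>, chunks = 5, chunkMs = 10): Promise<boolean> {
  let working = true;
  // Resolves with `true` only if the timer fired while slices were still pending.
  const timerResult = new Promise<boolean>((resolve) => {
    setTimeout(() => resolve(working), 0);
  });
  for (let i = 0; i < chunks; i++) {
    busyWait(chunkMs);
    await yieldFn(); // the boundary under test
  }
  working = false;
  return timerResult;
}

// Microtask boundaries: drained before libuv regains control.
const promiseYield = (): Promise<void> => Promise.resolve();
const nextTickYield = (): Promise<void> => new Promise<void>((resolve) => process.nextTick(resolve));
// Macrotask boundary: control returns to libuv, so due timers and I/O get a turn.
const immediateYield = (): Promise<void> => new Promise<void>((resolve) => setImmediate(resolve));
```

Run one variant at a time: expect `false` for `promiseYield` and `nextTickYield`, and `true` for `immediateYield`. Whether a particular n8n version hops through setImmediate() between node executions is worth confirming against its source before relying on it.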

Code Node Refactoring Example:
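The same principle can be applied inside a single transform when splitting the workflow is impractical. This is a sketch, not n8n's implementation: it processes n8n-style items (`{ json: ... }`) in chunks of 500 and awaits setImmediate() between chunks. It assumes the code runs on the worker's main thread and that setImmediate() is reachable from your Code node environment, which depends on how your n8n version sandboxes Code nodes; `mapInChunks` and `transformRecord` are hypothetical names.

```typescript
// Sketch: chunked processing of n8n-style items with a macrotask yield between
// chunks, mirroring what a Loop Over Items node with batch size 500 achieves.
interface Item {
  json: Record<string, unknown>;
}

const yieldToEventLoop = (): Promise<void> => new Promise<void>((resolve) => setImmediate(resolve));

async function mapInChunks(items: Item[], chunkSize: number, fn: (item: Item) => Item): Promise<Item[]> {
  if (!Number.isInteger(chunkSize) || chunkSize <= 0) {
    throw new RangeError("chunkSize must be a positive integer");
  }
  const out: Item[] = [];
  for (let start = 0; start < items.length; start += chunkSize) {
    // Bounded synchronous slice: its cost scales with chunkSize, not payload size.
    for (const item of items.slice(start, start + chunkSize)) {
      out.push(fn(item));
    }
    // Macrotask boundary: pending probe requests and lock renewals can run here.
    await yieldToEventLoop();
  }
  return out;
}

// Hypothetical per-record transform.
function transformRecord(item: Item): Item {
  return { json: { ...item.json, processed: true } };
}

const input: Item[] = Array.from({ length: 2_000 }, (_, id) => ({ json: { id } }));
mapInChunks(input, 500, transformRecord).then((result) => {
  console.log(result.length, result[0].json); // 2000 { id: 0, processed: true }
});
```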

3. Advanced Worker Routing (Execution Isolation)

For enterprise multi-tenant environments, relying solely on workflow developers to implement proper batching is a brittle operational strategy. If specific workflows are inherently CPU-bound and batching is not architecturally viable, platform engineers must enforce compute isolation at the infrastructure layer.

By utilizing n8n Advanced Worker Routing (available in v1.x+ Enterprise deployments) in conjunction with Kubernetes Node Affinities, you can provision a dedicated, isolated pool of “Heavy Compute” workers. This guarantees that intensive data transforms never starve the event loops of latency-sensitive Webhook or API worker pods.

n8n Environment Variable Configuration: Instruct specific worker deployments to subscribe exclusively to a dedicated Redis queue.
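The routing policy behind this setup can be sketched independently of n8n's configuration. In the TypeScript below, each workflow run is assigned to a queue at enqueue time based on a hypothetical `heavy-compute` tag, and each worker pool consumes exactly one queue. `heavy_compute_queue` matches the queue name in the matrix; `default_queue`, the tag, the pool names, and the functions are illustrative assumptions, not n8n's configuration API.

```typescript
// Sketch of a routing policy only: choose the queue at enqueue time and have
// each worker pool consume exactly one queue. All names are hypothetical.
type QueueName = "default_queue" | "heavy_compute_queue";

interface WorkflowRun {
  workflowId: string;
  tags: string[];
}

interface WorkerPool {
  name: string;
  queue: QueueName; // each pool subscribes to exactly one queue
}

const HEAVY_TAG = "heavy-compute"; // hypothetical tag set by workflow authors

function routeRun(run: WorkflowRun): QueueName {
  return run.tags.includes(HEAVY_TAG) ? "heavy_compute_queue" : "default_queue";
}

function poolsFor(run: WorkflowRun, pools: WorkerPool[]): string[] {
  const queue = routeRun(run);
  return pools.filter((p) => p.queue === queue).map((p) => p.name);
}

const pools: WorkerPool[] = [
  { name: "api-workers", queue: "default_queue" },
  { name: "heavy-compute-workers", queue: "heavy_compute_queue" },
];

console.log(poolsFor({ workflowId: "wf-1", tags: ["heavy-compute"] }, pools)); // [ 'heavy-compute-workers' ]
console.log(poolsFor({ workflowId: "wf-2", tags: [] }, pools));                // [ 'api-workers' ]
```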

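Kubernetes Isolation (Taints, Tolerations, and Node Affinity): Schedule these isolated workers strictly on dedicated Kubernetes nodes built on compute-optimized instance types (such as AWS c6i), which offer a higher vCPU-to-memory ratio and strong single-core performance. Because a Node.js worker executes JavaScript on a single main thread, faster per-core execution shortens, though does not eliminate, each synchronous block. Apply taints to these nodes to repel standard API workloads, and give the heavy-compute workers matching tolerations plus node affinity so they land there.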
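The scheduling rule behind taints can be sketched as well. This simplified TypeScript model of Kubernetes taint/toleration matching ignores tolerationSeconds and treats PreferNoSchedule as non-blocking; it shows why API workers without a matching toleration are kept off the compute nodes. Taints only repel, so the heavy-compute workers still need node affinity or a nodeSelector to be drawn onto those nodes. The `workload=heavy-compute` taint is a hypothetical example.

```typescript
// Simplified sketch of Kubernetes taint/toleration matching:
// "may this pod be scheduled onto this node?" considering hard taints only.
type Effect = "NoSchedule" | "PreferNoSchedule" | "NoExecute";

interface Taint { key: string; value?: string; effect: Effect; }
interface Toleration { key?: string; operator?: "Equal" | "Exists"; value?: string; effect?: Effect; }

function tolerates(t: Toleration, taint: Taint): boolean {
  // An empty toleration effect matches every effect.
  if (t.effect !== undefined && t.effect !== taint.effect) return false;
  const operator = t.operator ?? "Equal";
  // An empty key is only valid with Exists, where it matches every taint.
  if (t.key === undefined || t.key === "") return operator === "Exists";
  if (t.key !== taint.key) return false;
  return operator === "Exists" || (t.value ?? "") === (taint.value ?? "");
}

function canSchedule(tolerations: Toleration[], nodeTaints: Taint[]): boolean {
  // PreferNoSchedule is only a soft preference, so it never blocks placement here.
  return nodeTaints
    .filter((taint) => taint.effect !== "PreferNoSchedule")
    .every((taint) => tolerations.some((t) => tolerates(t, taint)));
}

const computeNodeTaints: Taint[] = [{ key: "workload", value: "heavy-compute", effect: "NoSchedule" }];
const heavyWorkerTolerations: Toleration[] = [
  { key: "workload", operator: "Equal", value: "heavy-compute", effect: "NoSchedule" },
];

console.log(canSchedule(heavyWorkerTolerations, computeNodeTaints)); // true
console.log(canSchedule([], computeNodeTaints));                     // false: API workers are repelled
```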

The 'Before vs After' Performance Matrix

Relying on out-of-the-box defaults for high-throughput orchestration inevitably leads to systemic fragility. The following matrix illustrates the transition from a default, crash-prone configuration to a resilient, enterprise-grade architecture based on the thresholds detailed in our research.

| System Parameter | Fragile Default Architecture | Resilient "Azguards" Architecture | Engineering Impact |
|---|---|---|---|
| K8s Probe Timeout (timeoutSeconds) | 1s (the Kubernetes default, often applied blindly) | 10s | Absorbs heavy V8 event loop lag without triggering false-positive pod failures. |
| K8s Failure Threshold (failureThreshold) | Low (e.g., 1-3 attempts; the Kubernetes default is 3) | 4 (about 40s of consecutive failures at periodSeconds: 10) | Confirms that the process is truly deadlocked, not just processing a heavy array block. |
| Termination Grace Period (terminationGracePeriodSeconds) | 30s (the Kubernetes default) | 60s | Exceeds the 30s BullMQ lock, giving the worker time to finish the blocking step, catch SIGTERM, and issue NACKs. |
| Payload Execution Topology | Monolithic Array.map() | Chunked (500 records) via Loop Over Items node | Fractures the CPU block; yields the loop for /healthz checks, lock renewals, and V8 GC sweeps. |
| V8 Heap Constraint | Thrashing near the heap limit (~1.4GB on older Node.js defaults) | Controlled via chunking boundaries | Avoids "Stop-the-World" garbage collection overhead compounding the loop block. |
| Worker Queue Routing | Global (default) | Isolated (heavy_compute_queue) | Prevents CPU-bound jobs from suffocating fast I/O Webhook and API workers. |
| Hardware Targeting | General purpose (e.g., m5) | Compute optimized (e.g., c6i) | Stronger single-core performance shortens the baseline synchronous execution time of JS payloads. |

By explicitly aligning the timing mechanisms across the cluster—from the Kubelet down to the BullMQ Redis state—engineers eliminate the race conditions that cause stalled job re-queuing.

Performance Audits and Specialized Engineering with Azguards Technolabs

Scaling n8n beyond departmental automation into a Tier-1 enterprise middleware layer requires specialized knowledge of Node.js internals, asynchronous state management, and Kubernetes scheduler mechanics. The “Event Loop Trap” is just one example of the complex, distributed systems challenges that emerge when workflow engines process massive data ingestion pipelines.

At Azguards Technolabs, we function as the specialized engineering partner for platform teams dealing with these exact “Hard Parts.” We do not just deploy infrastructure; we conduct deep-dive Performance Audits and execute Specialized Engineering interventions. Whether it is profiling V8 heap allocations in your production pods, architecting custom advanced worker routing topologies, or tuning your distributed state stores, Azguards ensures your n8n infrastructure scales with absolute determinism.

We bridge the gap between application-level workflow logic and low-level cluster orchestration, ensuring your mission-critical automations never succumb to architectural failure modes.

Conclusion

The crash loop cascade caused by CPU-bound transforms in n8n is a symptom of a fundamental architectural clash: synchronous userland execution colliding with asynchronous infrastructure probes. By implementing the three layers detailed above—infrastructure timeout tuning, architectural batching to yield libuv, and workload isolation for heavy compute—platform teams can permanently eliminate this split-brain failure vector.

Stop treating workflow orchestration as a black box and start engineering it as a highly tuned distributed system.

Is your cluster suffering from unexplained probe timeouts, stalled jobs, or erratic scaling during heavy data ingestion? Contact the systems engineering experts at Azguards Technolabs for a comprehensive architectural review and specialized enterprise implementation.
