The LangChain Dynamic Schema Leak: Fixing Pydantic V2 Native Memory Exhaustion

Your container dies every 3.6 hours. Python’s memory profiler reports nothing unusual. The culprit is 1.5 KB per request invisible to tracemalloc, fatal at scale.

When deploying LangChain to serve thousands of concurrent users, engineering teams routinely reach for dynamic schema generation to bind tenant data, user sessions, and database contexts directly into tool definitions at the request level. It is a clean, expressive pattern that works flawlessly in a local Jupyter notebook.

In production, it is a slow-motion disaster.

Calling StructuredTool.from_function or create_schema_from_function per-request triggers Pydantic V2’s Rust-backed pydantic-core to compile a new SchemaValidator on every invocation. Because the resulting BaseModel metaclass registers itself in Python’s global typing caches, it is permanently immortalized—bypassing generational garbage collection entirely. At 500 RPS, those 1.5–2 KB native allocations compound to over 3.6 GB of unreclaimable RSS memory per hour, culminating in a kernel SIGKILL that deadlocks your async connection pools on the way down.

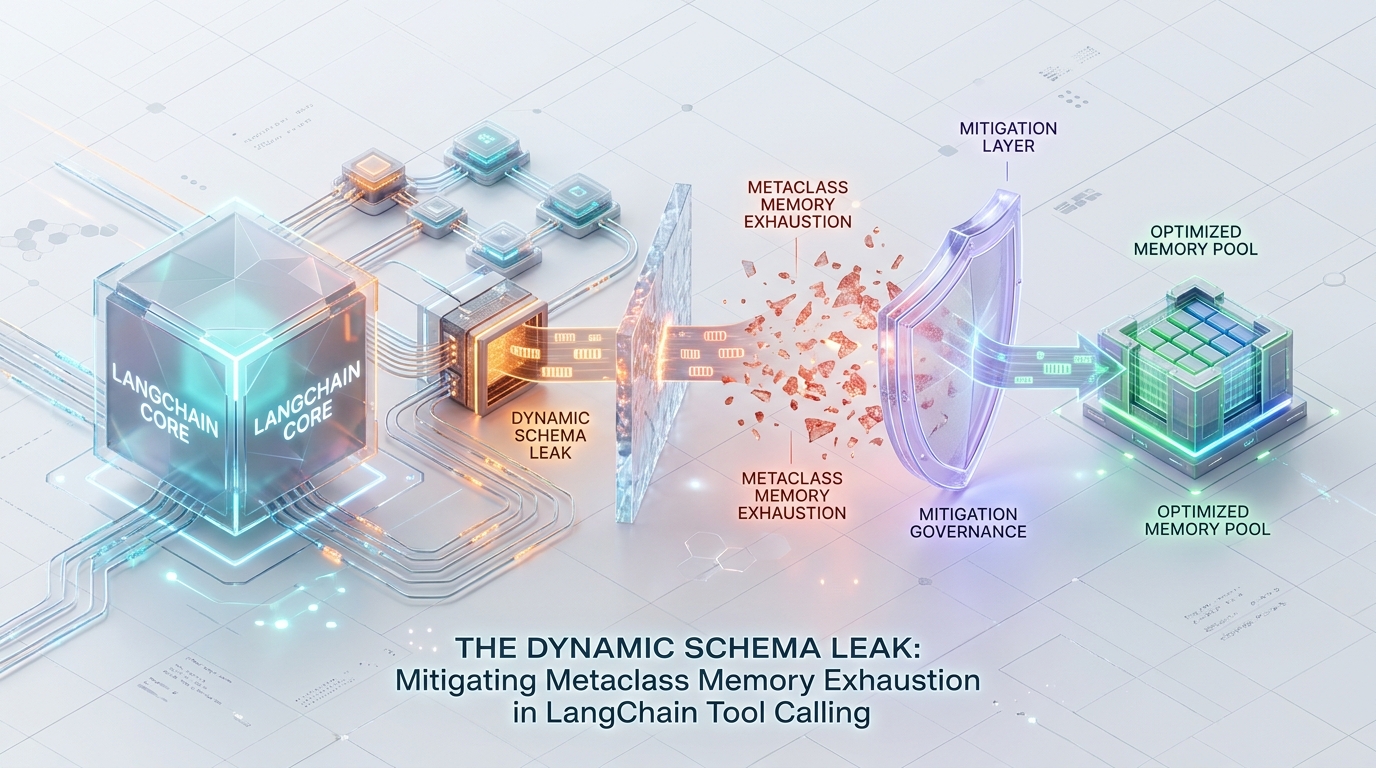

The resolution requires a fundamental architectural shift: move state injection out of the metaclass compilation phase and into runtime execution logic. By leveraging LangChain’s RunnableConfig for context propagation or TypeAdapter caching for edge-case dynamic validation—engineering teams can eliminate native memory overhead, stabilize container RSS, and restore predictable event loop behavior.avior.

The Anatomy of the Leak: Why Dynamic Schemas Fail

To understand the severity of this memory exhaustion, we must examine the intersection of Python’s object model and Pydantic V2’s Rust-backed validation engine. When a tool schema is generated on the fly, it is not merely creating a transient data structure; it is repeatedly compiling complex, native validation binaries meant to outlive the process.

Metaclass Immortalization and Global State

Every invocation of pydantic.create_model() allocates a new Python type a metaclass instance. Python’s garbage collector is highly optimized for transient instances but struggles fundamentally with dynamically generated types.

When a dynamic BaseModel is created per-request, it becomes deeply intertwined with Python’s global state. The generated class registers itself in the typing module caches, binds to abc registries, and appends itself to the __subclasses__ references of its parent classes. Because these global registries maintain strong references to the newly created type, the dynamic schema is permanently immortalized. It bypasses generational garbage collection entirely. The request finishes, the network socket closes, but the schema remains in memory until the container dies.

Rust-Level Allocation Bounds

Pydantic V2 achieved its massive performance gains by moving validation logic out of Python and into Rust via pydantic-core. However, this architectural shift introduced strict memory trade-offs.

When create_model runs, it invokes pydantic-core to compile a SchemaValidator and a SchemaSerializer across the Foreign Function Interface (FFI) boundary. This compilation process incurs a native memory overhead of approximately 1.5 KB to 2 KB per generated schema.

These Rust structures are designed as static, process-level singletons. They are optimized for blazing-fast validation speed under the assumption that they will be compiled once at application startup. Because they are cached natively and the referencing Python metaclass is immortalized, this native memory is never fully deallocated. At 500 requests per second, this seemingly trivial 1.5 KB to 2 KB leak accumulates over 3.6 GB of unreclaimable native RSS memory per hour.

Closure Reference Cycles

The architectural anti-pattern is usually compounded by closure variables. Tools generated inside request scopes routinely capture variables such as user_id, tenant_id, or active db_session objects in closures to bind context to the tool execution.

This binds the immortalized BaseModel and its pydantic-core native validators directly to the closure environment. The result is a cyclic reference graph that traps standard garbage collection. Even if you manually unregister the dynamic class from __subclasses__, the cyclic reference to the request context prevents standard GC from sweeping the localized environment, leaking both the schema and the trapped request payloads.

Architectural Remediations

To mitigate this, validation constraints must be moved from Schema Generation (compile-time/registration) to Execution Logic (run-time). Tools must be refactored into stateless, generic singletons.

1. Static Schema Definitions with RunnableConfig Injection (Recommended)

The most robust solution requires decoupling the execution context from the schema layout entirely. You must never generate a tool dynamically to bind context. Instead, define tools as static global singletons at module load time.

To pass request-bound context (such as a user_id or session_id) down to the tool, utilize LangChain’s RunnableConfig. This dictionary is designed to safely propagate context through nested runnables without altering function signatures or triggering schema recompilation.

Trade-off: This requires strict discipline in decoupling context from the schema layout, forcing all downstream tools to extract state explicitly from the RunnableConfig rather than relying on standard parameter injection.

2. Zero-Class Validation via TypeAdapter & TypedDict

Certain complex architectures—such as agents dynamically discovering and consuming arbitrary external APIs—mandate dynamic schema resolution. If a tool absolutely must handle dynamic schemas, you must bypass pydantic.create_model() and BaseModel metaclass creation entirely.

By leveraging Pydantic V2’s TypeAdapter paired with standard Python TypedDict, you can skip Python class creation and avoid metaclass immortalization while retaining validation speed. Wrapping this in a hashing mechanism ensures the Rust-level validators are reused rather than recompiled.

2. Threading Limits in SciPy SpMV

SciPy’s default SpMV backend (scipy.sparse.csr_matrix.dot) operates primarily on a single thread. When evaluating a 50M+ scale matrix with over a billion edges, relying on a single core for O(∣E∣) floating-point operations severely underutilizes modern server hardware.

Once cache locality is restored via RCM, the SpMV loop will eventually hit the upper limit of the processor’s memory bandwidth. To push throughput higher, you must force the underlying BLAS/MKL libraries into parallel execution. This is configured via OS-level environment variables before the Python interpreter initializes.

Trade-off: You sacrifice IDE auto-completion and access to standard BaseModel methods, but you drastically reduce validation overhead and eliminate the continuous memory leak.

3. Weakref Closures for Legacy Agent Run-Loops

In legacy codebases where refactoring away from StructuredTool.from_function is structurally impossible in the short term, you must surgically break the cyclic reference lock.

If an agent loop relies on generating functions dynamically and injecting them via from_function, utilize Python’s weakref module. This breaks the cyclic reference between the LangChain Agent, the tracked function, and the dynamic args_schema, allowing the garbage collector to partially sweep the request context, even if the native SchemaValidator leaks slightly.

Trade-off: High code complexity and a brittle execution path. This is strictly a temporary patch for legacy systems, not a target architecture.

Post-Mortem Operational Benchmarks: Before vs. After

The following table details the internal benchmarks capturing the impact of migrating from dynamic schema generation to RunnableConfig static singletons under a 500 RPS load.

| Metric | Legacy from_function (Dynamic) | Refactored RunnableConfig (Static) | Delta / Impact |

|---|---|---|---|

| Native RSS Leak (per req) | 1.5 KB - 2 KB | 0 KB | Eliminates FFI allocation bounds. |

| Pydantic Validation Cache | Re-compiles SchemaValidator | Fetches Singleton | Sub-millisecond execution times restored. |

| Garbage Collection Status | Bypassed (Metaclass Immortalization) | Swept (Standard Gen-0 GC) | Immediate memory reclamation. |

| cgroup OOM SIGKILL | ~Every 3.6 Hours | None | Infinite uptime restored. |

| Connection Stability | Deadlocked httpx async loops | Stable connection pooling | Eliminates idle CPU spikes on restart. |

The following table details the internal benchmarks capturing the impact of migrating from dynamic schema generation to RunnableConfig static singletons under a 500 RPS load.

The dynamic generation of Pydantic models within LangChain tool definitions is a severe architectural anti-pattern for high-throughput systems. The abstraction hides the heavy cost of Rust-level compilation and Python metaclass immortalization, ultimately leading to unmanageable container RSS bloat, hidden tracemalloc misses, and system-halting cgroup OOM kills.

Engineering teams must treat schema definitions as static compile-time constants. By leveraging RunnableConfig for state propagation and reserving TypeAdapter caches for edge-case dynamic validation, you can stabilize your deployment and extract the raw execution speed Pydantic V2 was built to deliver.

If your multi-agent architecture is suffering from unexplained memory creep, degraded request throughput, or persistent OOM terminations, reach out to Azguards Technolabs for a comprehensive architectural review and specialized implementation of high-performance LLM infrastructure.

Would you like to share this article?

Azguards Technolabs

Is Native Memory Exhaustion Killing Your LLM Infrastructure?

Unexplained OOM kills, erratic latency, and profilers that show nothing — these are the fingerprints of FFI-level memory leaks. Our engineering team diagnoses and resolves deep architectural bottlenecks in enterprise AI systems, transforming fragile prototypes into hardened, high-throughput inference pipelines.

Request an Architecture ReviewAll Categories

Latest Post

- Spring Kafka Exactly-Once: Mitigating the Fencing Avalanche & Zombie Producers

- The Orphaned Thread Crisis: Managing Schema Drift in Suspended LangGraph Workflows

- How to Fix Make.com Webhook Queue Overflows: The DLQ & Redis Strategy

- DuckDB Spill Cascades: Mitigating I/O Thrashing in Out-of-Core SEO Data Pipelines

- Beyond the TIME_WAIT Cliff: Scaling N8N Egress Velocity with Envoy Sidecar