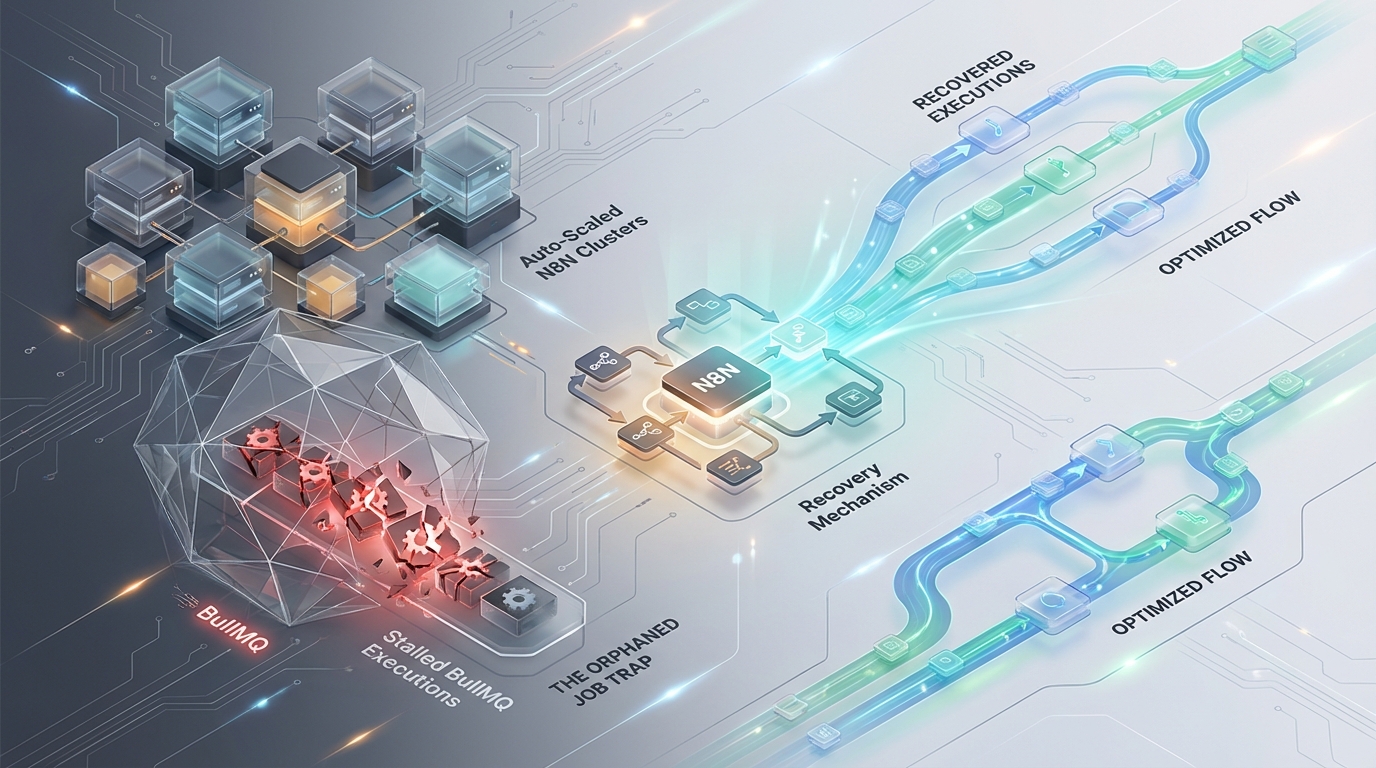

The Orphaned Job Trap: Recovering Stalled BullMQ Executions in Auto-Scaled N8N Clusters

Distributed task queues operate on a spectrum between throughput and reliability. When deploying N8N in distributed queue mode to handle enterprise workflow automation, platform engineers rely on a Redis-backed BullMQ architecture to distribute execution load across scalable worker nodes.

This architecture performs predictably under static loads. However, introduce Kubernetes Horizontal Pod Autoscaling (HPA), Spot Instance preemption, and high-CPU Node.js workloads, and a critical failure mode emerges: the orphaned job trap.

Abrupt process terminations and Node.js event loop starvation strip away BullMQ’s execution guarantees. Downstream APIs suffer concurrent double-executions, queue sweepers fall behind, and executions remain trapped in a zombie state. Resolving this requires synchronizing Kubernetes lifecycle hooks with application-level graceful shutdown windows, tuning internal Redis lock mechanics, and enforcing strict idempotency boundaries.

This engineering brief dissects the state machine mechanics behind BullMQ stalled jobs in N8N and provides the exact architectural configurations required to harden the deployment against distributed failure.

The Mechanics of a Stalled Job in BullMQ

To engineer a resilient N8N cluster, we must first establish the precise lifecycle of a distributed job within the BullMQ state machine. Out-of-the-box, N8N does not guarantee exactly-once processing when node processes terminate unexpectedly.

The active to stalled State Transition

BullMQ relies on a combination of Redis lists, sets, and key expiry mechanisms to track state. The transition of a job from ingestion to an orphaned or stalled state follows a strict chronological path:

- Job Ingestion via Atomic Transfer: An N8N worker polls the queue by executing a blocking Redis `BRPOPLPUSH` operation. This command atomically pops a job from the `bull:n8n:jobs:wait` list and pushes it into the `bull:n8n:jobs:active` list. The atomic nature ensures that if the worker crashes milliseconds later, the job is not lost in transit.
- Lock Acquisition: Upon moving the job to the `active` list, the worker immediately sets a Redis string key (`bull:n8n:jobs:lock:`). The Time-To-Live (TTL) of this key is defined by the `QUEUE_WORKER_LOCK_DURATION` environment variable (defaulting to 60000ms).
- Heartbeat Maintenance: To signal that the worker is actively processing the job, the worker process starts a background `setInterval` timer on the Node.js event loop. This timer executes a Redis command to renew the lock's TTL every `QUEUE_WORKER_LOCK_RENEW_TIME` (defaulting to 10000ms).
- The Stall Event: If the heartbeat timer fails to fire, the lock TTL keeps counting down. Once it reaches zero, Redis expires the lock key. The job remains in the `active` list, but it is now effectively orphaned.
- Recovery Check via Sweeper: A secondary process (either another worker or the main N8N instance) maintains a sweeping routine triggered by its `QUEUE_WORKER_STALLED_INTERVAL` timer. When this sweeper scans the `active` list, it correlates job IDs against existing lock keys. Any job ID lacking a corresponding lock key is classified as “stalled.”
- Re-Queueing and Failure Routing: The stalled job is moved back to the `wait` list, and its internal stalled counter increments. If this cycle repeats and the counter exceeds the `maxStalledCount` threshold, BullMQ moves the execution to the `failed` list with the failure reason `"job stalled more than allowable limit"`.
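The lifecycle above can be modelled in a few lines. The following is a toy, in-memory sketch rather than BullMQ code: the `ToyQueue` class, its method names, and the explicit `now` timestamps are all hypothetical, chosen only to make the lock, heartbeat, and sweeper interactions visible without a Redis instance.

```typescript
// Toy in-memory model of the job lifecycle. NOT BullMQ code:
// ToyQueue and its methods are hypothetical names for illustration.
type JobId = string;

class ToyQueue {
  wait: JobId[] = [];
  active: JobId[] = [];
  failed: JobId[] = [];
  private lockExpiry = new Map<JobId, number>(); // jobId -> expiry timestamp (ms)
  private stalledCount = new Map<JobId, number>();
  private lockDurationMs: number;
  private maxStalledCount: number;

  constructor(lockDurationMs: number, maxStalledCount: number) {
    this.lockDurationMs = lockDurationMs;
    this.maxStalledCount = maxStalledCount;
  }

  add(id: JobId): void {
    this.wait.push(id);
  }

  // Ingestion + lock acquisition: move wait -> active and set a lock with a TTL.
  take(now: number): JobId | undefined {
    const id = this.wait.shift();
    if (id === undefined) return undefined;
    this.active.push(id);
    this.lockExpiry.set(id, now + this.lockDurationMs);
    return id;
  }

  // Heartbeat: extend the lock, but only if it has not already expired.
  renew(id: JobId, now: number): boolean {
    const expiry = this.lockExpiry.get(id);
    if (expiry === undefined || expiry <= now) return false;
    this.lockExpiry.set(id, now + this.lockDurationMs);
    return true;
  }

  // Sweeper: any active job without a live lock is stalled.
  sweep(now: number): void {
    for (const id of [...this.active]) {
      const expiry = this.lockExpiry.get(id);
      if (expiry !== undefined && expiry > now) continue; // lock still live
      this.lockExpiry.delete(id);
      this.active = this.active.filter((j) => j !== id);
      const count = (this.stalledCount.get(id) ?? 0) + 1;
      this.stalledCount.set(id, count);
      if (count > this.maxStalledCount) this.failed.push(id);
      else this.wait.push(id);
    }
  }
}

const queue = new ToyQueue(60000, 1);
queue.add("job-1");
queue.take(0);        // lock expires at t=60000
queue.sweep(30000);   // lock still live, job stays active
queue.sweep(90000);   // lock expired: first stall, job returns to wait
queue.take(90000);    // a second worker picks it up
queue.sweep(180000);  // second stall exceeds maxStalledCount=1
console.log(queue.failed); // [ 'job-1' ]
```

Note that nothing in the model notifies the original worker when its lock is lost. The sweeper only observes the absence of the lock, which is why the double-execution scenarios described later are possible.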

The Node.js Event Loop Trap

A localized failure mode frequently observed in N8N occurs entirely independently of Kubernetes pod scaling. It originates from the architectural design of Node.js itself.

Because Node.js runs JavaScript on a single main thread, CPU-bound workflow executions monopolize it. When an N8N worker processes heavy JSON payload transformations, intensive regex parsing, or local image manipulations, the synchronous work blocks the event loop, and no timer callback can run until that work returns. If the block outlasts the remaining lock TTL (at most QUEUE_WORKER_LOCK_DURATION), the lock expires before the worker gets a chance to renew it.

Consequently, the background lock renewal timer remains blocked in the callback queue. BullMQ’s remote sweeper observes the expired lock, declares the job stalled, and re-queues it for another worker. When the original worker finally finishes its synchronous processing and regains the event loop, it continues executing the job. This directly results in concurrent double-execution of the identical N8N workflow by two distinct worker nodes.
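The starvation effect is easy to reproduce in isolation. The sketch below uses a hypothetical helper, `heartbeatsDuringSyncBlock`, with a busy loop standing in for CPU-bound workflow code. It counts how many times a heartbeat-style `setInterval` callback fires while synchronous work holds the thread.

```typescript
// Count heartbeat ticks that fire while a synchronous block holds the thread.
// heartbeatsDuringSyncBlock is a hypothetical helper, not part of N8N or BullMQ.
function heartbeatsDuringSyncBlock(blockMs: number, heartbeatMs: number): number {
  let ticks = 0;
  const timer = setInterval(() => {
    ticks += 1; // stands in for the lock-renewal call
  }, heartbeatMs);

  const end = Date.now() + blockMs;
  while (Date.now() < end) {
    // Busy loop standing in for CPU-bound work (for example, heavy JSON transforms).
  }

  const observed = ticks; // timer callbacks cannot run until this function returns
  clearInterval(timer);
  return observed;
}

console.log(heartbeatsDuringSyncBlock(200, 10)); // prints 0
```

Even though the interval was due roughly twenty times during the block, none of the callbacks ran. In a worker, each missed callback is a missed lock renewal.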

Kubernetes Preemption Failure Modes

Beyond the application layer, the infrastructure itself actively contributes to the orphaned job trap. When the Kubernetes HPA scales down N8N workers based on depleted queue metrics, or when cloud providers reclaim Spot Instances, the K8s control plane initiates a teardown sequence by issuing a SIGTERM signal to the pod.

The Mismatch Limit

The primary infrastructure failure stems from a race condition between Kubernetes and the N8N application process.

Kubernetes governs pod termination via the terminationGracePeriodSeconds directive. If this grace period is shorter than or strictly equal to N8N’s internal N8N_GRACEFUL_SHUTDOWN_TIMEOUT (default: 30s), a severe mismatch occurs. N8N receives the SIGTERM and attempts to gracefully finish processing its current batch of active workflows. However, before N8N can complete the work or cleanly release the Redis lock, Kubernetes reaches the end of its grace period and ruthlessly issues a SIGKILL.

The Result: The worker process terminates abruptly at the operating system level. The Redis lock is neither explicitly released nor renewed. The in-flight workflow execution becomes an “Orphaned Job,” stuck in the active list until its remaining lockDuration TTL expires and the stalledInterval sweeper recovers it.
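To make the race concrete, here is a minimal sketch of what "finish the current batch, but only up to a deadline" looks like in application code. It is not N8N's actual shutdown implementation; `drainWithDeadline` and `DrainResult` are hypothetical names, and the in-flight executions are represented as plain promises.

```typescript
// Hypothetical drain helper: wait for in-flight work, but give up at a deadline.
type DrainResult = "drained" | "timed_out";

function drainWithDeadline(
  active: Promise<unknown>[],
  timeoutMs: number,
): Promise<DrainResult> {
  let timer: ReturnType<typeof setTimeout> | undefined;

  const deadline = new Promise<DrainResult>((resolve) => {
    timer = setTimeout(() => resolve("timed_out"), timeoutMs);
  });

  // Treat a failed execution as finished: it no longer holds the worker open.
  const settled = Promise.all(active.map((p) => p.catch(() => undefined)));
  const drained = settled.then((): DrainResult => "drained");

  return Promise.race([drained, deadline]).finally(() => {
    if (timer !== undefined) clearTimeout(timer); // do not keep the process alive
  });
}

// Usage sketch inside a SIGTERM handler (assumed wiring, shown as comments):
//   process.on("SIGTERM", async () => {
//     stopPollingForNewJobs();                        // hypothetical
//     const result = await drainWithDeadline(inFlight, 30_000);
//     process.exit(result === "drained" ? 0 : 1);
//   });
```

If Kubernetes sends SIGKILL before this promise resolves, neither branch runs, and the locks held by the in-flight executions are simply left to expire.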

Remediation Strategy 1: Synchronizing Kubernetes and Node Lifecycle Hooks

To prevent ungraceful termination and hardware-level interruption of active executions, the Kubernetes shutdown window must entirely encapsulate the N8N application-level shutdown window. We must enforce a state where N8N stops polling for new jobs immediately upon receiving a SIGTERM, while possessing guaranteed, uninterrupted compute time to flush its existing active executions.

The target configuration is a strict hierarchy of timeouts: a 5-second preStop sleep, a 45-second N8N_GRACEFUL_SHUTDOWN_TIMEOUT, and a 65-second terminationGracePeriodSeconds. The preStop hook forces a 5-second buffer before the SIGTERM is even propagated to the Node.js process. This buffer is critical: it provides time for kube-proxy and the ingress controllers to detach network routes, ensuring no new HTTP webhook traffic is routed to a pod that is shutting down. N8N is then granted 45 seconds to finish its work. Because the preStop sleep counts against the grace period, the worst case is 50 seconds, well within the absolute 65-second K8s kill boundary.
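The timeout hierarchy reduces to one inequality: preStop sleep plus application shutdown timeout must be strictly less than the Kubernetes grace period. The following sketch encodes that check. The `ShutdownBudget` shape and function names are hypothetical, and the values are the ones quoted in this section.

```typescript
// Hypothetical budget check for the shutdown timeout hierarchy.
interface ShutdownBudget {
  preStopSleepSec: number;           // sleep in the preStop hook
  appShutdownTimeoutSec: number;     // N8N_GRACEFUL_SHUTDOWN_TIMEOUT
  terminationGracePeriodSec: number; // terminationGracePeriodSeconds
}

// Kubernetes counts the preStop hook against the grace period, so the hook
// and the application drain must both fit before SIGKILL arrives.
function sigkillHeadroomSec(b: ShutdownBudget): number {
  return b.terminationGracePeriodSec - (b.preStopSleepSec + b.appShutdownTimeoutSec);
}

function isShutdownSafe(b: ShutdownBudget): boolean {
  return sigkillHeadroomSec(b) > 0;
}

const defaultBudget: ShutdownBudget = {
  preStopSleepSec: 0,
  appShutdownTimeoutSec: 30,
  terminationGracePeriodSec: 30,
};
const tunedBudget: ShutdownBudget = {
  preStopSleepSec: 5,
  appShutdownTimeoutSec: 45,
  terminationGracePeriodSec: 65,
};

console.log(sigkillHeadroomSec(defaultBudget), isShutdownSafe(defaultBudget)); // 0 false
console.log(sigkillHeadroomSec(tunedBudget), isShutdownSafe(tunedBudget));     // 15 true
```

A check like this could run in CI against the rendered manifests so that a later change to one timeout cannot silently remove the headroom.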

Remediation Strategy 2: Tuning BullMQ Lock Configuration

The default BullMQ settings inside N8N heavily favor stability over aggressive recovery. They are tuned for environments where jobs run for extended periods without interruption. However, in auto-scaled environments experiencing high preemption rates, these defaults inflate your Mean Time To Recovery (MTTR) for orphaned jobs.

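You must tune the queue internals to balance rapid recovery against the risk of double-processing. The tuned values used in this brief are QUEUE_WORKER_LOCK_DURATION=30000, QUEUE_WORKER_LOCK_RENEW_TIME=5000, and QUEUE_WORKER_STALLED_INTERVAL=15000.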

Architectural Trade-off: By aggressively lowering QUEUE_WORKER_LOCK_DURATION from 60,000ms to 30,000ms, you drastically decrease the time a killed job sits idle before the sweeper picks it up.

However, reducing this threshold below 30,000ms sharply increases the probability of false-positive stalled jobs. The shorter the lock, the shorter the event-loop block it takes to skip every 5,000ms renewal before the TTL runs out; at highly aggressive settings, a CPU spike of just a few seconds is enough. Only reduce the lock duration to highly aggressive levels if your N8N deployment runs strictly high-I/O, low-CPU workflows (e.g., standard API chaining and routing without heavy payload parsing).
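Both sides of this trade-off can be put into numbers. The sketch below is a simplified model with hypothetical function names, not a measurement. It assumes every successful renewal resets the TTL to the full lock duration, and that the worst-case block begins just before a renewal was due. The stalled-interval values (30000ms default, 15000ms tuned) are taken from the benchmark table in this brief.

```typescript
// Simplified model of the lock-tuning trade-off (hypothetical names).
interface LockTuning {
  lockDurationMs: number;    // QUEUE_WORKER_LOCK_DURATION
  lockRenewTimeMs: number;   // QUEUE_WORKER_LOCK_RENEW_TIME
  stalledIntervalMs: number; // QUEUE_WORKER_STALLED_INTERVAL
}

// Upper bound on how long a killed worker's job waits before re-queueing:
// the lock must expire, then the next sweep must run.
function worstCaseRecoveryMs(t: LockTuning): number {
  return t.lockDurationMs + t.stalledIntervalMs;
}

// Longest event-loop block a healthy worker can absorb before its lock
// expires, assuming the block starts just before a renewal was due.
function blockingToleranceMs(t: LockTuning): number {
  return t.lockDurationMs - t.lockRenewTimeMs;
}

const defaultTuning: LockTuning = {
  lockDurationMs: 60000,
  lockRenewTimeMs: 10000,
  stalledIntervalMs: 30000,
};
const tunedTuning: LockTuning = {
  lockDurationMs: 30000,
  lockRenewTimeMs: 5000,
  stalledIntervalMs: 15000,
};

console.log(worstCaseRecoveryMs(defaultTuning), blockingToleranceMs(defaultTuning)); // 90000 50000
console.log(worstCaseRecoveryMs(tunedTuning), blockingToleranceMs(tunedTuning));     // 45000 25000
```

Under these assumptions, halving the lock duration halves both numbers: recovery is faster, but the worker has half as much room for CPU-bound work before a false stall.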

Remediation Strategy 3: Enforcing Workflow Idempotency via Distributed CAS Locks

Modifying Kubernetes and BullMQ settings mitigates the frequency and duration of orphaned jobs, but it does not alter BullMQ’s core recovery behavior. When BullMQ natively recovers a stalled job, it replays that job from the start of the execution state.

If an N8N workflow executed a downstream API mutation (e.g., a POST request to charge a Stripe customer or a database INSERT) before the SIGKILL interrupted the subsequent steps, that mutation will be repeated when the job is replayed.

To achieve exactly-once mutation guarantees in a distributed, at-least-once delivery queue, you must bypass N8N’s internal state mechanism entirely. The solution is enforcing a distributed “Check-and-Set” (CAS) lock using an external Redis architecture.

Implementation Note: Do not mix your queue state with your application state. Utilize a dedicated Redis instance or, at a minimum, a logically separated database index (e.g., setting QUEUE_BULL_REDIS_DB=0 for the BullMQ backend, and using DB=1 for external caching and idempotency locks) to isolate these keys from volatile queue operations.
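A minimal sketch of the check-and-set pattern follows. It is written against a hypothetical `IdempotencyStore` interface so it can run without Redis; in a real deployment, `setIfAbsent` would be backed by the Redis `SET key value NX PX <ttl>` command on the dedicated instance or database index. `InMemoryStore`, `mutateOnce`, and the `idem:` key prefix are illustrative names, not part of N8N or BullMQ.

```typescript
// Hypothetical store contract. With Redis, setIfAbsent maps to
// `SET key value NX PX ttlMs`, which succeeds only if the key does not exist.
interface IdempotencyStore {
  setIfAbsent(key: string, value: string, ttlMs: number): Promise<boolean>;
}

// In-memory stand-in so the sketch is runnable without a Redis server.
class InMemoryStore implements IdempotencyStore {
  private entries = new Map<string, number>(); // key -> expiry timestamp (ms)

  async setIfAbsent(key: string, _value: string, ttlMs: number): Promise<boolean> {
    const now = Date.now();
    const expiry = this.entries.get(key);
    if (expiry !== undefined && expiry > now) return false; // already claimed
    this.entries.set(key, now + ttlMs);
    return true;
  }
}

type MutationOutcome<T> = { executed: true; result: T } | { executed: false };

// Claim the key first; run the mutation only if this caller won the claim.
async function mutateOnce<T>(
  store: IdempotencyStore,
  idempotencyKey: string,
  ttlMs: number,
  mutation: () => Promise<T>,
): Promise<MutationOutcome<T>> {
  const claimed = await store.setIfAbsent(`idem:${idempotencyKey}`, "claimed", ttlMs);
  if (!claimed) return { executed: false }; // a replay or a concurrent worker
  return { executed: true, result: await mutation() };
}
```

The key should be derived from a stable business identifier (for example, an order ID carried in the workflow payload) so that a replayed job produces the same key as the original attempt. This sketch also leaves open what happens when the mutation fails after the claim succeeds: the key then blocks retries until its TTL expires, so a production design would need to release the key or record a completion status.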

Before vs. After: Recovery Metrics & Configuration Benchmarks

To quantify the impact of these architectural shifts, we evaluate the baseline N8N deployment against the optimized, HPA-aware configuration. The data below isolates the specific metric improvements related to lock eviction, termination thresholds, and MTTR.

| System Parameter / Metric | Default Infrastructure State | Optimized Auto-Scaled State | Engineering Impact |
|---|---|---|---|
| K8s Termination Grace Period | 30 seconds (Default) | 65 seconds | Eliminates SIGKILL race condition; guarantees app-level flush. |
| N8N Graceful Shutdown Timeout | 30 seconds (SIGKILL risk) | 45 seconds | Secures 15-second buffer against K8s hard-kill boundary. |
| PreStop Network Buffer | None | 5 second sleep (kube-proxy) | Prevents ingress routing to terminating pods during iptables sync. |
| BullMQ Lock Duration | 60000ms | 30000ms | Halves how long an orphaned lock lingers; also halves the event-loop blocking a worker can absorb before a false stall. |
| BullMQ Lock Renew Time | 10000ms | 5000ms | Increases heartbeat frequency, tightening cluster visibility. |
| Stalled Job Sweep Interval | 30000ms (Default) | 15000ms | Doubles the frequency of zombie job detection. |
| Mean Time To Recovery (MTTR) | ~90 seconds (Lock + Sweep delay) | ~45 seconds | Reduces total downtime for stalled workflow re-queueing by 50%. |

By aligning these metrics, the cluster transitions from a fragile state susceptible to double-processing under heavy load, to a deterministic system that handles node preemption gracefully while strictly bounding the maximum MTTR for any interrupted workflow.

Azguards Technolabs: Performance Audit & Specialized Engineering

Designing fault-tolerant queues is not about configuring software to run perfectly; it is about engineering the system to fail predictably. When enterprise N8N clusters begin dropping executions or duplicating database records, the root cause rarely lies in the workflow logic itself. It is embedded deeply within the infrastructure orchestration, event loop starvation, and distributed state management.

Azguards Technolabs provides specialized engineering and performance audits for high-throughput automation infrastructure. We partner with enterprise platform teams to dissect their underlying architecture, untangle misaligned lifecycle hooks, and re-architect Redis caching layers to ensure that horizontal scaling actually delivers stability, rather than compounding failure modes. We bridge the gap between application logic and low-level infrastructure constraints.

Conclusion

The orphaned job trap in N8N is an inevitable consequence of operating distributed Node.js applications under elastic compute paradigms. BullMQ provides the primitives for resilience, but it relies on the infrastructure engineer to enforce the boundaries. By purposefully mismatching Kubernetes grace periods in favor of the application, halving BullMQ lock durations, and abstracting mutation state into external Check-and-Set Redis keys, you neutralize the threat of double-execution.

Stop accepting stalled queues as a cost of doing business. If your N8N infrastructure is scaling into unreliability, contact Azguards Technolabs for an architectural review and complex implementation audit. Build systems that scale without breaking.
