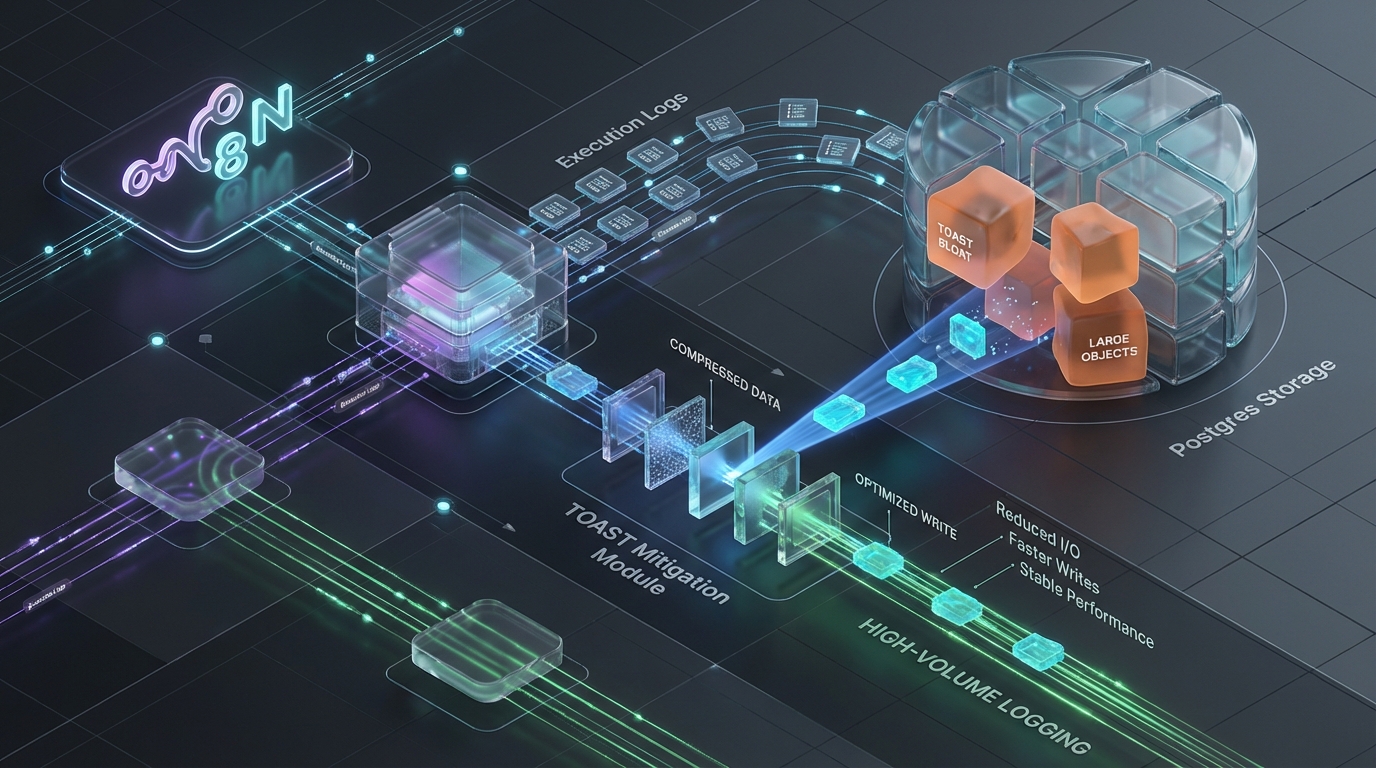

The TOAST Bloat: Mitigating Postgres Write Degradation in High-Volume N8N Execution Logging

Scaling N8N from a localized automation utility to an enterprise-grade, high-throughput orchestrator exposes a fundamental bottleneck in its default state management architecture. The issue stems from a structural misalignment between N8N’s Pass-by-Value persistence mechanics and the internal memory architecture of PostgreSQL.

When you introduce high-velocity concurrency, complex workflows with Wait nodes, heavy ETL payload transformations, and stateless worker auto-scaling, this misalignment manifests as severe database write-amplification, unpredictable I/O saturation, and sudden Node.js V8 worker death.

The root cause of this degradation cascade is isolated to a single, often overlooked mechanism: TOAST bloat within the execution_entity table.

Pass-by-Value in Stateless Orchestration

In standard N8N architectures, state is preserved via a Pass-by-Value mechanism. To allow distributed worker nodes to pick up paused or delayed executions, the orchestrator must serialize the entire execution context into the PostgreSQL backend. This payload includes IExecuteFunctions, extensive node configurations, and critically, the raw JSON payload arrays representing the data moving through the graph.

For low-throughput systems, dumping serialized JSON to a relational database is trivial. At scale, where thousands of executions per minute generate megabytes of state transition data per node, the database layer becomes a hostile environment.

Autovacuum Blindness and the TOAST Cascade

PostgreSQL operates on a hard 8KB page size limit. It is not designed to natively store massive JSON/JSONB documents inline. When the serialized output of a node exceeds the toast_tuple_target (which defaults to a mere 2KB), PostgreSQL engages TOAST (The Oversized-Attribute Storage Technique).

The mechanical flow is rigid:

- The oversized payload is compressed.

- If the compressed payload still exceeds the 2KB threshold, PostgreSQL strips it from the main table, chunks it, and stores it out-of-line in a hidden

pg_toast_table. - The main

execution_entitytable retains only an OID (a TOAST pointer) referencing the chunked data.

Under high-velocity loads, N8N’s continuous INSERT operations (spawning new executions) and DELETE operations (background pruning) cause massive dead tuple accumulation. Because the bulk of the data lives out-of-line, this accumulation happens predominantly in the hidden TOAST table.

Autovacuum Blindness and I/O Saturation

By default, PostgreSQL triggers an autovacuum on a table when dead tuples exceed 20% of the active rows (autovacuum_vacuum_scale_factor = 0.2). This is a catastrophic default for high-churn orchestration states.

If your execution_entity TOAST table scales to 50GB, a full 10GB of dead tuples must accumulate before the background vacuuming process even notices the bloat. When the autovacuum finally triggers on this massive hidden table, it induces a crippling I/O penalty. The vacuum process consumes available IOPS, forces aggressive sequential scans, and severely pollutes shared_buffers. This evicts hot data from the page cache, instantly degrading the cache hit ratios for active execution queries and slowing the entire cluster to a crawl.

Rehydration OOMs (SIGKILL 9)

The database degradation is only half the cascade; the other half destroys the application layer. When a stateless N8N worker node attempts to resume a paused execution, it queries the bloated execution_entity table.

Retrieving and reconstructing a heavily TOASTed 100MB+ JSON string directly into the Node.js V8 engine forces a sudden, contiguous memory allocation. V8 enforces strict memory limits for heap allocations and string lengths. Forcing V8 to deserialize a massive, fragmented payload from a chunked TOAST table directly bypasses generational garbage collection optimizations. The result is an immediate “JavaScript heap out of memory” exception. The host operating system issues a SIGKILL 9, terminating the worker instantly. The orchestrator loses the heartbeat, the execution state is left in limbo, and zombie queues begin to form.

The Resolution: A Three-Tiered Mitigation Strategy

Relying on standard managed PostgreSQL configurations to handle N8N’s state churn is an architectural anti-pattern. Stabilizing the cluster requires explicit intervention at the database parameter level, the application orchestration level, and the overarching architectural topology.

Tier 1: Postgres Parameter Tuning (Database Layer)

To prevent TOAST bloat, you must decouple the autovacuum thresholds of the execution_entity TOAST table from global database defaults. The objective is to force highly aggressive, high-frequency micro-vacuums that clean up dead chunks before they can trigger I/O saturation.

Execute the following DDL directly against the N8N PostgreSQL database to overwrite table-level pragmas:

Architectural Context: Dropping the TOAST scale factor to 0.01 (1%) ensures that a 50GB table will vacuum after just 500MB of dead tuple accumulation, rather than 10GB. Furthermore, overriding the autovacuum_vacuum_cost_limit to 3000 (up from the default of 200) and nullifying the cost_delay prevents the PostgreSQL vacuum worker from intentionally sleeping. It provides the worker the immediate I/O bandwidth necessary to clear the TOAST bloat quickly and exit.

Tier 2: Aggressive N8N Pruning Configurations (Application Layer)

PostgreSQL parameter tuning treats the symptom; application-layer throttling treats the disease. You must throttle data ingest at the N8N orchestrator level by tuning the pruning lifecycle.

Inject the following environment variables into your N8N container orchestration manifests (Kubernetes ConfigMaps or Docker Compose):

Architectural Context: Enforcing EXECUTIONS_DATA_SAVE_ON_SUCCESS=none is the single most effective switch for reducing database churn. In a high-throughput environment, tracking the successful state transitions of millions of nodes is wasted I/O. By discarding success data, you reduce TOAST INSERT volume by upwards of 95%, ensuring PostgreSQL only stores the state of actively running, paused, or failed executions. Decreasing the MAX_AGE and HARD_DELETE_INTERVAL ensures that when failures do occur, their bloated payloads are purged from the database rapidly.

Tier 3: Advanced Architectural Topologies

For organizations running heavy ETL pipelines, manipulating raw files, or dealing with multi-megabyte API responses, parameter tuning is not enough. You must re-architect the data flow.

1. The Claim-Check Pattern (Pass-by-Reference)

To permanently eradicate V8 OOMs and TOAST bloat on payload-heavy workflows, you must abandon N8N’s default Pass-by-Value architecture and implement a Pass-by-Reference model.

The Mechanism: Before a payload enters a Wait node or a complex sub-workflow, intercept the JSON array. Offload this payload to an external, high-performance key-value store (e.g., a Redis cluster via the Redis node) or an object store (S3/MinIO).

The Result: Generate a UUID during the offload. The N8N orchestrator now only passes a 50-byte UUID through the execution graph. When the workflow resumes on a disparate worker node, the node uses the UUID to pull the payload directly from Redis back into active memory. This bypasses the PostgreSQL execution_entity TOAST table entirely, guaranteeing that state rehydration involves minimal database I/O and zero V8 deserialization shock.

2. Binary Offloading (N8N_DEFAULT_BINARY_DATA_MODE)

Never store binary files within standard execution data. By default, N8N handles binary streams by converting files into Base64 strings. Storing Base64 strings in a relational database guarantees immediate TOASTing, extreme write-amplification, and rapid disk exhaustion.

Force binary payloads out of PostgreSQL and into an S3-compatible object store using native environment variables:

3. Externalized ELK / Prometheus Logging

Once you enforce EXECUTIONS_DATA_SAVE_ON_SUCCESS=none, you lose granular workflow auditability within the native N8N UI. To satisfy compliance, observability, and debugging requirements without taxing the primary database, you must externalize your logging.

Metrics: Utilize the N8N Prometheus integration. Export aggregate telemetry (P99 execution times, failure rates, queue depths) to Prometheus and visualize via Grafana. Do not query PostgreSQL for operational metrics.

Audit Logging: Implement a standardized Error Trigger workflow. Append an ELK or Datadog webhook node at the end of critical pipelines to push execution metadata, stack traces, and failure states directly into Elasticsearch. This isolates your observability data, allowing PostgreSQL to function strictly as a high-performance state machine rather than an archival logging database.

Before vs. After: Analyzing the Benchmark Data

Implementing this three-tiered mitigation strategy drastically alters the operational profile of the cluster. Based on the performance benchmarks of scaling N8N environments, the shift from default behavior to a defensively engineered state is stark.

| Telemetry / Metric | N8N Default Architecture | Azguards Optimized State |

|---|---|---|

| TOAST Autovacuum Trigger | 10GB+ Dead Tuples (on a 50GB table) | ~500MB Dead Tuples (1% Micro-vacuums) |

| Database I/O Saturation | Severe; shared_buffers routinely evicted |

Negligible; Autovacuum throttling bypassed |

| V8 Rehydration Heap Spikes | 100MB+ (Immediate contiguous allocation) | < 1MB (Pass-by-Reference UUID) |

| Worker SIGKILL 9 (OOMs) | Frequent during high-load Wait node resumes |

0 (Memory limits respected) |

| Binary Payload Footprint | Massive Base64 TOAST bloat | 0 bytes in Postgres (Direct S3 Offload) |

The architectural pivot transforms PostgreSQL from a struggling data lake back into what it is designed to be: an ACID-compliant, highly consistent transaction coordinator.

Partnering with Azguards Technolabs

Executing these architectural shifts requires precise tuning, particularly when migrating active production clusters without downtime. The implementation of the Claim-Check pattern, the recalibration of PostgreSQL’s background workers, and the deployment of externalized ELK logging demand deep domain expertise in both infrastructure engineering and database internals.

At Azguards Technolabs, we specialize in Performance Audits and Specialized Engineering for enterprise automation infrastructure. We do not just deploy orchestrators; we re-engineer them to withstand extreme concurrency, decoupling monolithic state constraints to ensure high availability. When out-of-the-box configurations hit their limits, we build the custom topologies required to scale safely.

Would you like to share this article?

Scaling Complex Automation Architectures?

Don't let database bottlenecks and worker crashes limit your orchestration capabilities. Our specialized engineering team can audit your n8n and PostgreSQL topologies to unlock true enterprise scalability.

Get In TouchAll Categories

Latest Post

- The TOAST Bloat: Mitigating Postgres Write Degradation in High-Volume N8N Execution Logging

- HPOS Migration Under Fire: Eliminating WooCommerce Dual-Write IOPS Bottlenecks at Scale

- The Alignment Cliff: Why Massive Python Time-Series Joins Trigger OOMs — and How to Fix Them

- The Carrier Pinning Trap: Diagnosing Virtual Thread Starvation in Spring Boot 3 Migrations

- The Event Loop Trap: Mitigating K8s Probe Failures During CPU-Bound Transforms in N8N