The Bloated Context: Mitigating Worker OOMs in Resumable N8N Pipelines

Situation: Distributed N8N architectures relying on stateless worker nodes are highly effective for scaling complex automation and ETL pipelines. When operating at enterprise scale, pipelines inevitably require asynchronous pauses—waiting for webhooks, manual human-in-the-loop approvals, or polling delays. In N8N, this is handled by the Wait node, which immediately halts the execution to free up the Node.js thread pool for other tasks.

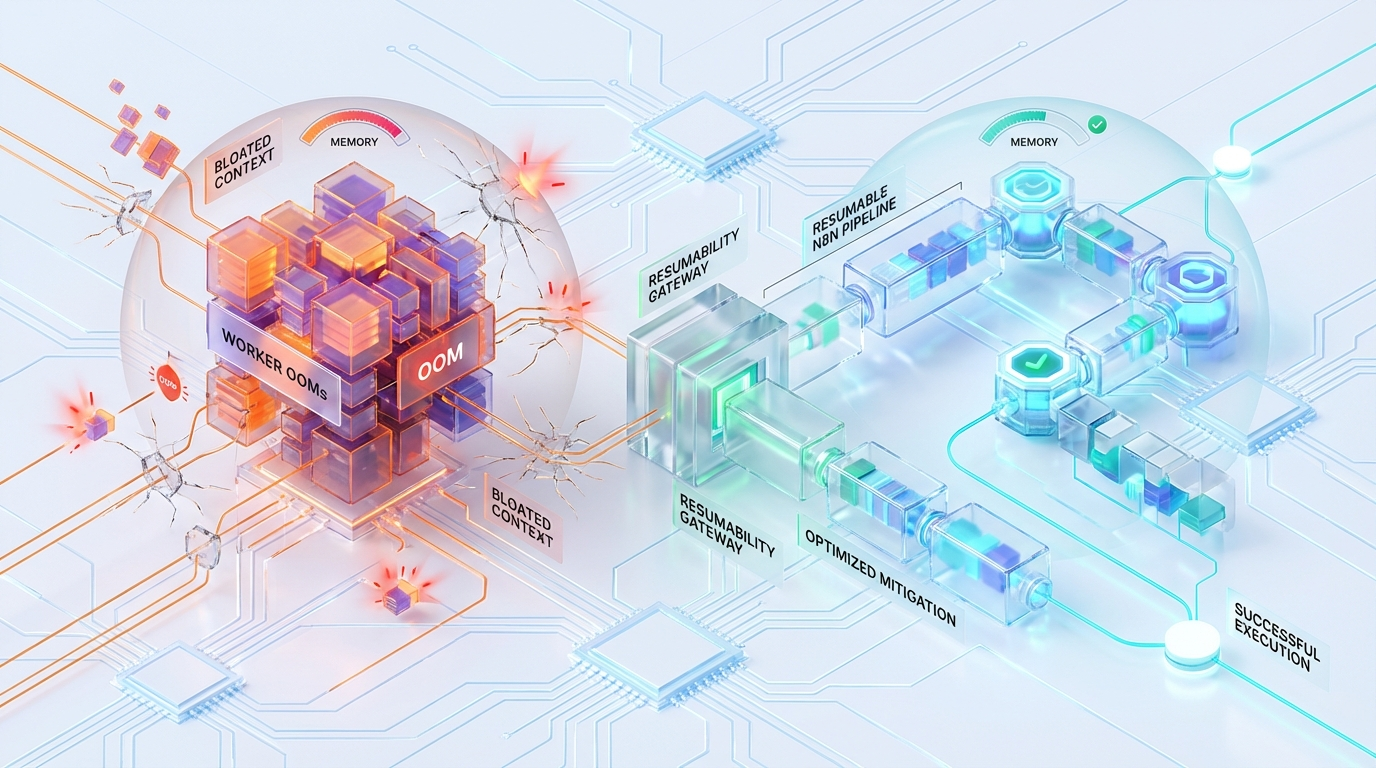

Complication: N8N’s stateless architecture demands that the entire execution state be persisted to the PostgreSQL database prior to this pause. When the wait condition resolves, a worker node must rehydrate that state. If the pipeline is processing heavy payloads, this rehydration event triggers massive, sudden spikes in V8 memory allocation. The result is cascading “JavaScript heap out of memory” errors, silent worker death (SIGKILL, signal 9), and degraded PostgreSQL I/O.

Resolution: Achieving high concurrency with resumable pipelines requires fundamentally shifting the orchestrator’s data handling from a Pass-by-Value architecture to a Pass-by-Reference architecture. By implementing the Claim Check Pattern—offloading the payload to an external key-value store like Redis or object storage like S3 before the Wait node—we can shrink the execution context payload from hundreds of megabytes down to a 50-byte UUID, entirely eliminating V8 heap explosions.

Here is the engineering breakdown of why worker OOMs occur during resumable N8N workflows, why scaling hardware is a trap, and how to architect the Claim Check Pattern.

The Root Cause: Wait Node Persistence & The Rehydration Event

N8N workers do not hold active state in RAM while a workflow is sleeping. When an execution encounters a Wait node, the workflow-runner initiates a highly I/O-intensive teardown process.

The Serialization Phase

To ensure that any available worker can resume the job later, N8N takes the entire IRun object and bundles it into a monolithic JSON payload. This object is not lightweight. It includes IExecuteFunctions, complete node configurations, the workflow graph state, and crucially, the executionData—the actual raw arrays of JSON data passed between nodes.

This monolithic state is committed to the database via a blocking write, with the serialized execution data bound as the query parameter $1.

For heavy ETL workloads, $1 can easily be a 100MB+ JSON string. Storing this in PostgreSQL creates row bloat, degrades table performance, and consumes significant network bandwidth. But the database write is only the precursor to the real failure.

The Rehydration Event

When the wait condition is met (e.g., a webhook is received or a timer expires), a worker picks up the resumption job from the Redis queue. The ensuing memory crisis occurs during the Rehydration Event:

- The worker queries the database: SELECT data FROM execution_entity WHERE id = $1.

- The Node.js worker pulls this payload into memory and executes JSON.parse(data) to reconstruct the IRun object and rebuild the execution graph.

- V8 Heap Explosion: A 100MB JSON payload stored in PostgreSQL does not equal 100MB in RAM.

To understand this explosion, you must understand V8 heap mechanics. V8 stores a string at one byte per character when every character fits in Latin-1, and at two bytes per character (UTF-16) otherwise, so a payload containing non-Latin text can double in size before a single object is created. Furthermore, when JSON.parse instantiates JavaScript objects, V8 attaches hidden classes (called Maps in V8 and Shapes in other engines) and pointer metadata to map each object’s properties in memory.

Because of this hidden overhead, a heavily nested 100MB JSON payload will routinely expand to 300MB–400MB of Old Space heap allocation during instantiation.
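The gap between serialized size and heap footprint can be observed directly. The following is a minimal sketch, not n8n code: buildPayload and measureParse are hypothetical helper names, and the item shape is an assumption chosen to loosely resemble nested node output. The measured ratio depends on payload shape, Node.js version, and GC timing, so treat it as indicative only.

```javascript
// Sketch: compare the byte size of a JSON string with the heap growth caused by parsing it.
// buildPayload and measureParse are hypothetical helpers, not part of n8n.

function buildPayload(itemCount) {
  // Assumed item shape: small nested records, similar in spirit to node output.
  const items = [];
  for (let i = 0; i < itemCount; i++) {
    items.push({
      json: { id: i, sku: `SKU-${i}`, tags: ['a', 'b', 'c'], meta: { ok: true, score: i / 7 } },
    });
  }
  return JSON.stringify(items);
}

function measureParse(jsonText) {
  const jsonBytes = Buffer.byteLength(jsonText, 'utf8');
  // global.gc only exists when Node is started with --expose-gc.
  // Without it the baseline is noisier and the delta can even be negative.
  if (typeof global.gc === 'function') global.gc();
  const before = process.memoryUsage().heapUsed;
  const parsed = JSON.parse(jsonText);
  const after = process.memoryUsage().heapUsed;
  const heapDeltaBytes = after - before;
  return {
    jsonBytes,
    heapDeltaBytes,
    ratio: heapDeltaBytes / jsonBytes,
    itemCount: parsed.length,
  };
}

const result = measureParse(buildPayload(100000));
console.log(result);
```

Running the script with node --expose-gc gives a cleaner baseline, since the collector is forced to run before the first measurement.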

The Band-Aid Fallacy: NODE_OPTIONS=--max-old-space-size

When Platform Engineers spot a JavaScript heap out of memory trace in their N8N worker logs, the standard reflex is to raise the V8 memory limit globally through NODE_OPTIONS=--max-old-space-size.

For enterprise-grade concurrency, modifying --max-old-space-size to massive limits is an architectural anti-pattern that virtually guarantees cascading system failures. Expanding the heap does not solve the memory bloat; it simply delays the failure and changes its presentation.
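Before touching the flag, it helps to confirm which limit a worker process is actually running with. The sketch below uses Node's built-in v8 module; heapLimitReport is a hypothetical helper name, and the report only describes the process it runs in.

```javascript
// Sketch: report the effective V8 heap limit and current headroom for this process.
const v8 = require('node:v8');

function heapLimitReport() {
  const stats = v8.getHeapStatistics();
  const toMb = (bytes) => Math.round(bytes / (1024 * 1024));
  return {
    heapSizeLimitMb: toMb(stats.heap_size_limit), // influenced by --max-old-space-size
    usedHeapMb: toMb(stats.used_heap_size),
    headroomMb: toMb(stats.heap_size_limit - stats.used_heap_size),
  };
}

const report = heapLimitReport();
console.log(report);
```

Logging this at worker start-up makes it visible whether a raised limit has silently become the norm across the fleet.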

1. The V8 Mark-Sweep Penalty

Node.js relies on a single-threaded event loop. Its garbage collector (GC) utilizes a Mark-Sweep algorithm for the Old Generation heap, where long-lived objects (like massive execution payloads) reside. The larger the heap boundary you configure, the longer the GC takes to traverse the object graph to mark live references and sweep dead ones.

Under heavy object churn, such as constantly serializing and deserializing 400MB JSON structures, an 8GB heap limit can produce “Stop-the-World” GC pauses of 2–5 seconds. During such a pause, the Node.js thread is entirely frozen.
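GC pauses of this kind can be observed from inside the process. The following sketch uses the PerformanceObserver from Node's perf_hooks module with the 'gc' entry type; summarizePauses is a hypothetical helper, and the 100 ms threshold and the allocation loop are arbitrary choices for illustration.

```javascript
// Sketch: record GC pause durations and summarise how many exceed a threshold.
const { PerformanceObserver } = require('node:perf_hooks');

// Hypothetical helper: durations and threshold are in milliseconds.
function summarizePauses(durationsMs, thresholdMs) {
  let longCount = 0;
  let maxMs = 0;
  for (const d of durationsMs) {
    if (d >= thresholdMs) longCount++;
    if (d > maxMs) maxMs = d;
  }
  return { count: durationsMs.length, longCount, maxMs };
}

const observed = [];
const observer = new PerformanceObserver((list) => {
  for (const entry of list.getEntries()) observed.push(entry.duration);
});
observer.observe({ entryTypes: ['gc'] });

// Allocate and discard objects so the collector has work to do.
let junk = null;
for (let round = 0; round < 50; round++) {
  junk = Array.from({ length: 50000 }, (_, i) => ({ round, i }));
}

// GC entries are delivered asynchronously, so report after a short delay.
setTimeout(() => {
  console.log(summarizePauses(observed, 100));
  observer.disconnect();
}, 50);
```

In a worker, the same summary could be emitted periodically as a metric, so that long pauses show up before heartbeats start failing.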

2. Zombie Queues (Heartbeat Failure)

Because the thread is frozen during a massive GC pause, the worker node cannot respond to Redis health checks or orchestrator heartbeats. The main N8N instance, receiving no ping back, assumes the worker node has died.

The orchestrator then re-queues the execution for another worker. Meanwhile, the original worker eventually finishes its GC cycle and continues executing. This leads to duplicate executions, severe database locking on the execution_entity table, and “Zombie Processes” that silently consume resources while corrupting data consistency.

3. The Concurrency Multiplier

Consider a standard deployment where N8N_WORKER_CONCURRENCY=10.

If 10 concurrent webhook events trigger the rehydration of 400MB execution states simultaneously, the single Node.js worker process instantly requests 4GB of RAM. If this spike pushes the Docker container or Kubernetes pod past its configured cgroup memory limits, the Linux Kernel OOM Killer immediately intervenes.

The kernel issues a SIGKILL (signal 9), which terminates the worker instantly. There is no stack trace. There is no graceful shutdown. The orchestrator simply loses the worker, and any in-flight jobs that were not at a persistence boundary are destroyed.

Architectural Solution: Off-Graph Storage (The Claim Check Pattern)

To achieve high concurrency without volatile heap spikes, you must fundamentally decouple the payload from the control plane. This is achieved by shifting from a Pass-by-Value architecture (where arrays of heavy JSON objects travel through the execution wire and into PostgreSQL) to a Pass-by-Reference architecture.

By utilizing the Claim Check Pattern, large execution payloads are systematically offloaded to an external key-value store (like Redis) or an object storage system (like S3/MinIO) immediately prior to the Wait node.

The orchestrator only maintains a lightweight pointer (the “claim check”), allowing the workflow state to be paused and resumed without dragging megabytes of data through the database and V8 engine.

Implementation Logic (Pseudocode Pattern)

Instead of passing a 100,000-item array directly into a Wait node, we intercept the data flow using a Code node. This node offloads the massive data array to an external store and passes forward a lightweight reference pointer.

Pre-Wait Node (Offload Phase):
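A minimal sketch of the offload step, under stated assumptions: InMemoryStore stands in for Redis or S3 so the example runs on its own, offloadPayload and the claim: key prefix are hypothetical names, and the return value is shaped like an n8n item list carrying only the pointer. Inside a real Code node the items would come from the node's input and the store would be a real client.

```javascript
// Sketch of the offload phase of the Claim Check Pattern.
const { randomUUID } = require('node:crypto');

// Assumption: stand-in for Redis/S3. Any store with async set/get would do.
class InMemoryStore {
  constructor() { this.map = new Map(); }
  async set(key, value) { this.map.set(key, value); }
  async get(key) { return this.map.has(key) ? this.map.get(key) : null; }
}

// Hypothetical helper: write the heavy items to the store, return only a pointer.
async function offloadPayload(store, items) {
  const claimCheckId = randomUUID();
  await store.set(`claim:${claimCheckId}`, JSON.stringify(items));
  // One lightweight item replaces the whole array on the execution wire.
  return [{ json: { claimCheckId, itemCount: items.length } }];
}

// Usage: 100,000 items go to the store; only the pointer moves forward.
const store = new InMemoryStore();
const items = Array.from({ length: 100000 }, (_, i) => ({ json: { id: i } }));
const offloaded = offloadPayload(store, items);
offloaded.then((out) => {
  console.log(out[0].json.itemCount, Buffer.byteLength(JSON.stringify(out)));
});
```

With a real store, setting an expiry on the key that comfortably exceeds the longest expected wait keeps abandoned payloads from accumulating.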

When the execution proceeds to the Wait node, the workflow-runner only serializes and stores a ~50-byte UUID reference to PostgreSQL. The database query takes less than a millisecond, and the row size is negligible.

Post-Wait Node (Rehydration Phase):
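A matching sketch of the redeem step, with the same caveats: InMemoryStore is a stand-in for Redis or S3, and redeemClaimCheck, the claim: key prefix, and the demo-id value are hypothetical. The payload is fetched only after the worker has resumed, and the key is deleted once it has been read.

```javascript
// Sketch of the rehydration phase of the Claim Check Pattern.

// Assumption: stand-in for Redis/S3. Any store with async set/get/del would do.
class InMemoryStore {
  constructor() { this.map = new Map(); }
  async set(key, value) { this.map.set(key, value); }
  async get(key) { return this.map.has(key) ? this.map.get(key) : null; }
  async del(key) { this.map.delete(key); }
}

// Hypothetical helper: exchange the pointer for the original items.
async function redeemClaimCheck(store, claimCheckId) {
  const key = `claim:${claimCheckId}`;
  const raw = await store.get(key);
  if (raw === null) {
    // The payload expired or was already redeemed: fail loudly, not with empty data.
    throw new Error(`Claim check ${claimCheckId} not found`);
  }
  await store.del(key); // avoid leaving orphaned payloads in the store
  return JSON.parse(raw);
}

// Usage: seed the store as the offload step would have, then redeem after the wait.
const store = new InMemoryStore();
const redeemed = store
  .set('claim:demo-id', JSON.stringify([{ json: { id: 1 } }, { json: { id: 2 } }]))
  .then(() => redeemClaimCheck(store, 'demo-id'));
redeemed.then((items) => console.log(items.length));
```

Throwing on a missing key is a deliberate choice here: a resumed workflow that continues with no data is harder to diagnose than one that fails at the redeem step.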

Theoretical Engineering Model: Pass-by-Value vs. Pass-by-Reference

To quantify the architectural impact, consider a production scenario involving 10 Concurrent Resumptions of a workflow processing 50MB of API data per run.

| Metric | Pass-by-Value (Default N8N) | Pass-by-Reference (Claim Check Pattern) |
|---|---|---|
| Data Serialized to Postgres | 50 MB per execution | ~100 Bytes per execution |
| DB I/O during Rehydration | 500 MB Read burst (degrades DB) | < 1 KB Read (unnoticeable) |
| V8 Heap Spikes (Rehydration) | ~1.5 GB to 2.5 GB (causes OOM or GC Thrashing) | < 5 MB (instant rehydration) |
| Worker CPU Utilization | High (Deserializing deep object trees synchronously during init) | Low (JSON stream handled predictably post-wake) |
| Risk of Zombie Executions | Critical (GC Pauses > Redis Timeout) | Near Zero |

By removing the 500MB database read burst, we eliminate I/O blocking on the PostgreSQL instance, freeing it to handle operational queries. Furthermore, by ensuring the worker’s V8 heap only spikes by a few megabytes during initialization, we maintain a predictable, flat memory footprint, completely avoiding the Linux OOM Killer.

Critical Limits & Configuration Overrides

Code-level patterns must be reinforced by strict infrastructure guardrails. To harden the N8N worker node deployment against runaway memory allocations, apply the following system limits at the environment level:

1. Execution Data Retention & Pruning

A bloated execution_entity table degrades PostgreSQL query planning and slows down the Rehydration Event’s SELECT queries. Prevent this table from ballooning by enabling execution data pruning and capping how long execution records are retained.

Engineering Note: If compliance requires audit logs of successful executions, stream those logs to Datadog, Elasticsearch, or an external data warehouse. Do not use the orchestrator’s operational database as a permanent system of record.

2. Binary Data Offloading

Never store binary files within the standard execution data. By default, binary data stored in PostgreSQL is converted to Base64 strings. Because Base64 uses 8 bits to represent 6 bits of underlying data, it inherently adds a 33% bloat to memory size—compounding the V8 string allocation crisis.
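The 33% figure follows from Base64 emitting 4 output characters for every 3 input bytes. A short sketch that checks it on a 3 MiB buffer (the size is an arbitrary choice, picked as a multiple of 3 so that no padding characters are added):

```javascript
// Sketch: measure Base64 size overhead on a raw binary buffer.
const raw = Buffer.alloc(3 * 1024 * 1024);  // 3,145,728 zero bytes
const encoded = raw.toString('base64');     // 4 characters per 3 input bytes
const overhead = encoded.length / raw.length;

console.log(raw.length, encoded.length, overhead.toFixed(3));
// prints: 3145728 4194304 1.333
```

The encoded form is also a JavaScript string rather than a Buffer, so it lives on the V8 heap and counts against the old-space limit.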

Force all binary storage to the filesystem or an external S3 provider.

3. Worker Concurrency & Memory Limits

You must tune concurrency tightly against the available physical RAM. Over-provisioning N8N_WORKER_CONCURRENCY under the assumption that Node.js will “figure it out” guarantees failure.

Use this formula to calculate your limits: (Max Expected Heap per Job * Concurrency) + Node Core Overhead < Container RAM Limit
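The formula can be turned around to give the highest concurrency that still fits. In this sketch, maxSafeConcurrency is a hypothetical helper, and the 300 MB figure for Node core overhead is an assumption for illustration; measure your own baseline before relying on it.

```javascript
// Sketch: largest concurrency c such that (heapPerJob * c) + overhead < containerLimit.
// maxSafeConcurrency is a hypothetical helper; all values are in megabytes.
function maxSafeConcurrency({ containerLimitMb, heapPerJobMb, nodeOverheadMb }) {
  if (heapPerJobMb <= 0) throw new RangeError('heapPerJobMb must be positive');
  const availableMb = containerLimitMb - nodeOverheadMb;
  if (availableMb <= 0) return 0;
  // Strict inequality: c < available / heapPerJob, so step down from the ceiling.
  return Math.max(Math.ceil(availableMb / heapPerJobMb) - 1, 0);
}

// Pass-by-value: 400 MB rehydrated per job in a 4 GB container.
console.log(maxSafeConcurrency({ containerLimitMb: 4096, heapPerJobMb: 400, nodeOverheadMb: 300 })); // 9

// Pass-by-reference: roughly 5 MB per job in the same container.
console.log(maxSafeConcurrency({ containerLimitMb: 4096, heapPerJobMb: 5, nodeOverheadMb: 300 })); // 759
```

With 400 MB per job, a concurrency of 10 would need 4,300 MB and exceed the 4,096 MB limit, which matches the OOM scenario described for the Concurrency Multiplier.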

Keep the V8 heap cap restrictive to ensure GC cycles are fast and frequent, rather than delayed and monolithic.

The Azguards Approach to Automation Architecture

At Azguards Technolabs, we view orchestration tools not as magic black boxes, but as specific combinations of execution engines, I/O streams, and memory managers. Tools like N8N are incredibly powerful, but scaling them past the prototype phase requires treating them like distributed microservices.

We partner with enterprise teams to provide rigorous Performance Audits and Specialized Engineering. Whether you are experiencing silent worker deaths, database query bottlenecks, or complex state management failures, Azguards implements the architectural patterns—like the Claim Check Pattern, custom queuing layers, and aggressive V8 memory profiling—necessary to stabilize your infrastructure.

We don’t just increase server sizes; we rewrite the data flow.

Conclusion

Out of Memory errors in resumable N8N pipelines are rarely the result of a memory leak; they are the mechanical reality of pushing large datasets through a relational database and into a V8 JavaScript heap via synchronous serialization.

Relying on --max-old-space-size is a fragile band-aid that leads to Stop-the-World GC pauses, missed heartbeats, and Zombie Queues. By explicitly offloading payload state to Redis or S3 using the Claim Check Pattern, Platform Engineers can reduce PostgreSQL I/O to near zero, maintain flat V8 memory profiles, and scale worker node concurrency with absolute predictability.

Is your automation infrastructure buckling under enterprise workloads? Stop fighting the OOM Killer. Contact Azguards Technolabs today for a comprehensive architectural review and specialized implementation of resilient, high-throughput orchestration patterns.
