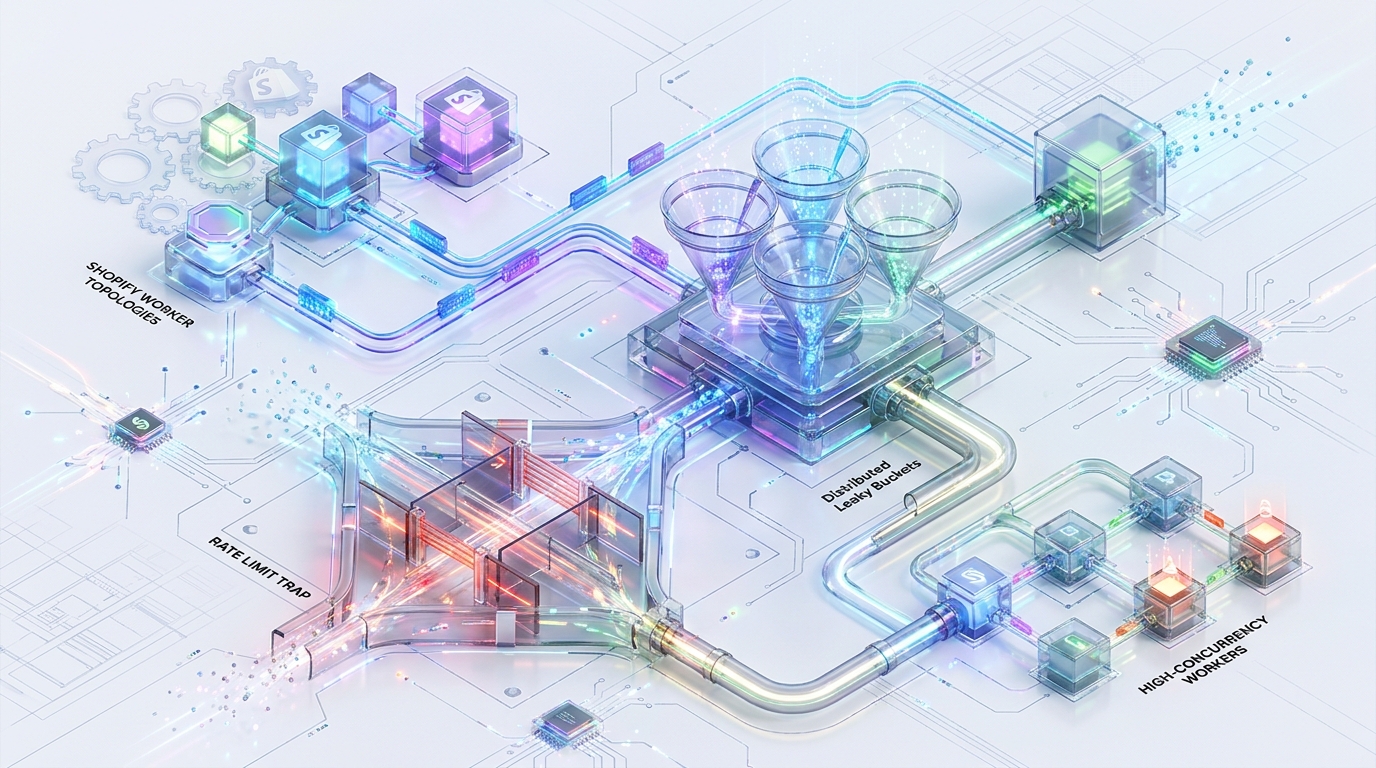

Mastering Distributed Rate Limiting: Eliminating the 429 Thundering Herd in Shopify K8s Topologies

In high-concurrency Kubernetes (K8s) environments, scaling Shopify integration architectures is a precision balancing act. Local-memory rate limiters fragment state across ephemeral pods, inevitably leading to catastrophic 429 thundering herds and OOM evictions. To achieve resilient, elastic scale, engineering teams must transition from fragmented telemetry to a centralized, atomic rate-limiting authority that remains clock-drift immune and computationally efficient.

You are operating a high-concurrency worker topology consuming from Kafka or BullMQ and interacting with the 2026 Shopify Engine (both REST and GraphQL). To maintain throughput, your workers dynamically autoscale based on queue depth.

Implementing naive rate limiting with in-process primitives (such as the Node.js libraries bottleneck or p-limit) fragments the rate-limit state across K8s replicas. If 10 autoscaled pods concurrently read an X-Shopify-Shop-Api-Call-Limit: 30/40 header, all 10 independently assume they have 10 tokens remaining. When they fire simultaneously, they unleash an aggregate burst of 100 requests against an actual capacity of 10. The result is a cascading 429 storm, worker exhaustion, and catastrophic K8s out-of-memory (OOM) evictions.

K8s topologies require a centralized, clock-drift-immune, atomic leaky bucket via Redis Lua scripts. By coupling pessimistic token reservations with high-water mark telemetry reconciliation and OOM-safe backoff queues, engineering teams can achieve maximum API throughput without triggering systemic application failure.

1. Architectural Failure States in Auto-Scaled K8s Clusters

Understanding the mechanics of failure is a prerequisite for designing resilient topologies. When distributed state is ignored, failure compounds exponentially.

The 429 Thundering Herd

The core issue in local-memory rate limiters is telemetry fragmentation. Local stores are entirely unaware of concurrent peer operations. In an active Shopify integration, telemetry is delivered synchronously via HTTP response headers or payload extensions. By the time a pod receives a capacity update, that data is already stale.

Consider a scenario where 10 autoscaled K8s pods are pulling jobs from a high-velocity queue. They each execute a request and receive the X-Shopify-Shop-Api-Call-Limit: 30/40 header. Each pod’s isolated state manager calculates 10 available tokens. The K8s cluster then dispatches 100 simultaneous requests. Shopify’s gateway absorbs the first 10, then aggressively throttles the remaining 90 with 429 Too Many Requests. This triggers the thundering herd problem, where isolated components continually retry, permanently saturating the API limits and creating a denial-of-service condition against your own infrastructure.
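The arithmetic above can be reproduced with a small model. This is an illustrative TypeScript sketch, not a load test. The function name simulateFragmentedBurst is hypothetical, and for simplicity the model ignores the bucket's leak during the burst.

```typescript
// Illustrative model only: N pods all trust the same stale header snapshot.
// The bucket's leak during the burst is ignored for simplicity.
interface BurstOutcome {
  sent: number; // requests the whole fleet fires
  accepted: number; // requests the single shared bucket can absorb
  throttled: number; // requests answered with 429
}

function simulateFragmentedBurst(pods: number, used: number, capacity: number): BurstOutcome {
  // Each pod derives the same "remaining" figure from the header...
  const perPodRemaining = Math.max(0, capacity - used);
  const sent = pods * perPodRemaining;
  // ...but the real bucket only has that headroom once.
  const accepted = Math.min(sent, perPodRemaining);
  return { sent, accepted, throttled: sent - accepted };
}

const outcome = simulateFragmentedBurst(10, 30, 40);
console.log(outcome); // { sent: 100, accepted: 10, throttled: 90 }
```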

OOM Cascades and the Event Loop Trap

When workers encounter these prolonged 429 blocks, local delay queues inevitably fail under load. Relying on unbounded in-memory retry buffers or setTimeout-based retries traps pending jobs inside the application's event loop (in Node.js) or goroutine scheduler (in Go).

As the inflow of queue messages remains constant while outbound API requests stall on 429s, the application continuously inflates heap memory. The garbage collector cannot free these objects because the pending timers hold live references to the job payloads. Eventually, the container's memory usage breaches its limits.memory setting, the kernel's cgroup OOM killer terminates the process with SIGKILL, and the kubelet reports the container as OOMKilled. The in-memory workload is lost, the container restarts (while the horizontal pod autoscaler (HPA) may add even more replicas as queue depth grows), and every fresh process immediately falls into the exact same trap.
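A back-of-the-envelope estimate shows how fast this trap fills the heap. Assuming every stalled message stays referenced by a pending timer, retained memory grows roughly linearly with the inflow rate, the time the API stays blocked, and the average payload size. The figures below are illustrative assumptions, not measurements.

$$
M_{\text{retained}}(t) \approx \lambda_{\text{in}} \cdot t_{\text{blocked}} \cdot \bar{s}
$$

$$
200\ \tfrac{\text{msg}}{\text{s}} \times 60\ \text{s} \times 50\ \text{KB} = 600{,}000\ \text{KB} \approx 600\ \text{MB}
$$

At that rate, a container with a 512 MiB limits.memory would be killed before a one-minute 429 block ends, even ignoring its baseline heap usage.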

2. Hard Limits & Capacity Thresholds (2026 Shopify Engine)

Designing the enforcement mechanism requires a precise mapping of Shopify’s 2026 capacity boundaries. Shopify employs two distinct algorithmic paradigms depending on the API surface.

REST Admin API (The Leaky Bucket Model)

The REST API operates on a standard Leaky Bucket algorithm. Telemetry is sourced purely from the HTTP header X-Shopify-Shop-Api-Call-Limit, whose value has the form used/capacity (for example, 32/40 means 32 of 40 bucket slots are in use).

Standard Plan: Capacity limit 40, Leak rate 2 req/sec.

Shopify Plus: Capacity limit 400, Leak rate 20 req/sec.
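A minimal sketch of reading this header, assuming the used/capacity format described above. parseCallLimit, msUntilAvailable, and REST_LEAK_RATE are illustrative names, not a Shopify SDK API; malformed values return null rather than being guessed.

```typescript
interface CallLimit {
  used: number;
  capacity: number;
  remaining: number;
}

// Parses a value such as "32/40" from X-Shopify-Shop-Api-Call-Limit.
// Returns null for a missing or malformed header instead of guessing.
function parseCallLimit(header: string | null | undefined): CallLimit | null {
  if (!header) return null;
  const match = /^\s*(\d+)\s*\/\s*(\d+)\s*$/.exec(header);
  if (!match) return null;
  const used = Number(match[1]);
  const capacity = Number(match[2]);
  if (capacity <= 0) return null;
  return { used, capacity, remaining: Math.max(0, capacity - used) };
}

// Leak rates from the plan tiers above, in requests per second.
const REST_LEAK_RATE: Record<"standard" | "plus", number> = { standard: 2, plus: 20 };

// Milliseconds until `needed` slots are free, given a parsed header.
function msUntilAvailable(limit: CallLimit, needed: number, leakPerSec: number): number {
  const deficit = needed - limit.remaining;
  return deficit <= 0 ? 0 : Math.ceil((deficit / leakPerSec) * 1000);
}
```

Note that this only describes one pod's view at one instant; it is exactly the stale, per-pod telemetry that the centralized bucket later in this article is meant to replace.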

GraphQL Admin API (Calculated Query Cost)

GraphQL abandons the 1:1 request-to-token ratio. Instead, it enforces a Calculated Query Cost model based on the complexity and graph depth of the requested data.

Hard Ceiling: The maximum cost for a single query is 1000 points. Complex queries exceeding this limit will hard-fail with a MAX_COST_EXCEEDED extension, regardless of the available bucket size. Broad pagination deep into arrays (e.g., >25,000 items) is severely restricted.

Restore Rates: Standard (100 pts/sec), Advanced (200 pts/sec), Plus (1000 pts/sec), Enterprise (2000 pts/sec).

Telemetry: Sourced dynamically from the response payload under extensions.cost, which contains requestedQueryCost, actualQueryCost, and a throttleStatus object holding maximumAvailable, currentlyAvailable, and restoreRate.
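The sketch below turns throttleStatus into a wait time before dispatching a query of known cost. It relies only on the field names listed above; msUntilAffordable is a hypothetical helper, and treating any cost above 1000 points (or above maximumAvailable) as never affordable mirrors the hard ceiling described in this section.

```typescript
// Subset of extensions.cost.throttleStatus used here.
interface ThrottleStatus {
  maximumAvailable: number;
  currentlyAvailable: number;
  restoreRate: number; // points restored per second
}

const MAX_SINGLE_QUERY_COST = 1000; // per-query ceiling described above

// Milliseconds to wait until a query costing `cost` points fits the bucket,
// or Infinity if it can never run (it would hard-fail with MAX_COST_EXCEEDED).
function msUntilAffordable(cost: number, status: ThrottleStatus): number {
  if (cost > MAX_SINGLE_QUERY_COST || cost > status.maximumAvailable) return Infinity;
  const deficit = cost - status.currentlyAvailable;
  if (deficit <= 0) return 0;
  return Math.ceil((deficit / status.restoreRate) * 1000);
}
```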

3. Distributed Leaky Bucket: Atomic Token Reservation via Redis Lua

To enforce strict serialization without relying on database locking (which introduces unacceptable latency), K8s workers must interact with a centralized Redis cluster using a pessimistic token reservation script.

Overcoming K8s NTP Drift

A common flaw in distributed rate limiters is relying on the client's system clock to calculate elapsed time and leak rate. In K8s clusters, worker node NTP (Network Time Protocol) drift is a given. If Pod A's clock is 500ms ahead of Pod B's, TTL calculations and leak math will permanently desynchronize, causing intermittent token overallocation. The solution is to force the Lua script to call redis.call('TIME'), making the Redis primary that owns the bucket key the single authoritative clock. TIME returns that server's wall-clock time, so it is not strictly monotonic, but every pod now measures elapsed time against the same clock.
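The script below is one possible shape for the pessimistic reservation, embedded as a TypeScript string the way a Node.js worker might ship it. It is a sketch under stated assumptions: the bucket lives in a Redis hash with hypothetical fields level and ts, ARGV carries capacity, leak rate per second, and cost, and Redis 5 or later is assumed (effects replication is the default there, so calling TIME before a write is permitted). reserveModel is a pure TypeScript mirror of the same arithmetic for unit tests without a Redis server.

```typescript
// Hypothetical reservation script. KEYS[1] = per-store bucket hash.
// ARGV = capacity, leak rate (units/sec), cost. Returns {allowed, retryAfterMs}.
const RESERVE_LUA = `
local capacity = tonumber(ARGV[1])
local leak = tonumber(ARGV[2])
local cost = tonumber(ARGV[3])
-- Use the Redis server clock, never the caller's.
local t = redis.call('TIME')
local now = tonumber(t[1]) * 1000 + math.floor(tonumber(t[2]) / 1000)
local s = redis.call('HMGET', KEYS[1], 'level', 'ts')
local level = tonumber(s[1]) or 0
local ts = tonumber(s[2]) or now
level = math.max(0, level - math.max(0, now - ts) * leak / 1000)
local allowed = 0
local retry = 0
if level + cost <= capacity then
  level = level + cost
  allowed = 1
else
  retry = math.ceil((level + cost - capacity) / leak * 1000)
end
redis.call('HSET', KEYS[1], 'level', tostring(level), 'ts', tostring(now))
redis.call('PEXPIRE', KEYS[1], math.ceil(capacity / leak * 1000) + 1000)
return {allowed, retry}
`;

interface BucketState {
  level: number; // units currently in the bucket
  ts: number; // server-clock timestamp (ms) of the last update
}
interface Decision {
  allowed: boolean;
  retryAfterMs: number;
  state: BucketState;
}

// Pure mirror of RESERVE_LUA: leak since the last update, then admit or reject.
function reserveModel(prev: BucketState | null, nowMs: number, cost: number,
  capacity: number, leakPerSec: number): Decision {
  const ts = prev?.ts ?? nowMs;
  const leaked = (Math.max(0, nowMs - ts) * leakPerSec) / 1000;
  let level = Math.max(0, (prev?.level ?? 0) - leaked);
  if (level + cost <= capacity) {
    level += cost;
    return { allowed: true, retryAfterMs: 0, state: { level, ts: nowMs } };
  }
  const retryAfterMs = Math.ceil(((level + cost - capacity) / leakPerSec) * 1000);
  return { allowed: false, retryAfterMs, state: { level, ts: nowMs } };
}
```

With ioredis, a call could look like redis.eval(RESERVE_LUA, 1, "shopify:bucket:example-store", 40, 2, 1), where the key name is hypothetical; the reply is a two-element array of the allowed flag and the retry delay in milliseconds. A cost larger than capacity is never admitted, so callers should reject such requests before reserving.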

Execution Atomicity and O(1) Performance

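Redis executes Lua scripts atomically; no other script or Redis command will run while a script is executing. That is also why the script must be strictly O(1): a slow script blocks the whole server. Sending the full Lua source with every EVAL on every worker cycle wastes network bandwidth, and Redis must hash the body each time to find its cached compiled version. Instead, load the script into the Redis script cache once via SCRIPT LOAD, then have workers call EVALSHA, passing only the script's SHA1 digest and arguments. Because the script cache is not persisted, workers should fall back to EVAL when Redis replies NOSCRIPT (for example, after a restart or SCRIPT FLUSH).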
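A minimal sketch of the EVALSHA-first pattern with a NOSCRIPT fallback. ScriptClient is a hypothetical structural interface whose argument order (script or digest, key count, then keys and arguments) follows the Redis commands; a real client such as ioredis may need a thin adapter, and its defineCommand helper is documented to perform this fallback automatically.

```typescript
import { createHash } from "node:crypto";

// Hypothetical minimal view of a Redis client's scripting calls.
interface ScriptClient {
  evalsha(sha: string, numKeys: number, ...args: (string | number)[]): Promise<unknown>;
  eval(script: string, numKeys: number, ...args: (string | number)[]): Promise<unknown>;
}

// Redis identifies cached scripts by the SHA1 hex digest of their source.
function scriptSha(source: string): string {
  return createHash("sha1").update(source).digest("hex");
}

// Prefer EVALSHA (digest + arguments only). If the cache was flushed, fall back
// to EVAL once, which also re-caches the script for subsequent EVALSHA calls.
async function runScript(client: ScriptClient, source: string, sha: string,
  keys: string[], args: (string | number)[]): Promise<unknown> {
  try {
    return await client.evalsha(sha, keys.length, ...keys, ...args);
  } catch (err) {
    if (err instanceof Error && err.message.startsWith("NOSCRIPT")) {
      return client.eval(source, keys.length, ...keys, ...args);
    }
    throw err; // any other error is a real failure
  }
}
```

Computing the digest locally, rather than relying only on the value SCRIPT LOAD returns, lets every pod agree on the SHA without a startup round-trip.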

4. Reconciling Distributed State: Header Parsing & Network Jitter

While Shopify’s response payload is the ultimate ground truth, network latency and jitter ensure that any received telemetry is inherently state-delayed. Redis knows what we have requested, but it does not inherently know what Shopify has processed.

The In-Flight Race Condition

If a worker processes a response, reads X-Shopify-Shop-Api-Call-Limit: 15/40, and naively overwrites the Redis level with 15, it corrupts the shared state. Why? Because concurrent K8s workers may have already reserved tokens for requests that are authorized but still in flight, and Shopify's header does not yet reflect them. Overwriting the global level erases those reservations, guaranteeing a 429 collision on the very next cycle.

The High-Water Mark Reconciliation Pattern

To solve this, deploy a secondary Reconcile script that overrides the Redis level only if Shopify's reported usage is higher than the current (leak-adjusted) Redis level. This acts as a high-water mark. It absorbs “phantom” requests (API calls made outside your worker fleet that draw on the same quota) without masking the in-flight reservations of your own K8s pods. Because Shopify scopes these buckets per app per store, phantom traffic typically comes from other services, scripts, or legacy jobs using the same app credentials.
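The Reconcile step can be sketched as a second Lua script. The hash fields (level, ts), the argument order (leak rate, usage parsed from the header, key TTL in milliseconds), and the helper names are hypothetical. The script re-applies the leak using the Redis clock before comparing, and reconcileLevel states the high-water-mark rule in isolation for testing.

```typescript
// Hypothetical reconcile script. KEYS[1] = per-store bucket hash.
// ARGV = leak rate (units/sec), usage reported by Shopify, key TTL (ms).
const RECONCILE_LUA = `
local leak = tonumber(ARGV[1])
local reported = tonumber(ARGV[2])
local ttl = tonumber(ARGV[3])
local t = redis.call('TIME')
local now = tonumber(t[1]) * 1000 + math.floor(tonumber(t[2]) / 1000)
local s = redis.call('HMGET', KEYS[1], 'level', 'ts')
local level = tonumber(s[1]) or 0
local ts = tonumber(s[2]) or now
level = math.max(0, level - math.max(0, now - ts) * leak / 1000)
-- High-water mark: reported usage may raise the level, never lower it.
if reported > level then level = reported end
redis.call('HSET', KEYS[1], 'level', tostring(level), 'ts', tostring(now))
redis.call('PEXPIRE', KEYS[1], ttl)
return tostring(level)
`;

// Pure statement of the rule: keep whichever view of usage is higher.
function reconcileLevel(leakedRedisLevel: number, reportedUsed: number): number {
  return Math.max(leakedRedisLevel, reportedUsed);
}
```

The level is returned as a string because Redis truncates Lua numbers to integers in replies, which would hide fractional leak.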

The GraphQL Cost Refund Pattern

Because a GraphQL query's actual cost isn't known until the query executes, you must pre-flight each request by reserving its requestedQueryCost (the cost calculated before execution, i.e. the worst case) using the primary reservation script. Once the response arrives, parse extensions.cost.actualQueryCost and trigger a tertiary Refund script that atomically subtracts the delta (the unspent points) from the global Redis level. Failing to refund the delta results in artificial self-throttling, severely degrading consumer throughput.
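A sketch of the refund step, assuming a hypothetical level/ts hash layout and an argument order of leak rate, then refund delta. refundDelta encodes an assumption to verify against your own telemetry: an explicit null actualQueryCost is treated as "the query never executed" and refunds the full reservation, while a missing cost extension refunds nothing, erring toward over-counting rather than under-counting usage.

```typescript
// Hypothetical refund script. KEYS[1] = per-store bucket hash.
// ARGV = leak rate (units/sec), refund delta. Returns the new level.
const REFUND_LUA = `
local leak = tonumber(ARGV[1])
local delta = tonumber(ARGV[2])
local t = redis.call('TIME')
local now = tonumber(t[1]) * 1000 + math.floor(tonumber(t[2]) / 1000)
local s = redis.call('HMGET', KEYS[1], 'level', 'ts')
if not s[1] then return '0' end -- key expired: nothing left to refund
local ts = tonumber(s[2]) or now
local level = tonumber(s[1]) - math.max(0, now - ts) * leak / 1000 - delta
level = math.max(0, level)
redis.call('HSET', KEYS[1], 'level', tostring(level), 'ts', tostring(now))
return tostring(level)
`;

interface CostExtension {
  requestedQueryCost: number;
  actualQueryCost?: number | null;
}

// Points to hand back after a response, given the amount reserved up front.
function refundDelta(reservedCost: number, cost: CostExtension | undefined): number {
  if (cost === undefined) return 0; // unknown outcome: keep the reservation
  if (cost.actualQueryCost === null) return reservedCost; // assumed not executed
  if (cost.actualQueryCost === undefined) return 0;
  return Math.max(0, reservedCost - cost.actualQueryCost);
}
```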

5. Worker Topology & OOM-Safe Backoff Queues

When the atomic reservation script returns Allowed = 0, the worker must yield immediately. Blocking the thread or spinning in a while loop burns CPU and stalls every other job the worker owns. Backoff must instead be delegated to the infrastructure layer: the message broker or job queue.

Message Broker Pausing (Kafka)

If your architecture relies on sequentially processing messages from an event log like Kafka, the K8s pod must never busy-wait. Doing so blocks the consumer's polling loop, resulting in a max.poll.interval.ms timeout violation. The consumer is then considered failed and leaves the group, triggering a consumer group rebalance that can stall processing across the group while partitions are reassigned.

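Instead, immediately pause only the affected partition (consumer.pause() in the Java client, or the pause helper passed to KafkaJS eachMessage handlers) and schedule a resume after the RetryAfter duration computed by the Redis Lua script, stretched by 10-15% random jitter to prevent thundering wake-ups. In the Java client, keep calling poll() while paused: it returns no records for paused partitions, so the consumer stays within max.poll.interval.ms and its heartbeat remains active while consumption is gracefully suspended.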
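A sketch of the pause-and-resume step with jitter. PauseFn models the pause helper that KafkaJS passes to eachMessage handlers (calling it pauses the current topic-partition and returns a resume function); jitteredDelayMs and pauseForRateLimit are hypothetical names. The caller is expected to rethrow after pausing so the message's offset is not committed and it is redelivered after resume.

```typescript
// Structural stand-in for KafkaJS's eachMessage `pause` helper.
type PauseFn = () => () => void;

// Stretch the Redis-computed delay by 10% up to 15% random jitter so paused
// partitions across pods do not all resume on the same tick. Whole percentages
// (rather than a factor like 1.1) keep round inputs exact in floating point.
function jitteredDelayMs(retryAfterMs: number, random: () => number = Math.random): number {
  const jitterPct = 10 + 5 * random();
  return retryAfterMs + Math.ceil((retryAfterMs * jitterPct) / 100);
}

// Pause only the affected partition and schedule its resume.
function pauseForRateLimit(pause: PauseFn, retryAfterMs: number,
  random: () => number = Math.random): number {
  const delay = jitteredDelayMs(retryAfterMs, random);
  const resume = pause();
  setTimeout(resume, delay);
  return delay;
}
```

Inside a KafkaJS eachMessage handler, a rejected reservation would call pauseForRateLimit(pause, retryAfterMs) and then throw, so no memory is held for the message beyond the single resume timer.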

Distributed Queue Re-Routing (BullMQ / Celery)

In discrete worker queue architectures, you must intercept the Lua script rejection before initiating the HTTP request.

Actionable Setup: In Node.js via BullMQ, throw a custom RateLimitError as soon as the reservation is rejected, and catch it before any HTTP request is made. Reschedule the work with BullMQ's native delayed jobs, for example by re-adding it via Queue.add with the delay option, injecting the Redis-supplied RetryAfter value (in milliseconds) as the delay. The job then waits in Redis rather than in worker memory, and becomes eligible again once capacity has leaked back.
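A sketch of the intercept-then-reschedule flow. DelayingQueue mirrors the shape of BullMQ's Queue.add(name, data, opts), whose delay option is in milliseconds; RateLimitError, processJob, and the injected reserve and callShopify functions are hypothetical. Re-adding creates a new job. BullMQ also documents ways to delay the current job in place (for example job.moveToDelayed followed by throwing DelayedError), which keep the original job ID; check the docs for your version.

```typescript
interface ReservationResult {
  allowed: boolean;
  retryAfterMs: number;
}

// Structural view of the one Queue method used here.
interface DelayingQueue {
  add(name: string, data: unknown, opts: { delay: number }): Promise<unknown>;
}

interface ShopifyJob {
  name: string;
  data: { shop: string; cost: number };
}

// Hypothetical error carrying the Redis-supplied retry delay.
class RateLimitError extends Error {
  retryAfterMs: number;
  constructor(retryAfterMs: number) {
    super(`rate limited; retry in ${retryAfterMs} ms`);
    this.name = "RateLimitError";
    this.retryAfterMs = retryAfterMs;
  }
}

async function processJob(
  job: ShopifyJob,
  reserve: (shop: string, cost: number) => Promise<ReservationResult>,
  callShopify: (job: ShopifyJob) => Promise<void>,
  queue: DelayingQueue,
): Promise<"sent" | "deferred"> {
  try {
    const reservation = await reserve(job.data.shop, job.data.cost);
    // Reject before any HTTP request is made.
    if (!reservation.allowed) throw new RateLimitError(reservation.retryAfterMs);
    await callShopify(job);
    return "sent";
  } catch (err) {
    if (!(err instanceof RateLimitError)) throw err;
    // Hand the wait to Redis: enqueue a delayed copy and let this job complete.
    await queue.add(job.name, job.data, { delay: err.retryAfterMs });
    return "deferred";
  }
}
```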

Circuit Breaker Hooks: In severe throttling events (e.g., if the bucket is fully depleted and requires more than 15 seconds to leak), set a temporary “Circuit Open” flag that the K8s readiness probe reports as a failure. A not-ready pod is removed from its Service's endpoints, halting upstream traffic routed to it (for example, webhook ingestion) until the shared bucket leaks capacity. Readiness does not affect pull-based consumers, so queue workers must still pause consumption themselves.
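A minimal sketch of a readiness endpoint driven by the circuit flag, using only Node.js's built-in http module. The 15-second threshold comes from the text; recordRejection, readinessStatus, and the /readyz path are hypothetical. Kubernetes treats HTTP probe responses from 200 to 399 as success and anything else as failure, so returning 503 marks the pod not ready.

```typescript
import { createServer } from "node:http";

const CIRCUIT_THRESHOLD_MS = 15_000; // "more than 15 seconds to leak"

let circuitOpenUntil = 0; // epoch ms; hypothetical process-local flag

// Called with the retry delay from the reservation script. Opens (or extends)
// the circuit only for severe throttling; returns whether it is open.
function recordRejection(retryAfterMs: number, now: number = Date.now()): boolean {
  if (retryAfterMs <= CIRCUIT_THRESHOLD_MS) return false;
  circuitOpenUntil = Math.max(circuitOpenUntil, now + retryAfterMs);
  return true;
}

function readinessStatus(now: number = Date.now()): number {
  return now < circuitOpenUntil ? 503 : 200;
}

const server = createServer((req, res) => {
  if (req.url === "/readyz") {
    res.statusCode = readinessStatus();
    res.end(res.statusCode === 200 ? "ok" : "circuit open");
    return;
  }
  res.statusCode = 404;
  res.end();
});
```

In a real worker you would call server.listen(8080) and point the pod's readinessProbe at an httpGet on path /readyz and that port.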

6. Before vs. After: Performance Benchmarks

Migrating from fragmented local state to an atomic, distributed leaky bucket fundamentally alters the stability and throughput of your worker topologies. The following comparison summarizes how an autoscaled integration layer behaves under peak load in each model.

| Benchmark Metric | Fragmented Local State (p-limit) | Distributed Leaky Bucket (Redis Lua) | Impact / Resolution |
|---|---|---|---|
| 429 Thundering Herds | Constant (10x multiplier on 30/40 header) | Eliminated (Strict atomic reservation) | Avoids sustained Shopify API saturation. |
| K8s Pod Stability | High OOMKilled Rate (Event loop bloat) | Zero Evictions (OOM-safe backoffs) | Memory usage remains flat regardless of queue depth. |
| Kafka Group Status | Frequent Rebalancing (max.poll.interval.ms breaches) | Stable (Precise consumer.pause()) | Prevents stop-the-world reassignments during backoff. |
| Out-of-Band Caller Collisions | Blind (Overrides state downward) | Synchronized (High-water mark reconcile) | Safely shares the app's per-store Shopify API quota. |
| GraphQL Throughput | Artificially Throttled (Assumes max cost) | Optimized (Delta Cost Refund Pattern) | Maximizes actual point burn per second. |

Performance Audit and Specialized Engineering

Solving distributed state is not a matter of adding more compute; it is a matter of architectural precision. The boundaries of the Shopify API require exact, mathematical synchronization across your worker topologies. Building this out while managing K8s limits, Redis optimization, and Kafka rebalancing is the definition of the “Hard Parts” of backend engineering.

This is where Azguards Technolabs excels. We are the partner for specialized engineering and performance audits. We don’t just build software; we optimize enterprise infrastructure to withstand maximum concurrency without breaking. We analyze your Kafka partitions, audit your K8s scaling parameters, and implement robust, atomic backoff solutions tailored precisely to Shopify’s limits.

Relying on localized state in an autoscaled world is a trap. By shifting rate-limit authority to a centralized Redis cluster, leveraging atomic O(1) Lua scripts, mitigating K8s clock drift, and enforcing OOM-safe queue backoffs, you eliminate the 429 thundering herd. Your worker fleet can then scale out freely while aggregate API consumption stays within Shopify's limits, yielding predictably under load instead of fracturing.


Azguards Technolabs
