Race Conditions in Make.com: Eliminating the Dirty Write Cliff with Distributed Mutexes

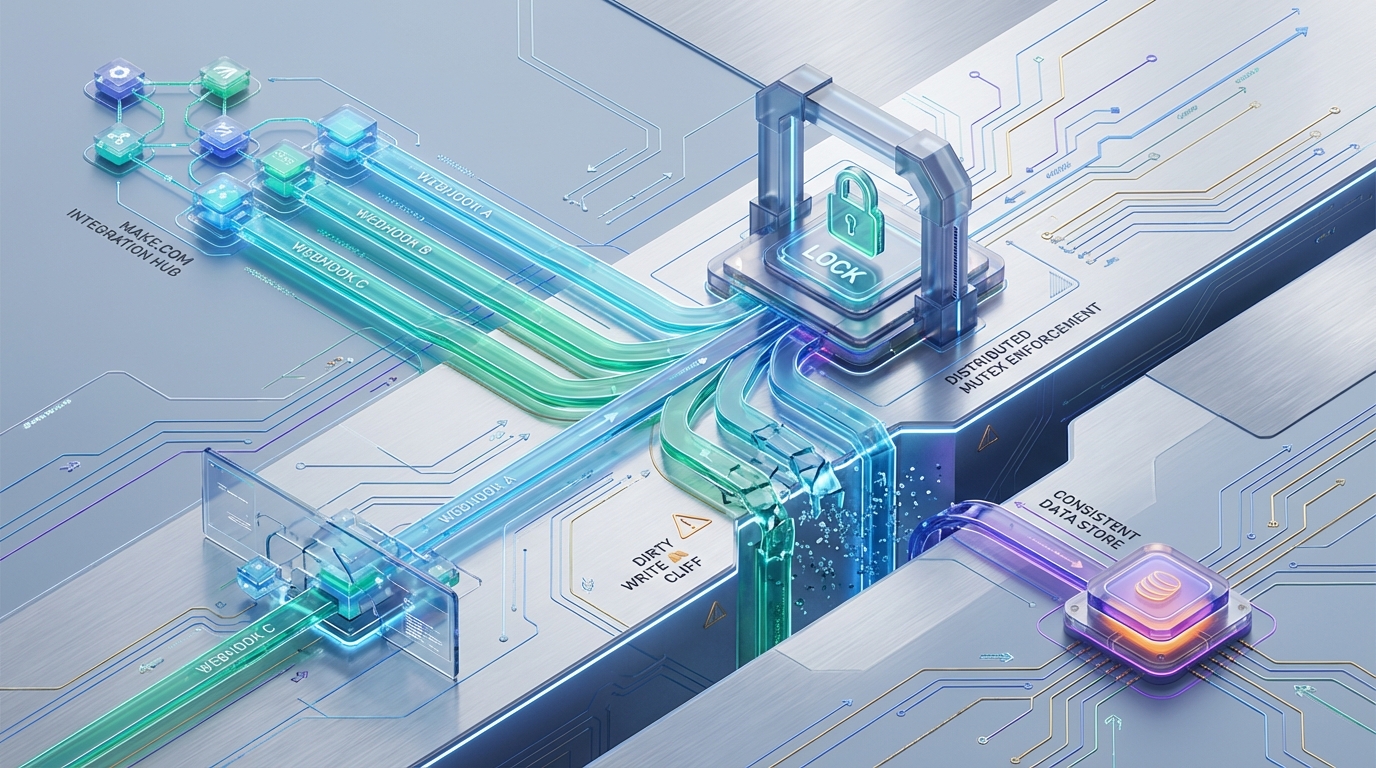

Scaling serverless automations in Make.com often leads to a silent, catastrophic failure mode: the “Dirty Write Cliff.” When parallel webhook bursts—such as a sudden influx of Stripe payment payloads—collide with Make’s optimistic state management, the lack of native row-level locking results in lost data and corrupted execution states. To enforce integrity without sacrificing throughput, we implement a REST-driven distributed mutex utilizing an ephemeral Redis layer—strictly enforcing atomic execution across isolated worker threads.

Vulnerability Analysis: Dissecting the Dirty Write

The fundamental flaw surfaces when multiple parallel workers target the same underlying data record during a burst event. The inherent race condition maps directly to how Make.com manages state across isolated execution threads.

Consider a scenario where two parallel workers receive payloads targeting the same internal customer record:

- T0: Worker A reads the target Data Store row into memory.

- T1: Worker B reads the exact same Data Store row into memory.

- T2: Worker A computes mutations in memory and writes the commit back to the Data Store.

- T3: Worker B computes its mutations based on the stale T1 read, and commits the write.

The T3 write blindly clobbers the T2 commit. The delta calculated by Worker A is permanently lost.
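The interleaving above can be replayed deterministically. The following is a minimal sketch that uses an in-memory dictionary as a stand-in for a Data Store row; the `store`, `read_row`, and `write_row` names and the balance values are hypothetical and exist only for illustration.

```python
# Deterministic replay of the T0..T3 interleaving against an in-memory
# stand-in for a Data Store row (all names and values are hypothetical).
store = {"cus_123": {"balance": 100}}


def read_row(key):
    # Each worker receives its own private copy of the row.
    return dict(store[key])


def write_row(key, row):
    # Blind overwrite: nothing checks whether the row changed since the read.
    store[key] = row


row_a = read_row("cus_123")      # T0: Worker A reads balance=100
row_b = read_row("cus_123")      # T1: Worker B reads balance=100
row_a["balance"] += 30           # T2: Worker A applies +30 ...
write_row("cus_123", row_a)      # ... and commits 130
row_b["balance"] += 20           # T3: Worker B applies +20 to its stale copy ...
write_row("cus_123", row_b)      # ... and commits 120, overwriting 130

final_balance = store["cus_123"]["balance"]
expected_balance = 100 + 30 + 20
lost_delta = expected_balance - final_balance
print(final_balance, expected_balance, lost_delta)  # 120 150 30
```

Worker A's +30 never reaches the stored row, and neither worker observes an error.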

This data loss is compounded by Make.com’s native webhook acknowledgment behavior. Upon ingesting a payload, Make.com implicitly returns a 200 OK to the origin webhook provider instantly. (The only exception is if a Custom Webhook Response module is utilized and placed synchronously in the execution path). This auto-acknowledgment offloads the entire retry and failure-handling responsibility onto Make’s internal execution queue. Because the origin provider (e.g., Stripe) assumes the delivery was successful, any dirty write occurring downstream is catastrophic; the provider will not retransmit the payload, and the failed mutation is swallowed by the execution engine.

Architectural Solution: The REST-Driven Distributed Mutex

Because Make.com operates via stateless containerized executions, it cannot maintain persistent, stateful TCP connections. This immediately rules out the use of native Redis client libraries or standard connection pooling protocols.

To bridge this, we implement our distributed locking mechanism using a REST-accessible Redis layer (such as Upstash). This provides the latency benefits of in-memory data structures while conforming to Make.com’s HTTP-only outbound constraints.

We guarantee atomicity by leveraging the Redis SET command explicitly coupled with the NX (Not eXists) and PX (Milliseconds TTL) arguments. This ensures that across $N$ concurrent workers, only a single Make.com thread can successfully acquire the lock for a highly specific resource (e.g., lock:stripe_cus_123).

Redis Lock Acquisition Payload (Upstash REST API)

To initiate the lock, the Make.com worker executes the following HTTP request:
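As a minimal sketch of such a request, the Python helper below describes the call rather than sending it. It assumes the Upstash convention of POSTing the Redis command as a JSON array to the database's REST URL with a bearer token. The function name, the `base_url` and `token` parameters, and the example values are hypothetical; in Make.com the same fields would be entered in the HTTP module, with `{{sys.IMTID}}` as the lock value.

```python
import json


def build_acquire_request(base_url, token, customer_id, execution_id, ttl_ms=15000):
    """Describe the HTTP call for: SET lock:<customer_id> <execution_id> NX PX <ttl_ms>."""
    command = ["SET", f"lock:{customer_id}", execution_id, "NX", "PX", ttl_ms]
    return {
        "method": "POST",
        "url": base_url,  # assumed: the REST URL of the Redis database
        "headers": {
            "Authorization": f"Bearer {token}",  # assumed: REST token auth
            "Content-Type": "application/json",
        },
        "body": json.dumps(command),
    }


# Example with placeholder values only.
request = build_acquire_request(
    base_url="https://example-redis.invalid",
    token="REDACTED",
    customer_id="stripe_cus_123",
    execution_id="exec-0001",
)
print(request["body"])
```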

Engineering Rationale:

Thread Identity ({{sys.IMTID}}): The lock’s value is bound directly to Make’s internal execution ID (sys.IMTID). This explicitly binds the mutex to the specific worker thread attempting the mutation, ensuring traceability and preventing rogue unlocking.

Deadlock Prevention (PX: 15000): The PX argument (15,000ms) acts as a hard, non-negotiable safety net. If a Make scenario hits an Out-Of-Memory (OOM) exception, strikes its 40-minute hard execution timeout limit, or encounters an unhandled runtime error before explicitly releasing the lock, the Redis instance will automatically purge the key after 15 seconds. This ensures the resource is never permanently deadlocked.
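To make the NX and PX semantics concrete, here is a small in-memory model with an injected clock. It is an illustration only, not a Redis client: the `FakeLockStore` class is hypothetical. The owner check in `release` also goes one step beyond a plain DEL; against a real Redis instance, an atomic check-and-delete of this kind is commonly done with a short Lua script.

```python
class FakeLockStore:
    """In-memory model of `SET key value NX PX ttl` (hypothetical, for illustration)."""

    def __init__(self):
        self._locks = {}  # key -> (owner, expires_at_ms)

    def acquire(self, key, owner, ttl_ms, now_ms):
        held = self._locks.get(key)
        if held is not None and held[1] > now_ms:
            return None  # NX fails: an unexpired lock already exists
        self._locks[key] = (owner, now_ms + ttl_ms)
        return "OK"

    def release(self, key, owner, now_ms):
        held = self._locks.get(key)
        # Only the current, unexpired owner may delete the lock.
        if held is None or held[1] <= now_ms or held[0] != owner:
            return 0
        del self._locks[key]
        return 1


locks = FakeLockStore()
first = locks.acquire("lock:cus_123", "exec-A", 15000, now_ms=0)       # acquired
second = locks.acquire("lock:cus_123", "exec-B", 15000, now_ms=1000)   # denied
rogue = locks.release("lock:cus_123", "exec-B", now_ms=2000)           # not the owner
# exec-A crashes without releasing; the TTL frees the key at 15000 ms.
after_crash = locks.acquire("lock:cus_123", "exec-B", 15000, now_ms=15000)
print(first, second, rogue, after_crash)  # OK None 0 OK
```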

Make.com Topology & Execution Flow

Implementing a distributed mutex within a visually programmed automation platform requires rigid topological control. If implemented incorrectly, the lock checks will saturate the execution engine and trigger unnecessary API rate limits. The scenario topology must explicitly segregate the lock check, the execution path, and the backoff queue.

Module Configuration Sequence

- Trigger: Custom Webhook configured with Parallel Processing Enabled.

- Lock Acquisition: An HTTP “Make a Request” module executes the SET NX REST call to the Redis cluster.

- Router Evaluation: A standard Router module evaluates the Upstash HTTP response payload to determine concurrency states.

Route A: Lock Acquired (Proceed)

Filter Condition: HTTP Status Code == 200 AND Body == "OK".

Execution: The worker enters the critical section (e.g., querying the Data Store, calculating the delta, and writing the DB mutation).

Mutex Release: Immediately upon exiting the critical section, a secondary HTTP “Make a Request” module executes DEL lock:{{webhook.customer_id}}. This explicitly frees the queue for pending executions before the 15-second TTL natively expires.

Route B: Lock Denied (Backoff)

Filter Condition: Body == null. (Upstash returns a null payload when the NX condition fails).

Fault Injection: The worker intentionally throws an error to halt the current execution thread. We force this by routing the payload into a purposefully misconfigured module (e.g., a JSON Parse module fed invalid syntax, or a dummy API call configured to fail).

Error Handling: We attach a Break Directive directly to the failing module.
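The two router filters can be expressed as a single decision function. This is a sketch under assumptions: the `choose_route` name is hypothetical, and because the exact response body depends on the REST layer, the function accepts either a bare `"OK"`/`null` value or a JSON envelope with a `result` field.

```python
import json


def choose_route(status_code, body_text):
    """Return 'A' (lock acquired), 'B' (lock denied), or 'error' (anything else)."""
    if status_code != 200:
        return "error"
    try:
        payload = json.loads(body_text)
    except (TypeError, ValueError):
        return "error"
    # Assumed shapes: a bare "OK"/null, or an envelope such as {"result": "OK"}.
    if isinstance(payload, dict):
        if "result" not in payload:
            return "error"
        result = payload["result"]
    else:
        result = payload
    if result == "OK":
        return "A"
    if result is None:
        return "B"
    return "error"


print(choose_route(200, '{"result": "OK"}'))   # A
print(choose_route(200, '{"result": null}'))   # B
print(choose_route(500, ""))                   # error
```

Keeping a third outcome separate from Route B avoids treating an outage of the lock service as ordinary contention.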

Break Directive Configuration for Controlled Backoff

The Make.com Break error handler natively intercepts the intentional fault and dumps the execution payload directly into Make.com’s “Incomplete Executions” queue.

To govern the retry logic, the Break directive is configured with the following parameters:

Number of retries: 10

Interval: 1 minute

Sequential processing of retries: Yes

The Exponential Backoff Constraint: Make.com imposes a hard minimum limit of a 1-minute interval on the Break module. This renders true, sub-minute exponential backoff architectures (e.g., retrying at 500ms, 1s, 2s) natively impossible. Consequently, the Redis TTL (PX) must be mathematically tuned to the maximum expected execution time of the critical section. By setting the PX to 10-15 seconds, we ensure the lock is predictably cleared well before the 1-minute Break retry attempts its first execution.
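The tuning rule can be written as a small sanity check: the TTL should cover the critical section, yet expire before the first Break retry. The `check_lock_ttl` helper below is a hypothetical sketch; the 2,090 ms figure is an assumed critical-section duration used only as an example.

```python
def check_lock_ttl(px_ms, critical_section_ms, retry_interval_ms=60_000):
    """Return a list of problems with the chosen TTL (empty list means it looks sane)."""
    problems = []
    if px_ms < critical_section_ms:
        problems.append("TTL is shorter than the critical section: the lock can expire mid-write")
    if px_ms >= retry_interval_ms:
        problems.append("TTL is not shorter than the retry interval: a crashed holder can block the first retry")
    return problems


print(check_lock_ttl(px_ms=15_000, critical_section_ms=2_090))  # []
print(check_lock_ttl(px_ms=1_000, critical_section_ms=2_090))
```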

Latency Trade-offs & Theoretical Engineering Model

To justify the architectural overhead of injecting HTTP-based mutexes into a low-latency webhook ingestion flow, we must model the throughput of Make Sequential Processing against Parallel Execution + REST Mutex.

Hard Limits & Baseline Assumptions

Webhook Burst Arrival: 100 requests arriving at $t = 1\,\text{s}$.

Make Sequential Limit: 1 execution processed at a time.

Make Parallel Worker Limit: $N = 40$ concurrent workers (typical for Enterprise tiers).

Critical Section Execution Time ($S_c$): $2.0\,\text{s}$.

REST Redis Latency Overhead ($L_r$): $45\,\text{ms}$ per HTTP call (Acquire + Release = $90\,\text{ms}$ total penalty per worker).

Scenario A: Make.com Sequential Processing

Relying on default constraints, Make.com pushes all 100 webhooks into its internal message broker (which supports a maximum queue size of 100,000 payloads at 5MB per payload). The engine processes them strictly sequentially.

Throughput Calculation: $1\ \text{worker} / 2.0\,\text{s} = 0.5\ \text{req/sec}$.

Total Burst Clearance Time: $100\ \text{req} \times 2.0\,\text{s} = 200\ \text{seconds}$.

Trade-off: While this guarantees zero race conditions and eliminates the dirty write cliff, the latency cost is extreme. Furthermore, it introduces severe head-of-line blocking. Subsequent, unrelated webhook events (e.g., a payment event for Customer B) are blocked entirely by the queue clearing Customer A’s payloads.

Scenario B: Parallel Processing + REST Mutex

Make.com spins up 40 concurrent workers. Each incurs a $45\,\text{ms}$ penalty to evaluate lock state.

Throughput (Different Mutex Keys): If the 100 webhooks map to different entities (e.g., 100 different Stripe customers), all locks are acquired simultaneously without contention.

Clearance Time: Processing requires $\lceil 100/40 \rceil = 3$ discrete execution batches: $3\ \text{batches} \times (2.0\,\text{s} + 0.09\,\text{s}) = 6.27\ \text{seconds}$.

Result: Roughly a 32x throughput improvement over native sequential processing (an increase of about 3,000%), while the per-key lock preserves data integrity.

Throughput (Competing for the Same Key): If 10 of those webhooks compete for the exact same customer lock, 1 worker successfully acquires it while the remaining 9 hit the Break module via Route B.

Result: The 9 failed payloads are shunted into the 1-minute Incomplete Executions retry queue. While the short-term throughput for that specific entity is intentionally delayed, the parallel execution engine is instantly freed to process the remaining webhooks. This architecture entirely eliminates the head-of-line blocking that plagues Scenario A.
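The arithmetic for both scenarios can be reproduced in a few lines. This sketch uses only the baseline assumptions stated earlier (burst size, worker limit, critical-section time, and per-call Redis latency); the constant names are chosen here for readability.

```python
import math

BURST = 100      # webhooks in the burst
WORKERS = 40     # parallel worker limit (N)
S_C = 2.0        # critical section time in seconds
L_R = 0.045      # REST Redis latency per HTTP call in seconds

# Scenario A: one execution at a time, no lock overhead.
sequential_clearance = BURST * S_C

# Scenario B: batches of up to WORKERS, each paying acquire + release latency.
batches = math.ceil(BURST / WORKERS)
parallel_clearance = batches * (S_C + 2 * L_R)

sequential_throughput = BURST / sequential_clearance
parallel_throughput = BURST / parallel_clearance
speedup = sequential_clearance / parallel_clearance

print(f"sequential: {sequential_clearance:.2f}s, {sequential_throughput:.1f} req/sec")
print(f"parallel:   {parallel_clearance:.2f}s, {parallel_throughput:.1f} req/sec")
print(f"speedup:    {speedup:.1f}x")
```

The parallel figure applies to the uncontended case only; payloads that lose the lock are deferred to the 1-minute retry queue and fall outside this model.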

Performance Benchmark Comparison

| Metric | Scenario A: Native Sequential Processing | Scenario B: Parallel + REST Mutex |
|---|---|---|
| Data Integrity | Guaranteed | Guaranteed |
| Concurrency Limit | 1 Worker | 40 Workers (N) |
| Total Clearance Time (100 distinct reqs) | 200.0 seconds | 6.27 seconds |
| Throughput (req/sec) | 0.5 req/sec | 15.9 req/sec |
| Head-of-Line Blocking | Severe | Eliminated |
| Added Latency Penalty | 0ms | 90ms |

Azguards Technolabs: Performance Audit and Specialized Engineering

Default platform configurations fail at scale. When transitioning from linear automation to enterprise-grade event-driven architectures, the limitations of optimistic state management become immediate production bottlenecks. Designing around the constraints of platform execution engines requires more than standard API integrations; it demands rigorous distributed systems engineering.

At Azguards Technolabs, we serve as the specialized engineering partner for enterprise teams scaling their operational infrastructure. We conduct deep-dive Performance Audits and architect specialized, high-concurrency topologies that bypass the hard limits of platforms like Make.com. We don’t just build workflows—we engineer resilient integration infrastructure designed to survive high-velocity data bursts.

Relying on Make.com’s native sequential processing to avoid data corruption is an unacceptable compromise for high-throughput environments. By deploying a REST-driven Redis mutex, engineering teams can entirely resolve the platform’s dirty write vulnerability without sacrificing the horizontal scalability of the parallel execution engine.

The $90\,\text{ms}$ total HTTP overhead introduced by the external lock evaluation is mathematically negligible when measured against the 190+ second latency penalty native sequential queuing forces upon burst workloads. State management in serverless environments is hard, but it is a solved problem.

Ready to eliminate race conditions in your integration pipelines? Contact Azguards Technolabs today for an architectural review or complex implementation partnership. Let us solve the hard parts of your engineering stack.
