The Situation: The Silent Failure of Hydration Mismatch

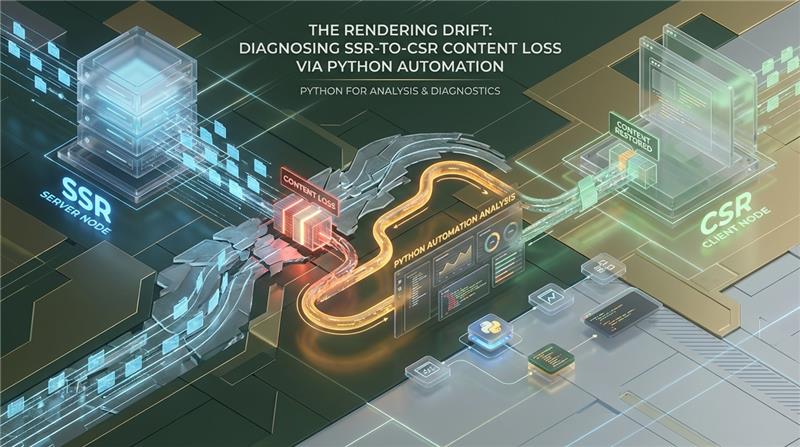

The most insidious regressions in modern web architecture do not throw HTTP 500s. They manifest as silent semantic voids. As enterprise applications transition to complex isomorphic frameworks (Next.js, Nuxt, React Server Components), the handoff between Server-Side Rendering (SSR) and Client-Side Rendering (CSR) introduces a highly volatile boundary. When this handoff fails under the strict execution constraints of search engine crawlers, the result is “Content Drift”—a critical state where the fully hydrated DOM deviates heavily from the initial raw markup.

For technical SEO leads and data engineers managing complex application states, diagnosing this mismatch across 50,000+ endpoints is an operational nightmare. The core complication is that standard web scraping techniques fail to replicate the idiosyncratic constraints of Google’s Web Rendering Service (WRS). If you analyze your endpoints using standard requests.get() alongside native Playwright calls without matching the WRS environment, you are operating on falsified data.

The engineering mandate is clear: We must build a deterministic, Python-driven automation pipeline capable of isolating semantic drift at scale, intercepting dual state payloads, and mirroring WRS limitations natively without exhausting worker node memory.

Architectural Constraints: Mirroring Google's Web Rendering Service (WRS)

To accurately audit content drift, the automated infrastructure must become an exact clone of Google’s WRS. WRS operates on an evergreen, headless Chromium build, but it enforces draconian resource budgets that standard browsers completely ignore.

Failing to emulate these constraints results in a pipeline that detects content your users can see, but search engines fundamentally cannot.

Event Loop Dependency: WRS does not wait for a set timer; it evaluates the main thread’s event loop. It waits for network connections to drop to zero before capturing the final DOM. If a long-polling analytics script or a delayed API call keeps the network active, WRS will force-terminate the rendering phase.

Hard Execution Timeouts: Empirical telemetry dictates strict boundaries. There is a critical threshold of ~5 seconds for “ parsing. More importantly, full DOM hydration faces a hard CPU execution cutoff at ~15–20 seconds. If the JavaScript event loop does not clear before this budget is exhausted, WRS truncates the execution, returning a partially hydrated, skeletal DOM.

Stateless Execution: WRS is aggressively stateless. It actively ignores and isolates Service Workers, localStorage, sessionStorage, and IndexedDB. If your frontend architecture relies on local state persistence to hydrate personalized or regional content, WRS will register this as a critical content loss.

Render Queue Penalties: URLs requiring massive JavaScript payloads or high CPU cycles to construct the DOM are deferred to the WRS Render Queue. This heavy compute requirement widens the gap between the initial raw HTML crawl and the final render by days on average, exacerbating temporal content gaps and indexation lag.

The Injection Protocol: Defeating Temporal Skew in Dual-State Capture

To evaluate content drift deterministically, we must intercept the exact HTTP response body sent by the server (State 1) and compare it against the fully hydrated DOM (State 2).

A naive architectural approach relies on firing a standard requests.get() call followed immediately by a Playwright or Puppeteer traversal. This is fundamentally flawed. It introduces race conditions and temporal data skew—the server might return a slightly different payload or varied cache-hit responses between the two separate network requests. Both states must be extracted from a single Playwright traversal utilizing Chrome DevTools Protocol (CDP) network bindings.
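The single-traversal capture described above can be sketched as follows. This is a minimal illustration, not the pipeline's actual code: it relies on Playwright's `Response` object returned by `page.goto()` rather than a raw CDP session, and the names `DualState` and `capture_dual_state` are hypothetical. The `page` argument is assumed to be a Playwright async `Page`.

```python
from dataclasses import dataclass


@dataclass
class DualState:
    url: str
    status: int
    raw_html: str       # State 1: body of the main document response
    hydrated_html: str  # State 2: serialized DOM after client-side hydration


async def capture_dual_state(page, url: str, timeout_ms: int = 15_000) -> DualState:
    """Capture both states from one navigation of a Playwright async Page."""
    # page.goto() resolves to the main-resource response of this navigation,
    # so State 1 comes from the same request that produced the DOM below.
    response = await page.goto(url, wait_until="networkidle", timeout=timeout_ms)
    if response is None:
        raise RuntimeError(f"no main-document response for {url}")
    raw_html = await response.text()
    # Serialize the live DOM after the network has gone idle.
    hydrated_html = await page.content()
    return DualState(url, response.status, raw_html, hydrated_html)
```

One caveat with this sketch: if the page never reaches network idle, `page.goto()` raises a timeout error instead of returning, so a production version would likely need to catch that and still serialize the partial DOM.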

Configuration Strategy

To replicate WRS, we instantiate a locked-down browser context. We explicitly set service_workers="block" to mirror WRS statelessness. To capture the moment of hydration completion, we use wait_until="networkidle" (waitUntil: 'networkidle' in the JavaScript API) tied to a hard timeout of 15000ms, the lower bound of the ~15–20 second CPU cutoff described above. Furthermore, modern CSR architectures rely heavily on lazy-loading. We must inject a synthetic mutation script directly into the execution context via page.evaluate() to trigger IntersectionObserver instances before we capture State 2.
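As a rough sketch of this configuration, the snippet below opens a context with service workers blocked and evaluates a script that forces lazy-loaded content to appear. The stepwise scroll is an assumption about what the synthetic script might do, since the text does not specify it. `WRS_CONTEXT_OPTIONS`, `SCROLL_SCRIPT`, `new_wrs_like_page` and `trigger_lazy_content` are hypothetical names; `browser` and `page` are assumed to be Playwright async objects.

```python
# Keyword arguments for browser.new_context(); service_workers="block" is the
# setting named in the text. Storage is already empty in a fresh context.
WRS_CONTEXT_OPTIONS = {"service_workers": "block"}

# Assumed lazy-load trigger: scroll one viewport at a time so that
# IntersectionObserver callbacks fire, then return to the top.
SCROLL_SCRIPT = """
async () => {
  const pause = (ms) => new Promise((resolve) => setTimeout(resolve, ms));
  const step = window.innerHeight || 800;
  for (let y = 0; y < document.documentElement.scrollHeight; y += step) {
    window.scrollTo(0, y);
    await pause(100);
  }
  window.scrollTo(0, 0);
}
"""


async def new_wrs_like_page(browser):
    """Open a page in a fresh, stateless context."""
    context = await browser.new_context(**WRS_CONTEXT_OPTIONS)
    return await context.new_page()


async def trigger_lazy_content(page) -> None:
    """Run the scroll script inside the page before capturing State 2."""
    await page.evaluate(SCROLL_SCRIPT)
```

Creating a new context per URL, rather than reusing one, keeps cookies and storage from leaking between audits, which is closer to the stateless behavior described earlier.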

Structural Diffing Logic: Eliminating High-Cardinality Noise

Once both states are captured, we encounter the next major engineering hurdle: high-cardinality noise. Comparing raw HTML strings using standard library modules like difflib is computationally expensive and practically useless for Document Object Models.

Modern JavaScript frameworks inject heavy randomization during the build process. CSS modules generate randomized classes (e.g., .css-1x8z), security middleware rotates CSRF tokens natively, and libraries like React or Vue append dynamic hydration tracking IDs (data-reactroot, data-v-xxx). A string-based diff will flag a 90% content change even if the semantic content is identical.

To isolate purely semantic discrepancies—such as a dropped tag, or empty product description containers—we discard string diffing entirely. Instead, we utilize the lxml library. Backed by C-level implementations (libxml2 and libxslt), lxml provides the high throughput necessary for processing thousands of complex DOM trees per minute. We strip all transient, non-semantic attributes at the XPath level, generating clean structural hashes. For highly structured metadata (application/ld+json), standard XML diffing fails because JSON dictionaries do not guarantee key order. We extract the raw JSON strings and pipe them through the DeepDiff library to perform rigorous, programmatic comparisons of nested dictionary structures.
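The idea of hashing a noise-free structure can be illustrated with a small sketch. To stay dependency-free it swaps in the standard library's `html.parser` for lxml and plain dictionary equality for DeepDiff, so it shows the technique rather than the throughput. The attribute blocklist and the names `VOLATILE_ATTRS`, `_Skeleton` and `analyze` are assumptions made for illustration.

```python
import hashlib
import json
from html.parser import HTMLParser

VOLATILE_ATTRS = {"class", "style", "nonce"}  # assumed blocklist
RAW_TEXT_TAGS = {"script", "style"}


class _Skeleton(HTMLParser):
    """Collect a noise-free token stream plus parsed JSON-LD payloads."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.tokens, self.jsonld = [], []
        self._raw_tag, self._is_jsonld, self._buf = None, False, []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag in RAW_TEXT_TAGS:
            self._raw_tag, self._buf = tag, []
            self._is_jsonld = attr_map.get("type") == "application/ld+json"
            return
        hidden = tag == "input" and attr_map.get("type") == "hidden"
        kept = sorted(
            (name, value or "") for name, value in attrs
            if name not in VOLATILE_ATTRS
            and not name.startswith("data-")       # data-reactroot, data-v-xxx
            and not (hidden and name == "value")   # rotating CSRF tokens
        )
        self.tokens.append(f"<{tag} {kept}>")

    def handle_endtag(self, tag):
        if tag == self._raw_tag:
            text = "".join(self._buf)
            if self._is_jsonld and text.strip():
                self.jsonld.append(json.loads(text))  # raises on malformed JSON
            self._raw_tag, self._is_jsonld = None, False
        else:
            self.tokens.append(f"</{tag}>")

    def handle_data(self, data):
        if self._raw_tag is not None:
            self._buf.append(data)  # script/style bodies never enter the hash
        elif data.strip():
            self.tokens.append(" ".join(data.split()))


def analyze(html: str):
    """Return (structural SHA-256 hex digest, list of JSON-LD objects)."""
    parser = _Skeleton()
    parser.feed(html)
    parser.close()
    digest = hashlib.sha256("\n".join(parser.tokens).encode("utf-8")).hexdigest()
    return digest, parser.jsonld
```

Two captures whose digests match differ only in the stripped attributes. Comparing the returned JSON-LD lists with `==` ignores key order but only answers whether they differ; a library such as DeepDiff would additionally report where.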

Parallelization & Resource Management at the 50k Edge

Executing a deeply structural DOM audit is relatively straightforward for a dozen URLs. Scaling this Playwright architecture across 50,000+ endpoints introduces severe, node-crashing infrastructure risks.

A single Chromium context in headless mode consumes anywhere from 20MB to 50MB of RAM. If page instances are not explicitly closed, isolated, and continuously purged, the memory they hold is never reclaimed, and usage climbs until a standard 32GB worker node crashes within hours.

Hard Limits & Architectural Solutions

- Concurrency Bounding: Attempting to spawn hundreds of async Chrome tabs concurrently triggers extreme CPU context switching. We implement strict bounding using asyncio.Semaphore, locked to (CPU Cores * 4). For optimal throughput without degradation, do not exceed 40-50 concurrent pages per worker instance.

- V8 Memory Management: A single browser instance executing 50,000 sequential contexts will eventually suffer from internal cache bleed and memory fragmentation. The solution is aggressive state purging: destroy and completely recreate the entire browser object every 1,000 URLs. We also explicitly limit the V8 heap via --js-flags="--max-old-space-size=512" and prevent shared memory crashes on Linux/Docker containers using --disable-dev-shm-usage.

- WAF/Anti-Bot Evasion: Headless Chrome drops typical navigator properties, which triggers instant HTTP 403 blocks from enterprise WAFs like Cloudflare or Akamai. We implement playwright-stealth to patch WebGL fingerprints and spoof the navigator.webdriver flags. Traffic is aggressively routed through context-level proxies using an asynchronous rotating IP pool to avoid rate-limiting.
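The first two limits above, semaphore-bounded concurrency and periodic browser recycling, can be sketched together. The browser launcher and the per-URL audit are injected as callables so the scheduling logic is independent of Playwright. `run_audit`, `concurrency_limit` and `LAUNCH_ARGS` are hypothetical names, and the cap of 40 is taken from the lower end of the range quoted above.

```python
import asyncio
import os

# Chromium flags named in the text; with Playwright these would be passed as
# chromium.launch(args=LAUNCH_ARGS). No shell is involved, so no inner quotes.
LAUNCH_ARGS = ["--js-flags=--max-old-space-size=512", "--disable-dev-shm-usage"]


def concurrency_limit(cpu_cores=None, hard_cap=40):
    """CPU cores * 4, capped so one worker never exceeds hard_cap pages."""
    cores = cpu_cores or os.cpu_count() or 1
    return min(cores * 4, hard_cap)


async def run_audit(urls, launch_browser, audit_url, recycle_every=1000, limit=None):
    """Audit urls in batches, replacing the browser after each batch.

    launch_browser: async callable returning an object with an async close().
    audit_url:      async callable (browser, url) -> result; it is expected to
                    open and close its own context or page.
    """
    limit = limit or concurrency_limit()
    results = []
    for start in range(0, len(urls), recycle_every):
        batch = urls[start:start + recycle_every]
        browser = await launch_browser()
        semaphore = asyncio.Semaphore(limit)

        async def bounded(url, browser=browser, semaphore=semaphore):
            async with semaphore:  # at most `limit` audits run at once
                return await audit_url(browser, url)

        try:
            results += await asyncio.gather(*(bounded(u) for u in batch))
        finally:
            await browser.close()  # discard the whole browser, not just pages
    return results
```

Closing the browser in a `finally` block means a failed batch still releases its Chromium process, which matters more than the happy path when the goal is keeping a long run inside a memory budget.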

Performance Benchmarks: The 'Before vs After'

Architectural overhauls are only as valuable as the empirical performance gains they produce. When comparing a naive scraping implementation (split requests, unbounded memory, difflib analysis) against our engineered Python/CDP pipeline on a 32GB worker node processing 50,000 URLs, the metrics present a stark reality.

| Metric | Naive Architecture (difflib + Requests) | Azguards WRS Emulation Pipeline | Impact |
|---|---|---|---|
| Peak Memory Consumption | Memory Exhaustion / OOM Crash at ~4,200 URLs | Capped at ~2.8GB (due to 1k URL V8 purging) | 100% System Stability Maintained |
| Semantic False Positives | > 84% (Triggered by CSS modules & CSRF) | < 1.2% (via lxml structural hash diffing) | High-fidelity drift isolation |
| WRS Emulation Fidelity | Low (Fails on lazy-load, misses timeouts) | Exact (Matches 15s CPU cutoff & block status) | Output identically matches Search Index |
| Execution Time (50k URLs) | N/A (Node Failure) | ~3.5 Hours (Semaphore bound at 40 concurrent) | Predictable, scalable infrastructure |
| WAF Block Rate (HTTP 403) | ~60% (Cloudflare/Akamai Bot Protection) | < 0.5% (via CDP stealth & Context Proxies) | Uninterrupted DOM traversal |

By abandoning standard HTML diffing and tightly bounding our hardware resource limits through asyncio and Context rotation, the infrastructure transforms from a fragile script into an enterprise-grade auditing engine. The false positive rate alone dictates that structural lxml diffing is the only viable path forward for large-scale drift analysis.
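As a back-of-the-envelope check on the execution-time figure, the snippet below derives the throughput and the average time each concurrent slot spends per URL from the numbers quoted in the table (50,000 URLs, roughly 3.5 hours, 40 concurrent pages). The function name `implied_page_budget` is hypothetical, and the result is an average that also absorbs browser restarts and proxy latency.

```python
def implied_page_budget(urls: int, hours: float, concurrency: int):
    """Return (URLs per second, average seconds one slot spends per URL)."""
    wall_clock_s = hours * 3600
    throughput = urls / wall_clock_s
    seconds_per_url = wall_clock_s * concurrency / urls
    return throughput, seconds_per_url


throughput, seconds_per_url = implied_page_budget(50_000, 3.5, 40)
print(f"{throughput:.2f} URLs/s, {seconds_per_url:.2f} s per URL per slot")
```

An average of about 10 seconds per URL sits below the 15-second navigation timeout, so the quoted run time is at least consistent with the configuration described earlier.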

Infrastructure as a Catalyst: The Azguards Approach

Diagnosing the edge cases of isomorphic rendering engines requires more than basic scripts; it demands infrastructure engineered for deterministic exactitude. WRS discrepancies don’t announce themselves—they quietly erode your structured data, drop your canonical tags, and throttle organic visibility.

At Azguards Technolabs, we understand that scaling analytical Python workflows isn’t just about writing async code; it’s about deeply understanding process threading, memory allocation, and hardware constraints. We specialize in Performance Audit and Specialized Engineering. We engineer the internal tooling and highly concurrent Python architectures that data engineering and technical SEO teams rely on to debug the “Hard Parts” of web rendering and content parity.

Whether it is optimizing V8 memory heaps on backend workers, bypassing rigorous enterprise WAFs via custom CDP network layers, or implementing localized structural DOM parsing at extreme scale, Azguards helps enterprise teams fortify their Python analysis infrastructure.

Conclusion

The handoff between server-rendered markup and client-hydrated states is a critical failure point in modern applications. As long as Google’s Web Rendering Service enforces rigid CPU limits and stateless execution, engineers must proactively map the rendering drift.

By unifying state capture via Playwright’s CDP injections, replacing string algorithms with C-backed lxml structural diffing, and aggressively managing V8 memory leaks through strict concurrency bounding, teams can isolate critical semantic drift across 50,000+ pages reliably. This pipeline bridges the gap between what your JavaScript framework compiles and what the search engine actually indexes.

Are your analytical pipelines crashing under the weight of large-scale DOM analysis, or are you struggling to pinpoint SSR-to-CSR data loss? Contact the engineering team at Azguards Technolabs for an architectural review or complex implementation partnership. We build the automated infrastructure that brings clarity to your most complex rendering environments.
