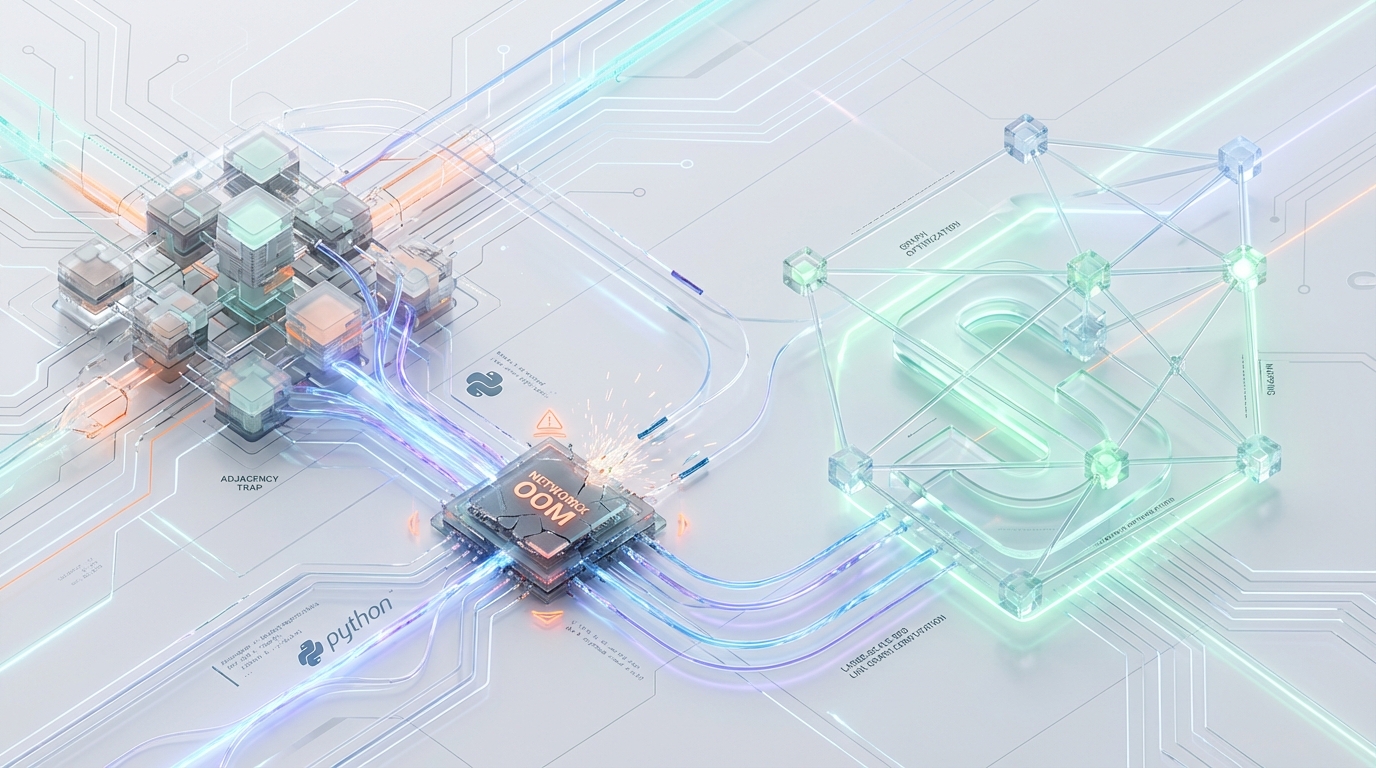

Scaling Enterprise SEO Graphs Without OOM Kills: A Polyglot Architecture Approach

Analyzing massive SEO link graphs at scale presents a unique class of computational challenges. When processing internal linking structures from high-throughput enterprise crawls, the topological complexity scales non-linearly. A site with a few million pages can easily generate tens of millions of directed edges.

The standard analytical approach relies on Python’s NetworkX. However, as link volume grows, data science teams run into sudden pipeline failures. A Kubernetes container provisioned with a 16 GiB memory limit (limits.memory: 16Gi) running a straightforward PageRank computation terminates abruptly. The diagnostics show an OOMKilled termination, enforced by the cgroup memory limit, at around the 35M–40M edge mark, specifically during the add_edges_from() ingestion phase.

The complication is not the size of the data—it is the memory representation of the data structure itself. NetworkX is structurally incapable of scaling to enterprise graph dimensions within reasonable memory bounds.

The resolution requires divorcing topological graph processing from algebraic computations. By migrating to a polyglot graph computation architecture—leveraging SciPy’s Compressed Sparse Row (CSR) matrices for spectral algorithms and Rustworkx for topological traversal—engineering teams can drop memory consumption by ~30x and eliminate OOM kills entirely.

Here is the deep-dive into the adjacency trap, and the precise engineering strategy to bypass it.

1. Architectural Diagnostics: NetworkX Memory Scaling Limitations

The primary scaling bottleneck in Python analytical graph pipelines originates from NetworkX’s native representation of directed graphs (DiGraph and MultiDiGraph). NetworkX does not utilize contiguous memory arrays; instead, it relies on a heavily nested, dynamically allocated dict-of-dicts-of-dicts structure.

While this architecture provides O(1) arbitrary node insertion and high mutability, it is aggressively hostile to the CPython memory model at scale.
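To make the per-edge overhead concrete, the following is a minimal sketch that contrasts a hand-built dict-of-dicts-of-dicts adjacency with two flat integer arrays. The nested structure only mimics the layout described above, it is not NetworkX's actual internals, and the helper names and synthetic graph are hypothetical. `sys.getsizeof` counts container overhead only, so the nested figure should be read as a lower bound.

```python
import sys
from array import array

def nested_adjacency_bytes(edges):
    """Approximate container bytes for a dict-of-dicts-of-dicts adjacency."""
    adj = {}
    for src, dst in edges:
        adj.setdefault(src, {})[dst] = {}   # one attribute dict per edge
        adj.setdefault(dst, {})             # every node gets an outer entry
    total = sys.getsizeof(adj)
    for nbrs in adj.values():
        total += sys.getsizeof(nbrs)
        total += sum(sys.getsizeof(attrs) for attrs in nbrs.values())
    return total

def flat_array_bytes(edges):
    """Bytes for the same edges stored as two contiguous C-int arrays."""
    src = array("i", (s for s, _ in edges))
    dst = array("i", (d for _, d in edges))
    return src.itemsize * len(src) + dst.itemsize * len(dst)

# Synthetic graph: 10,000 nodes with 10 out-links each (hypothetical data).
edges = [(n, (n + k) % 10_000) for n in range(10_000) for k in range(1, 11)]
nested = nested_adjacency_bytes(edges)
flat = flat_array_bytes(edges)
print(f"nested dicts: {nested:,} bytes, flat arrays: {flat:,} bytes")
```

The exact ratio depends on the CPython version and on how many attributes each edge carries, but the gap comes from the same source in every case: each edge in the nested layout owns at least one separate heap object.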

Core Memory Bottlenecks & Hard Limits

2. Theoretical Engineering Model & Benchmarks

To quantify the architectural deficit of NetworkX, we compare the library against low-level, memory-contiguous alternatives.

Note: The following figures are modeled estimates rather than measured results, assuming standard analytical compute instances (e.g., AWS ml.t3.2xlarge or m5.2xlarge, with 32 GB RAM and 8 vCPUs).

| Metric / Operation | NetworkX (DiGraph) | SciPy (csr_matrix) | Rustworkx (PyDiGraph) |
|---|---|---|---|
| Data Structure | Hash Table (dict-of-dicts) | Contiguous Arrays (COO → CSR) | Adjacency List (Rust petgraph) |
| Memory per 1M Nodes, 10M Edges | ~3,500 MB | ~120 MB (float32) | ~180 MB |
| Edge Insertion Latency (10M Edges) | ~14.2s (Heavy GC cycles) | < 0.5s (NumPy array concat) | ~1.5s (Vec push operations) |
| PageRank (10M Edges, 100 iterations) | ~130.0s | ~1.5s (Power Method) | N/A (Algebraic operations sub-optimal) |
| SCC / Cycle Detection Time | ~45.0s | N/A | < 1.0s (Tarjan's Algorithm in C/Rust) |
| Graph Mutability | High (O(1) node insertion) | Low (O(N) re-allocation) | Medium (Append-only optimized) |

These figures point to a basic engineering conclusion: hash tables are the wrong data structure for static, large-scale graph analysis. Moving to contiguous data structures drops memory consumption from ~3,500 MB to ~120 MB and cuts PageRank execution time from over two minutes to ~1.5s.

3. Engineering Transition Strategy: Polyglot Graph Computation

To compute PageRank, Strongly Connected Components (SCC), and Eigenvector centrality without triggering container OOMs, the architecture must specialize. We cannot rely on a single library. Instead, we implement a polyglot graph framework that routes mathematical algorithms to algebraic matrix backends, and traversal algorithms to compiled memory-safe adjacency lists.

Phase A: Spectral / Algebraic Algorithms via SciPy CSR

NetworkX’s nx.pagerank() is poorly suited to graphs of this size. Under the hood, it delegates the mathematical computation to SciPy, but it first has to convert its massive dict representation into a SciPy CSR matrix in a single thread before computation can begin. During that conversion the dict-based graph and the matrix are both resident in memory.

Bypassing NetworkX entirely and natively constructing a Compressed Sparse Row (CSR) matrix drops memory consumption radically.
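As a rough illustration of native construction, the sketch below compiles integer edge arrays straight into a CSR matrix through SciPy's COO format. The function name `build_csr` and the four-page example graph are assumptions made for this example, and unit edge weights in float32 are chosen to match the float32 figure in the table above.

```python
import numpy as np
from scipy.sparse import coo_matrix

def build_csr(src, dst, n_nodes):
    """Compile integer edge arrays into a CSR adjacency matrix (row = source)."""
    src = np.asarray(src, dtype=np.int32)
    dst = np.asarray(dst, dtype=np.int32)
    weights = np.ones(src.shape[0], dtype=np.float32)
    # COO accepts the raw triplets directly; duplicate edges are summed
    # when the matrix is converted to CSR.
    return coo_matrix((weights, (src, dst)), shape=(n_nodes, n_nodes)).tocsr()

# Hypothetical 4-page site: 0 -> 1, 0 -> 2, 1 -> 2, 2 -> 0 (page 3 has no links).
adj = build_csr([0, 0, 1, 2], [1, 2, 2, 0], n_nodes=4)
csr_bytes = adj.data.nbytes + adj.indices.nbytes + adj.indptr.nbytes
print(f"{adj.nnz} edges stored in {csr_bytes} bytes")
```

The whole graph lives in three flat arrays (`data`, `indices`, `indptr`), which is why the memory footprint grows linearly with the edge count rather than with the number of Python objects.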

The Markov Transition Matrix & Dangling Nodes

When calculating PageRank in a raw algebraic format, one must explicitly handle the edge cases that NetworkX usually abstracts away. Dangling nodes (nodes with zero out-degree) act as equity sinks. In SEO graphs, these are often dead-end pages or heavily restricted paginated states. Their rows in the transition matrix are all zeros, so any PageRank that flows into them leaks out of the system at every iteration and the score vector no longer sums to 1. The Markov transition matrix must inject a uniform probability jump for these specific nodes to keep the matrix stochastic.
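The dangling-node correction can be sketched as a plain power iteration over a CSR matrix. This is a minimal illustration under stated assumptions, not the article's production implementation: the function name `pagerank_csr`, the damping factor of 0.85, the tolerance, and the toy graph are all choices made for this example.

```python
import numpy as np
from scipy.sparse import csr_matrix, diags

def pagerank_csr(adj, damping=0.85, tol=1e-10, max_iter=100):
    """Power-method PageRank on a CSR adjacency matrix (row = source page)."""
    n = adj.shape[0]
    out_degree = np.asarray(adj.sum(axis=1)).ravel()
    dangling = out_degree == 0
    # Row-normalize; dangling rows stay all-zero and are handled separately.
    inv_out = np.zeros(n)
    inv_out[~dangling] = 1.0 / out_degree[~dangling]
    transition_t = (diags(inv_out) @ adj).T.tocsr()

    rank = np.full(n, 1.0 / n)
    for _ in range(max_iter):
        # Mass sitting on dangling nodes is redistributed uniformly.
        dangling_mass = rank[dangling].sum()
        new_rank = damping * (transition_t @ rank + dangling_mass / n) + (1.0 - damping) / n
        if np.abs(new_rank - rank).sum() < tol:
            return new_rank
        rank = new_rank
    return rank

# Toy graph: 0 -> 1, 0 -> 2, 1 -> 2, 2 -> 3; page 3 is a dangling node.
adj = csr_matrix(([1.0, 1.0, 1.0, 1.0], ([0, 0, 1, 2], [1, 2, 2, 3])), shape=(4, 4))
rank = pagerank_csr(adj)
print(rank, rank.sum())
```

Without the `dangling_mass` term the vector would lose mass on every pass through page 3. With it, the scores remain a probability distribution.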

Phase B: Topological Algorithms via Rustworkx

Algebraic matrices are highly efficient for power iteration, but they are incredibly inefficient for deep path traversal. For cycle detection (identifying massive redirect loops) and isolating orphaned clusters, the pipeline must utilize a topological representation.

rustworkx handles this seamlessly by wrapping Rust’s highly optimized petgraph crate.

The Integer Trade-off

Rustworkx addresses nodes strictly by integer index. Because we are stripping out the string-keyed dictionary wrappers, the pipeline must maintain an external, highly optimized hash map to translate URL strings to integer IDs. Either Rust’s hashbrown exposed through Python bindings or a carefully managed Python dict that maps URLs to integers is required to track URL-to-ID mappings before passing the raw integers to the Rustworkx graph builder.
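The plain-dict variant of that mapping might look like the sketch below. The class name `UrlIndex`, its methods, and the example URLs are hypothetical. IDs are assigned densely in first-seen order so they can be used directly as matrix rows or graph node indices.

```python
class UrlIndex:
    """Assigns dense integer IDs to URLs in first-seen order."""

    def __init__(self):
        self._ids = {}    # URL string -> integer ID
        self._urls = []   # integer ID -> URL string

    def get_id(self, url):
        node_id = self._ids.get(url)
        if node_id is None:
            node_id = len(self._urls)
            self._ids[url] = node_id
            self._urls.append(url)
        return node_id

    def get_url(self, node_id):
        return self._urls[node_id]

    def __len__(self):
        return len(self._urls)

index = UrlIndex()
raw_links = [
    ("https://example.com/", "https://example.com/a"),
    ("https://example.com/a", "https://example.com/b"),
    ("https://example.com/b", "https://example.com/"),
]
# Only integer pairs are handed on to the graph backends.
edges = [(index.get_id(src), index.get_id(dst)) for src, dst in raw_links]
print(edges, len(index))
```

Keeping the reverse list alongside the dict makes it cheap to translate result IDs back into URLs once the analysis is finished.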

4. Headless Crawl Pipeline Integration Patterns

To ingest high-throughput crawl logs (e.g., >5,000 URLs/sec) into an SEO intelligence pipeline, we must implement an in-memory streaming reduction directly into CSR and Rustworkx.

Data Ingestion & Bypassing NetworkX

Do not stream edges iteratively into a graph object. Stream raw integer tuples into chunked arrays, utilizing Polars for zero-copy memory efficiency, and perform bulk graph compilation at the end of the ingestion phase.
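The chunk-then-compile pattern can be sketched as follows. To stay dependency-light, this example swaps Polars for plain NumPy buffers, so it illustrates the ingestion shape rather than the Polars API. The class name `EdgeBuffer` and the chunk size are assumptions.

```python
import numpy as np
from scipy.sparse import coo_matrix

class EdgeBuffer:
    """Accumulates (src, dst) integer pairs in fixed-size NumPy chunks."""

    def __init__(self, chunk_size=1_000_000):
        self.chunk_size = chunk_size
        self._chunks = []
        self._current = np.empty((chunk_size, 2), dtype=np.int32)
        self._fill = 0

    def add(self, src, dst):
        self._current[self._fill] = (src, dst)
        self._fill += 1
        if self._fill == self.chunk_size:
            # Seal the full chunk and start a fresh one.
            self._chunks.append(self._current)
            self._current = np.empty((self.chunk_size, 2), dtype=np.int32)
            self._fill = 0

    def compile(self, n_nodes):
        """Bulk-build the CSR matrix once, after ingestion has finished."""
        parts = self._chunks + [self._current[: self._fill]]
        edges = np.concatenate(parts)
        data = np.ones(len(edges), dtype=np.float32)
        return coo_matrix((data, (edges[:, 0], edges[:, 1])), shape=(n_nodes, n_nodes)).tocsr()

buffer = EdgeBuffer(chunk_size=2)  # tiny chunk size, only for the demo
for src, dst in [(0, 1), (0, 2), (1, 2), (2, 0), (3, 0)]:
    buffer.add(src, dst)
adj = buffer.compile(n_nodes=4)
print(adj.nnz)
```

No graph object exists while the crawl is streaming. The only allocations are preallocated integer chunks, and the sparse matrix is built in a single bulk step.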

Exposing Hidden SEO Equity Sinks & Orphaned Clusters

Once the graphs are compiled in their respective memory-safe backends, the pipeline can execute analytical queries to flag severe architectural SEO defects at speeds impossible in standard Python graph environments.

A. Eigenvector Centrality & PageRank Sinks (SciPy)

The goal of this algebraic query is to find URLs with heavy internal linking (high in-degree) but low actual equity (PageRank). These structural traps dilute link equity across the site.
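One way to express this query is a percentile filter over the in-degree and PageRank vectors. The sketch below is illustrative only: the function name `find_equity_sinks` and both percentile thresholds are assumptions, and the `rank` values are made-up placeholders rather than scores computed from the example graph.

```python
import numpy as np
from scipy.sparse import csr_matrix

def find_equity_sinks(adj, rank, in_degree_pct=90, rank_pct=50):
    """Return node IDs whose in-degree is high but whose PageRank is low."""
    # Column sums of a row=source adjacency matrix give the in-degree.
    in_degree = np.asarray(adj.sum(axis=0)).ravel()
    high_in = in_degree >= np.percentile(in_degree, in_degree_pct)
    low_rank = rank <= np.percentile(rank, rank_pct)
    return np.flatnonzero(high_in & low_rank)

# Hypothetical graph: pages 0..3 all link to page 4, and page 4 links to page 0.
src = [0, 1, 2, 3, 4]
dst = [4, 4, 4, 4, 0]
adj = csr_matrix((np.ones(5), (src, dst)), shape=(5, 5))
rank = np.array([0.40, 0.20, 0.20, 0.15, 0.05])  # placeholder scores
sinks = find_equity_sinks(adj, rank)
print(sinks)
```

In a real pipeline the thresholds would need tuning per site, since in-degree distributions on large crawls tend to be heavily skewed.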

B. Orphaned Clusters & Deep Cycles (Rustworkx)

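The goal of this topological query is to identify isolated subgraphs that are functionally unreachable from the homepage seed. A forward traversal from the homepage identifies every reachable page, and Tarjan’s Strongly Connected Components algorithm groups the remaining pages into mutually linked clusters and cycles.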

5. Tuning Container Runtime Configurations

Transitioning the data structures resolves the core memory bloat, but high-performance analytics within tightly constrained Kubernetes environments requires direct intervention at the OS and runtime level.

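One such setting caps the OpenBLAS and OpenMP thread pools, for example export OPENBLAS_NUM_THREADS=4 and export OMP_NUM_THREADS=4, so that native numerical libraries do not spawn more threads than the container's CPU allocation supports.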
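When the container entrypoint cannot be edited, the same thread caps can be applied from Python. This is a small sketch with a hypothetical helper name, `cap_native_threads`. The variables are read when the native libraries load, so the call has to happen before NumPy or SciPy are first imported.

```python
import os

def cap_native_threads(n_threads):
    """Set thread-pool limits for OpenBLAS- and OpenMP-backed libraries."""
    for var in ("OPENBLAS_NUM_THREADS", "OMP_NUM_THREADS"):
        os.environ[var] = str(n_threads)

# Value taken from the exports above; call this before importing NumPy/SciPy.
cap_native_threads(4)
print(os.environ["OPENBLAS_NUM_THREADS"], os.environ["OMP_NUM_THREADS"])
```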

Structural Optimization vs. Horizontal Scaling

Memory optimization at this level is not a simple library replacement. It is an architectural refactor. Swapping NetworkX for another import without redesigning ingestion patterns, memory layout, and algorithm routing will only shift the bottleneck—not remove it.

Enterprise-scale graph processing demands intentional separation of concerns: contiguous algebraic computation for spectral methods, compiled adjacency structures for traversal, and disciplined runtime configuration at the container layer. Without that structural alignment, scaling compute horizontally becomes an expensive bandage rather than a permanent solution.

This is where specialized engineering intervention becomes critical.

Performance Audit and Specialized Engineering

Migrating an enterprise analytical pipeline off a legacy framework like NetworkX requires more than just replacing library imports; it necessitates a fundamental restructuring of how memory is allocated, serialized, and computed. Azguards Technolabs partners with enterprise engineering and data science teams to execute these exact transitions.

We provide Performance Audits and Specialized Engineering to directly address deep infrastructure bottlenecks. We architect Python analytical pipelines that rely on contiguous memory spaces, zero-copy serialization, and compiled backend extensions to bypass the limitations of the Global Interpreter Lock (GIL) and standard heap management.

When your data volume outstrips your foundational stack, scaling compute horizontally is an expensive, temporary bandage. The permanent solution is structural memory optimization.

If your team is experiencing arbitrary pipeline failures, cgroup OOM limits on critical analytical workloads, or unacceptable latencies in SEO logic processing, contact Azguards Technolabs for an architectural review and complex system implementation.
