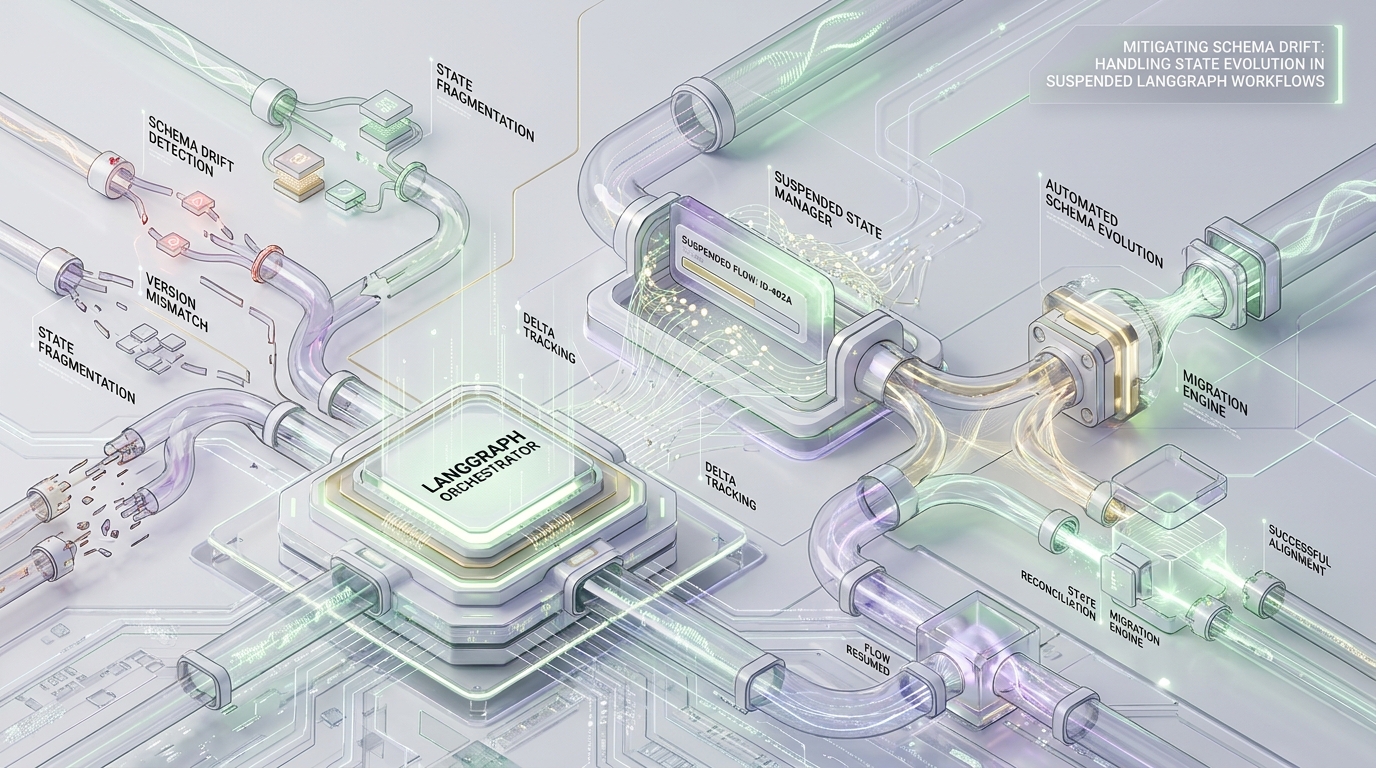

The Orphaned Thread Crisis: Managing Schema Drift in Suspended LangGraph Workflows

In modern Human-in-the-Loop (HITL) architectures, deterministic workflow execution depends on the ability to suspend operations indefinitely. When a LangGraph thread yields to await human input or an external trigger, the underlying Pregel engine performs a critical operation: it persists a checkpoint of the graph's state (the same state callers later read back as a StateSnapshot) through a checkpointer such as PostgresSaver. The checkpoint is serialized and written to binary storage, typically a PostgreSQL bytea column.

Under static conditions, this persistence mechanism is rock-solid. But enterprise environments are dynamic. The “Orphaned Thread Crisis” occurs when a CI/CD pipeline ships an updated Pydantic State schema while workflows are still suspended in the database. When the system attempts to hydrate legacy bytes into a newly deployed model, validation fails, and the thread is permanently orphaned.

Here is how to decouple deserialization from strict type-checking and engineer resilient state migrations that keep your agentic workflows alive through aggressive deployment cycles.

Execution Crash Profile & Hard Limits

Understanding the exact anatomy of the schema drift crash is non-negotiable. When drift occurs during state hydration, the Pregel engine terminates execution with a specific, reproducible Pydantic validation trace.

Exception Trace:

The most dangerous aspect of this failure is not the error itself but where it occurs. The traceback originates in langgraph/pregel/__init__.py during the get_state phase. Because the exception is raised while the graph initializes and reconstructs its state, rather than during active node execution, it is never handled by LangGraph's default node-level retry policies. The system will not back off; it will crash immediately on every resumption attempt.
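The failure mode itself is easy to reproduce with Pydantic alone, outside LangGraph. The sketch below assumes a hypothetical v1 state that stored its history under `messages` and a v2 model that renamed the field to `conversation`; `AgentStateV2` and `resume_attempts` are illustrative names. Because the failure is deterministic, every retry produces the same validation error, which is why backing off cannot recover the thread.

```python
from pydantic import BaseModel, ValidationError


class AgentStateV2(BaseModel):
    """Hypothetical v2 schema: v1 kept history under "messages", v2 requires "conversation"."""

    conversation: list[str]


# Stands in for the dict a v1 checkpoint decodes to.
legacy_payload = {"messages": ["hello", "world"]}


def resume_attempts(payload: dict, attempts: int = 3) -> list[str]:
    """Try to hydrate the payload several times and collect each failure."""
    failures = []
    for _ in range(attempts):
        try:
            AgentStateV2.model_validate(payload)
        except ValidationError as exc:
            first = exc.errors()[0]
            # loc is the failing field path, type is Pydantic's error code.
            failures.append(f"{first['loc']}: {first['type']}")
    return failures


if __name__ == "__main__":
    for failure in resume_attempts(legacy_payload):
        print(failure)
```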

System Constraints & Operational Boundaries

Before engineering a mitigation strategy, you must design around the hard boundaries of the underlying persistence and serialization libraries.

OOM (Out of Memory) Amplification: Although a PostgreSQL bytea value can hold up to 1GB, your practical limits are much lower. A dense legacy checkpoint (for example, 20MB of raw ormsgpack bytes holding a large multi-agent message history) will routinely expand 10x-15x in memory (200MB-300MB) once deserialized, because every decoded dict, list, and string becomes a separate Python object with its own header and pointer overhead. If your schema migration logic duplicates this dictionary in memory to remap keys, you risk immediate container OOM termination under concurrent resumption loads.
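One way to keep a migration from doubling peak memory is to remap keys in place on the dict the deserializer already produced, instead of building a migrated deep copy. The sketch below uses Python's `tracemalloc` to compare the two approaches on a synthetic state; the key names (`messages` to `conversation`), the payload shape, and the helper names are illustrative assumptions, and absolute numbers will vary by interpreter and payload.

```python
import copy
import tracemalloc
from typing import Callable


def make_state(n: int = 20_000) -> dict:
    """Synthetic legacy state: a long message history under a v1 key."""
    return {"messages": [{"role": "user", "content": f"msg {i}"} for i in range(n)]}


def migrate_by_copy(state: dict) -> dict:
    # Duplicates every nested container (dicts and lists) before renaming the key.
    new_state = copy.deepcopy(state)
    new_state["conversation"] = new_state.pop("messages")
    return new_state


def migrate_in_place(state: dict) -> dict:
    # Moves the existing list reference to the new key; nothing nested is copied.
    if "messages" in state and "conversation" not in state:
        state["conversation"] = state.pop("messages")
    return state


def peak_bytes(migrate: Callable[[dict], dict], state: dict) -> int:
    """Peak bytes allocated while `migrate` runs; `state` is built before tracing starts."""
    tracemalloc.start()
    try:
        migrate(state)
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return peak


if __name__ == "__main__":
    print("deepcopy peak bytes:", peak_bytes(migrate_by_copy, make_state()))
    print("in-place peak bytes:", peak_bytes(migrate_in_place, make_state()))
```

The in-place variant mutates the caller's dict, which is exactly the point: the decoded object is reused rather than mirrored.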

Buffer Depth Limits: The underlying JsonPlusSerializer relies heavily on ormsgpack for byte translation. Deeply nested legacy states, such as recursive JSON scratchpads or deeply nested agent reasoning trees, that exceed a hard nesting depth of 512 will fail outright during both serialization and deserialization. State migrations must not flatten and rebuild objects that exceed this depth without custom parsing.
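Before handing a migrated state back to the serializer, you can measure its nesting depth iteratively, so the check itself cannot hit Python's own recursion limit. `MAX_DEPTH = 512` mirrors the figure cited above and is an assumption to verify against the ormsgpack version you actually run; `nesting_depth` and `check_depth` are hypothetical helpers, not part of LangGraph.

```python
from typing import Any

MAX_DEPTH = 512  # figure cited in the article; confirm against your ormsgpack version


def nesting_depth(obj: Any) -> int:
    """Deepest level of nested dicts/lists/tuples; scalars add no depth.

    Iterative, so very deep states cannot trip Python's own recursion limit.
    Assumes the state is acyclic (a cycle would loop forever here).
    """
    deepest = 0
    stack: list[tuple[Any, int]] = [(obj, 1)]
    while stack:
        node, depth = stack.pop()
        if isinstance(node, dict):
            children = list(node.values())
        elif isinstance(node, (list, tuple)):
            children = list(node)
        else:
            continue  # scalar leaf
        deepest = max(deepest, depth)
        stack.extend((child, depth + 1) for child in children)
    return deepest


def check_depth(state: Any, limit: int = MAX_DEPTH) -> int:
    """Raise before serialization instead of failing inside the encoder."""
    depth = nesting_depth(state)
    if depth > limit:
        raise ValueError(f"state nesting depth {depth} exceeds limit {limit}")
    return depth
```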

Security Strictness: In hardened environments, setting LANGGRAPH_STRICT_MSGPACK=true is standard practice to prevent Remote Code Execution (RCE) attacks via arbitrary object injection during hydration. This variable forces the system to strictly adhere to a built-in allowlist of safe types.

Consequently, if your schema drift involves renaming custom classes, the legacy objects will be rejected at the ormsgpack level, before Pydantic is even invoked.
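To see why a rename breaks hydration before Pydantic runs, consider a toy model of allowlist-based type resolution. This is not LangGraph's implementation: `ALLOWED_TYPES`, `resolve_type`, and `DisallowedTypeError` are hypothetical, and stdlib classes stand in for your own state classes. The stored payload records a module path and class name; once the class is renamed, the recorded pair no longer matches anything allowed, so decoding stops at this step.

```python
import importlib

# Hypothetical allowlist of (module, class name) pairs a strict decoder may rebuild.
# Stdlib classes stand in for your own state classes.
ALLOWED_TYPES = {("collections", "OrderedDict"), ("decimal", "Decimal")}


class DisallowedTypeError(Exception):
    """Raised when a payload references a class outside the allowlist."""


def resolve_type(module: str, name: str) -> type:
    """Strictly resolve a class reference recorded in a serialized payload."""
    if (module, name) not in ALLOWED_TYPES:
        raise DisallowedTypeError(f"{module}.{name} is not on the allowlist")
    return getattr(importlib.import_module(module), name)


# A checkpoint written before a class rename still records the old path.
legacy_reference = ("collections", "LegacyOrderedDict")
current_reference = ("collections", "OrderedDict")
```

Even with the allowlist relaxed, the old name would not exist on the module anymore, so in this model the payload has to be remapped before resolution rather than validated after it.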

Mitigation Strategy I: Dual-Read/Single-Write Pydantic Hydration (Application Layer)

When your schema drift is localized to field additions, subtractions, or type coercions within the same class structures, the most resilient pattern is managing it at the application layer. Instead of writing custom database migration scripts, we leverage Pydantic’s @model_validator(mode='before').

This interceptor acts directly on the raw dictionary emitted by JsonPlusSerializer.loads_typed, before Pydantic's core validation enforces strict type-checking. This enables Just-In-Time (JIT) state migrations. We call this a Dual-Read/Single-Write architecture: the validator reads both legacy (v1) and current (v2) dictionary structures, mutates the legacy payload into the modern schema in memory, and ensures that the next checkpoint saved to the persistence layer contains only the modern schema.

By intercepting the payload at mode='before', we sidestep the ValidationError. The thread successfully hydrates, resumes execution, and upon the next node completion, writes a clean v2 bytea snapshot back to the database.
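A minimal sketch of the dual-read validator, assuming a hypothetical v1 schema that stored history under `messages` and `tags` as a comma-separated string, and a v2 schema with `conversation` and `tags: list[str]`. The `schema_version` field is also an assumption; any reliable discriminator works. The validator mutates the incoming dict in place (so the caller's dict changes too) rather than copying it, which keeps it within the memory constraints described above.

```python
from typing import Any

from pydantic import BaseModel, Field, model_validator

CURRENT_VERSION = 2


class AgentState(BaseModel):
    """Hypothetical v2 state schema."""

    schema_version: int = CURRENT_VERSION
    conversation: list[str] = Field(default_factory=list)
    tags: list[str] = Field(default_factory=list)

    @model_validator(mode="before")
    @classmethod
    def upgrade_legacy_payload(cls, data: Any) -> Any:
        # Non-dict input (e.g. an existing AgentState instance) passes through untouched.
        if not isinstance(data, dict):
            return data
        # Dicts without a version marker are treated as v1.
        if data.get("schema_version", 1) >= CURRENT_VERSION:
            return data
        # Dual-read: move the v1 key to its v2 name in place (no deep copy).
        if "messages" in data and "conversation" not in data:
            data["conversation"] = data.pop("messages")
        # v1 stored tags as "a, b"; v2 expects a list of strings.
        if isinstance(data.get("tags"), str):
            data["tags"] = [t.strip() for t in data["tags"].split(",") if t.strip()]
        # Single-write: stamp the payload so anything serialized next is v2.
        data["schema_version"] = CURRENT_VERSION
        return data
```

Because every validated instance comes out in the v2 shape, a v1 payload and a v2 payload both hydrate into the same model, and only v2 field names remain to be serialized.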

Mitigation Strategy II: Custom SerializerProtocol Interceptor (Persistence Layer)

Application-layer validation is highly effective for field drift inside a model, but it is insufficient for deeper structural drift. If your deployment renames core channels, removes deprecated channels entirely, or migrates from legacy TypedDict state to Pydantic generic types, the graph engine's internal channel mapping will fail before your model validators are ever triggered.

In these severe cases, you must intercept the persistence payload at the lowest possible level: the SerializerProtocol.

A LangGraph Checkpoint object is not just raw user state; it is a dictionary that wraps the user's channel_values in structural metadata, including v (format version), ts (timestamp), id, and channel_versions. Wrapping the native JsonPlusSerializer lets you target and mutate channel_values before LangGraph attempts channel routing and validation.

To apply this, pass the custom serializer to your checkpointer at initialization: PostgresSaver(conn, serde=MigratingSerializer(target_version=2)). This ensures that the Pregel engine only ever sees state objects structured for the currently deployed graph topology.

Mitigation Strategy III: Forward-Compatible Reducers for pending_writes

The final vector for schema drift crashes lies in the execution graph's intermediate layers. When a workflow is suspended mid-super-step (for example, on a branching HITL execution path), LangGraph cannot save a finalized state. Instead, it saves the uncommitted state updates to a separate checkpoint_writes table in the database.

If a CI/CD schema update lands while these intermediate writes are pending, the legacy updates are passed straight to your reducer functions when the thread resumes. If the newly deployed reducer expects a new input shape (e.g., a string rather than a dictionary), execution crashes instantly.

Reducers must be architected to be forward-compatible. Apply defensive type-checking to payloads that were persisted via put_writes so that orphaned fragments of legacy state are absorbed rather than rejected.

By ensuring your reducers natively absorb deprecated payload structures, you secure the graph against edge-case crashes that occur strictly between node step executions.
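A sketch of a forward-compatible reducer, assuming a hypothetical `notes` channel whose v1 nodes wrote dicts like `{"text": ..., "author": ...}` while v2 nodes write plain strings. `merge_notes` and `_normalize_note` are illustrative names; attaching a reducer through `Annotated[..., reducer]` on a TypedDict key is the pattern LangGraph uses for state channels.

```python
from typing import Annotated, Any, Optional, TypedDict


def _normalize_note(item: Any) -> str:
    """Coerce one note fragment, legacy or current, into the v2 shape (a string)."""
    if isinstance(item, str):
        return item
    if isinstance(item, dict):
        # Hypothetical v1 shape left behind in pending writes: {"text": ..., "author": ...}
        return str(item.get("text", ""))
    if item is None:
        return ""
    return str(item)


def merge_notes(current: Optional[list[str]], update: Any) -> list[str]:
    """Forward-compatible reducer: accepts one note, a list of notes,
    or legacy dict fragments, and always returns a list of strings."""
    merged = list(current or [])  # never mutate the previous channel value
    items = update if isinstance(update, list) else [update]
    for item in items:
        note = _normalize_note(item)
        if note:
            merged.append(note)
    return merged


class State(TypedDict):
    # LangGraph picks up the reducer from the Annotated metadata on the key.
    notes: Annotated[list[str], merge_notes]
```

The reducer never raises on an unexpected shape; it degrades legacy fragments to the closest v2 representation and drops empty ones.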

Performance & Boundary Benchmarks: Before vs After

Implementing these JIT interception strategies changes the operational profile of your state hydration. Based on the system constraints and memory amplification figures above, unmanaged drift and the engineered mitigation approach compare as follows:

| Metric / Constraint | Unmanaged Hydration (Default) | JIT Migration Strategy (Intercepted) |
|---|---|---|
| Recovery from Field Changes | 0% (Fatal Pydantic Crash) | 100% via @model_validator(mode='before') |
| Recovery from Channel Renames | 0% (Graph Initialization Crash) | 100% via SerializerProtocol Intercept |
| OOM Amplification Risk | High (20MB bytes -> 200MB-300MB overhead) | Controlled (Direct mutation avoids deep-copy duplication) |
| Depth Limit Support | Capped at 512 nesting levels | Retains 512 hard limit via ormsgpack |
| Security Adherence | Fails on renamed objects if LANGGRAPH_STRICT_MSGPACK=true | Compliant (Intercepts schema before strict Pydantic validation) |

The architectural takeaway is clear: running migrations at the dictionary parsing layer (loads_typed or mode='before') bypasses the fatal errors of strict type-checking while avoiding the Python object overhead of deep-copying large state histories.


Azguards Technolabs

