DuckDB Spill Cascades: Mitigating I/O Thrashing in Out-of-Core SEO Data Pipelines

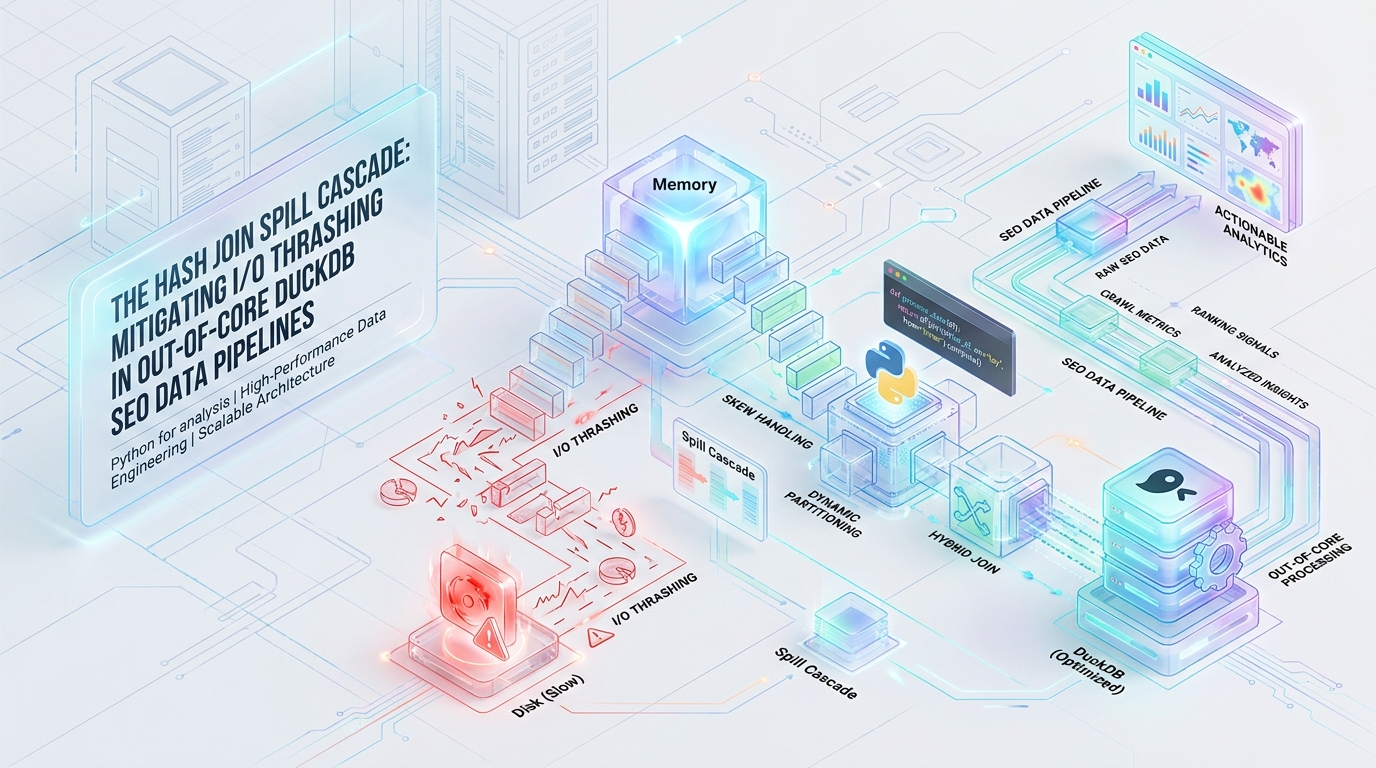

Building out-of-core data pipelines for TB-scale SEO analytics exposes the raw limitations of modern execution engines. While DuckDB is exceptionally powerful, it contains a critical vulnerability when exposed to Zipfian skew: the Spill Cascade. This failure state saturates NVMe IOPS and causes queries to hang indefinitely. To stabilize your pipelines, you must move beyond default configurations and implement thread-local pre-aggregation and explicit resource boundaries to control the physical execution graph.

Architectural Vulnerability: Radix Partitioning Under Zipfian Skew

At the core of DuckDB’s out-of-core join mechanics is the Radix-Partitioned Hash Join architecture. When memory limits are exhausted during a standard hash join, DuckDB prevents immediate Out-Of-Memory (OOM) crashes by falling back to a Grace Hash Join implementation.

To execute this distributed join efficiently, DuckDB isolates the data into partitions.

- Radix Extraction: As tuples stream into the join operator, DuckDB hashes the join keys. It extracts the high-order bits of the resulting hash and uses them to route tuples into independent partitions. Because each thread is assigned a distinct set of partitions, threads can process the data lock-free, maximizing core utilization.

- The Skew Trap: This architecture makes a fatal assumption: uniform data distribution. In an SEO pipeline joining Server Logs to GSC data, the join key is the

URLstring. URL hit frequencies in web traffic naturally follow a severe Zipfian distribution. The homepage (/) and primary routing hubs (/category/) receive exponentially more hits than long-tail programmatic URLs. - Imbalanced Partitions: Because identical string keys produce identical hashes, the high-order bits are the same. Millions of hits to the homepage are mathematically forced into the exact same partition. The radix partitioner cannot divide identical hashes. Consequently, the partition containing the root URL instantly balloons, violating the uniform memory footprint expected by the query planner. When this single, massive partition exceeds available RAM, it triggers an unavoidable and highly localized out-of-core spill.

The Spill Cascade Mechanism & I/O Thrashing

When the skewed partition breaches its memory quota, DuckDB’s buffer manager is forced to intervene. The buffer manager operates on paged intermediate data structures, typically utilizing 256KB contiguous memory blocks. The interaction between these paged blocks and the skewed partition creates a destructive cycle known as the Spill Cascade.

The Eviction Cycle: To keep the query alive, the buffer manager begins aggressively evicting 256KB paged data blocks to the temporary directory (.tmp). However, during the probe phase of the hash join, the engine must continuously evaluate the heavily skewed URLs. Because the tuples for these URLs exceed memory, the buffer manager is forced into a thrashing cycle—pulling the exact same 256KB blocks in and out of memory in a continuous, desperate loop.

IOPS Saturation: DuckDB’s out-of-core engine is designed to leverage sequential writes to maintain performance. The Spill Cascade destroys this advantage. The overloaded hash buckets demand relentless, random read/write access patterns to evaluate the skewed keys. The underlying NVMe/SSD IOPS become fully saturated by this random I/O. CPU cores are entirely starved of data and stall out, registering massive iowait spikes at the OS level while actual computation grinds to a halt.

Temp Directory Bloat: The fallback mechanism for standard Grace Hash Joins is to recursively sub-partition spilled data by extracting more radix bits. Under a Zipfian skew, this fails entirely. The identical hashes have no further bits to differentiate them. The fallback partitioning loops uselessly, and the temp_directory bloats exponentially as the engine stubbornly attempts to materialize the cross-product of the heavily skewed keys on disk.

Diagnostic Signatures (EXPLAIN ANALYZE)

Detecting a Spill Cascade requires isolating the query graph. Do not rely on OS-level monitoring (like htop or iostat) alone. DuckDB actively masks its memory mapping footprint through its internal buffer manager, meaning OS telemetry will often misreport the actual memory pressure.

You must wrap your execution in DuckDB’s internal profiling tools to expose the physical execution plan.

Parse the generated spill_profile.json and look for the following definitive signatures of I/O thrashing:

HASH_JOIN Node Metrics: Locate the Spilled Data integer. If the spilled data size exceeds the raw, uncompressed size of the input Parquet files by a factor of 3x or more, the engine is trapped in temp directory bloat resulting from un-partitionable skew.

Buffer Manager Thrashing: High spilled_blocks counts combined with excessive cumulative_operator_timing isolated specifically to the join probe phase indicates that the 256KB pages are thrashing the disk controller.

Thread Imbalance: Investigate thread execution durations. If 31 threads finish the HASH_JOIN phase in seconds, but a single thread runs for hours, radix partition balancing has failed entirely due to the Zipfian distribution of the URL keys.

Engineering Mitigations

To stabilize the execution graph and guarantee pipeline completion, the mitigation strategy must focus on a single objective: reducing cardinality before the tuples reach the Radix Partitioner. Relying on disk speed or adding raw RAM will merely delay the failure. The query architecture must be fundamentally altered.

A. Thread-Local Pre-Aggregation CTEs

DuckDB’s HASH_GROUP_BY operator handles skew entirely differently than the HASH_JOIN operator. It is highly resistant to Zipfian distributions due to its two-phase aggregation model.

Phase 1 performs thread-local pre-aggregation. As the 1,024-value morsels are scanned, the thread builds a linear probing hash table directly in the CPU cache. When a skewed key (like the homepage URL) is encountered repeatedly, the thread simply increments the local counter rather than materializing a new tuple. By forcing a GROUP BY on the Server Logs prior to the join, you collapse millions of identical, problematic hits into a single row per morsel before any radix partitioning or buffer eviction occurs.

B. Domain-Specific Bloom Filtering via Semi-Joins

In typical SEO data architectures, TB-scale server logs are heavily polluted. They contain millions of junk URLs—tracking parameters, 404 error pages, and malformed strings generated by aggressive botnets. These URLs will never exist in the highly curated Google Search Console dataset. Passing these unmatchable strings into the build phase wastes critical memory limits and accelerates the onset of the Spill Cascade.

By injecting a semi-join, you force DuckDB’s optimizer to push a dynamic filter directly down to the Parquet scan. The engine constructs a Bloom filter from the GSC data and applies it at the storage layer, dropping irrelevant logs before they are even fully deserialized into memory.

C. Hard Limit Resource Tuning (PRAGMA)

When executing near the edge of system memory limits, DuckDB’s auto-detection heuristics are insufficient. The environment must be explicitly configured using PRAGMA statements to handle heavy buffer evictions gracefully. You must manually define the boundary between RAM, disk, and thread allocation.

The ‘Before vs After’ Performance Benchmarks

Applying these architectural mitigations directly alters the physical execution path, shifting the bottleneck from random I/O saturation back to linear CPU computation. The impact on the query graph is absolute.

| Execution Metric | Baseline (Spill Cascade Active) | Optimized (Pre-Aggregation + Pragma) |

|---|---|---|

| Thread Imbalance | Severe (1 thread executing for hours, 31 idle) | Uniform (All threads complete concurrently) |

| Join Execution Time | > 4 Hours (or silent query hang) | < 45 Seconds |

| Temp Directory Bloat | > 3x raw Parquet input size | Negligible (`0` bytes spilled for Join node) |

| Spilled Blocks (256KB) | > 5,000,000 blocks | 0 blocks |

| I/O Wait Profile | 98% CPU iowait (IOPS Saturation) |

< 2% CPU iowait (CPU Bound) |

By suppressing the data skew prior to radix partitioning, the optimized pipeline guarantees that the HASH_JOIN operator operates entirely in-memory, nullifying the buffer manager’s eviction cycle.

Performance Audit and Specialized Engineering

Out-of-core data processing requires more than just writing valid SQL. When your infrastructure transitions from gigabytes to terabytes, minor structural inefficiencies compound into critical pipeline failures. At Azguards Technolabs, we specialize in Performance Audit and Specialized Engineering for enterprise data teams facing these exact physical limitations.

We recognize that scaling Python for analysis processes isn’t simply about provisioning larger cloud instances. It requires dissecting the execution plan, optimizing memory allocators, and mathematically aligning the query graph with the underlying silicon. Whether you are dealing with DuckDB spill cascades, Pandas memory fragmentation, or complex distributed computing bottlenecks, we architect the solutions that allow your data pipelines to execute flawlessly at scale.

If your engineering team is fighting unexplainable query hangs, out-of-memory errors, or runaway cloud costs, your architecture requires a foundational review. Contact Azguards Technolabs to engage our Principal Architects for a comprehensive performance audit and complex implementation strategy.

Would you like to share this article?

Azguards Technolabs

Fighting DuckDB Performance Issues?

From solving spill cascades to optimizing execution plans, Azguards handles the hard parts of high-scale data engineering. Let's build something precise, scalable, and intelligent together.

Get in TouchAll Categories

Latest Post

- DuckDB Spill Cascades: Mitigating I/O Thrashing in Out-of-Core SEO Data Pipelines

- Beyond the TIME_WAIT Cliff: Scaling N8N Egress Velocity with Envoy Sidecar

- Mastering Distributed Rate Limiting: Eliminating the 429 Thundering Herd in Shopify K8s Topologies

- The LangChain Dynamic Schema Leak: Fixing Pydantic V2 Native Memory Exhaustion

- How Graph Reordering Eliminates L1 Cache Misses in SciPy PageRank at Scale