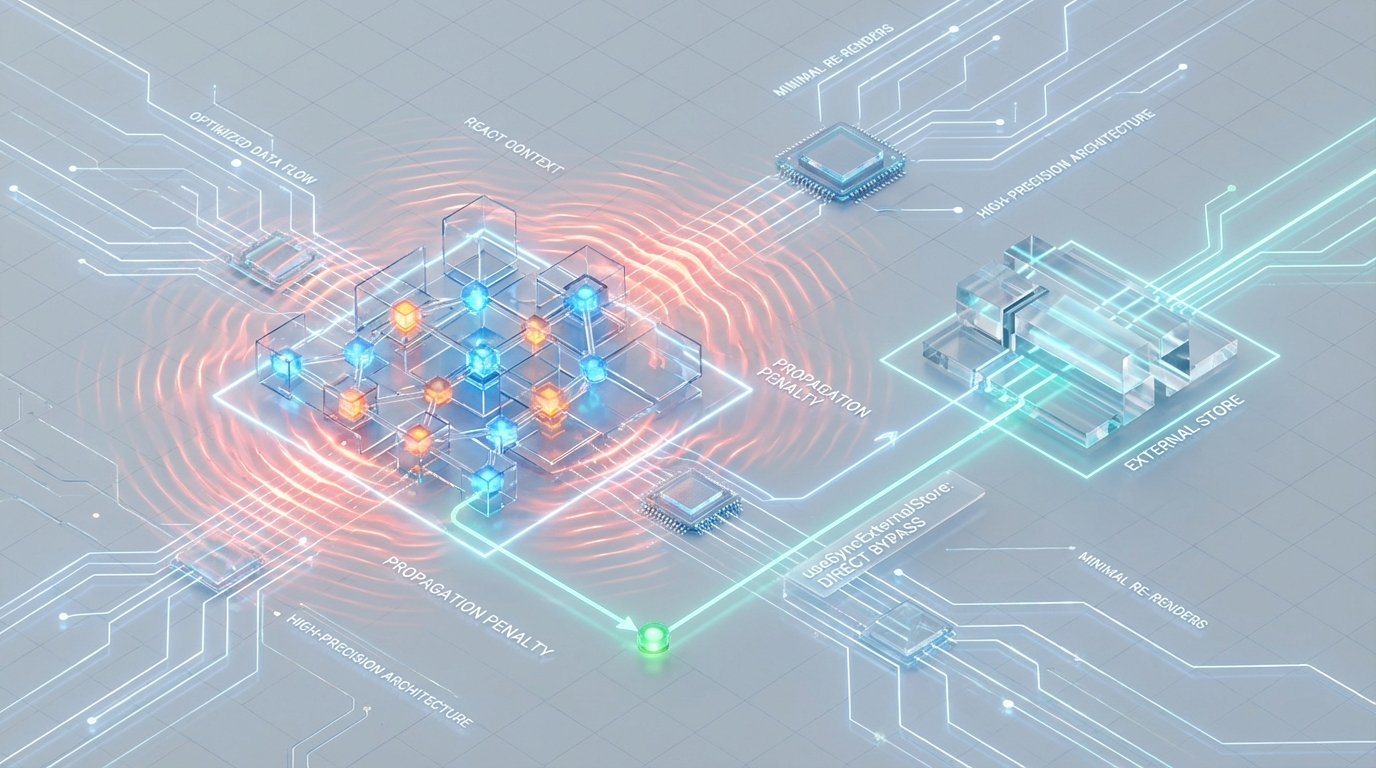

The Propagation Penalty: Bypassing React Context Re-renders via useSyncExternalStore

1. The Situation: Context as the De Facto Store

In modern React architecture, the Context API has been misappropriated. It was originally designed for dependency injection: passing stable references such as themes, localization strings, or authentication tokens down the component tree. It has since morphed into a default engine for global state management.

For low-velocity data, this pattern is acceptable. However, for high-frequency updates (telemetry dashboards, real-time collaboration tools, or stock tickers), Context introduces a severe performance bottleneck, referred to here as the Propagation Penalty.

As a Staff Engineer or Architect, you have likely observed the symptoms: input delay on form fields, dropped frames during animations, and heavy main-thread congestion, all while the DOM updates appear minimal. The culprit is not the Commit Phase; it is the Render Phase.

This article dissects the mechanical failure of React Context for high-frequency state and details the architectural migration to useSyncExternalStore (uSES), the hook React 18+ provides for tearing-free reads from external stores and the foundation for O(1), selector-based re-rendering.

2. The Mechanics of Failure: Context Thrashing

To understand why Context fails at scale, we must look at the Fiber reconciler.

When a Context Provider receives a new value prop (one that fails an Object.is comparison with the previous value), React runs a traversal that is distinctly different from standard prop propagation. It walks the Provider's subtree, finds every fiber that lists this context as a dependency, and schedules work on it. In current Fiber internals this is done by marking render Lanes on the consumer and its ancestors; earlier releases also carried an experimental changedBits hint.

The Bypass Mechanism

Normally, a component wrapped in React.memo will bail out of rendering if its props are referentially stable. Context bypasses this protection.

When the reconciler encounters a fiber with a context dependency, it marks that fiber as having a “pending update.” This forces the component to re-render, effectively ignoring the shouldComponentUpdate or memo bailout strategy.

The “Everything” Problem

Native Context lacks a selector API. Consider a context value shaped like this:
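A minimal sketch of such a shape, assuming only the two fields the discussion names (currentUser and tickerValue). The field types and the applyTick helper are illustrative assumptions, not part of any library:

```typescript
// Hypothetical shape: only `currentUser` and `tickerValue` come from the text;
// the field types are assumptions for illustration.
interface AppState {
  currentUser: { id: string; displayName: string };
  tickerValue: number;
}

// A tick has to produce a new top-level object, otherwise the Provider would
// not see a changed `value` and no consumer would update.
function applyTick(state: AppState, tickerValue: number): AppState {
  return { ...state, tickerValue };
}

const prevState: AppState = {
  currentUser: { id: "u1", displayName: "Ada" },
  tickerValue: 100,
};
const nextState = applyTick(prevState, 101);

console.log(nextState === prevState); // false: the context value is a new object
console.log(nextState.currentUser === prevState.currentUser); // true: the user slice is untouched
```

The second check is the important one: the user slice is referentially identical, yet every consumer of the context is still notified because the outer object changed.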

If tickerValue updates every 50ms, the Provider must be handed a new AppState object reference on every tick. Consequently, a component that strictly consumes currentUser (and should remain static) is forced to re-render.

The Cost of O(N) Traversal

In a subtree with 1,000 consumers, a single context update triggers an O(N) traversal. Even if 999 of those components result in a “no-change” diff and do not touch the DOM, the CPU cost of the Render Phase is incurred.

React must build the work-in-progress tree, run diffing logic, and execute functional component bodies. During this time, the main thread is blocked. At 50ms intervals (20 updates per second), this results in “Context Thrashing”: render work can consume most of each interval, leaving little or no idle time for user interaction.
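As a rough back-of-the-envelope model (the per-consumer render cost $c$ is an assumed parameter, not a measured figure), the fraction of each update interval $T$ spent in the Render Phase for $N$ consumers is:

$$
U = \frac{N \cdot c}{T}
$$

With the figures used in this section, $N = 1000$ and $T = 50\,\text{ms}$, the main thread saturates ($U \ge 1$) once $c \ge T / N = 0.05\,\text{ms}$, that is, 50 microseconds per consumer render.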

3. Concurrent Rendering and the "Tearing" Risk

React 18 introduced concurrent rendering, allowing the “Render Phase” to be interruptible. React can now yield to the main thread to handle urgent user input (like typing) before resuming a render. While this improves responsiveness, it introduces a concurrency hazard known as Visual Tearing.

The Anatomy of a Tear

Imagine a store value that increments from 10 to 11.

- Start: React begins rendering a component tree.

- Read A: Component A reads store.value (Value = 10).

- Yield: React yields to the browser to handle a high-priority event.

- External Event: A WebSocket message arrives, updating store.value to 11.

- Resume: React resumes rendering.

- Read B: Component B (sibling to A) reads store.value (Value = 11).

- Commit: React commits the tree to the DOM.
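The sequence above can be modelled without React. The sketch below is a simplified stand-in, not React's scheduler: renderWithYield and unsafeStore are hypothetical names, and the "yield" is just a callback that runs after the first component has rendered.

```typescript
// A plain mutable store, read directly with no consistency check.
const unsafeStore = { value: 10 };

// Models an interruptible render pass: components render in order, and
// `duringYield` runs after the first one, standing in for React yielding
// to the browser mid-render.
function renderWithYield(components: string[], duringYield: () => void): string[] {
  const output: string[] = [];
  components.forEach((label, index) => {
    output.push(`${label}=${unsafeStore.value}`); // each component reads "now"
    if (index === 0) duringYield();
  });
  return output;
}

// The external event (e.g. a WebSocket message) lands during the yield.
const tornFrame = renderWithYield(["A", "B"], () => {
  unsafeStore.value = 11;
});

console.log(tornFrame.join(" ")); // A=10 B=11
```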

The Result: The UI displays 10 and 11 simultaneously in the same frame. This inconsistency is unacceptable in financial or engineering applications.

Why useEffect is Unsafe

Legacy patterns often used useEffect to subscribe to external stores. This is architecturally unsound in React 18+.

Subscriptions inside useEffect fire after the commit phase. There is a temporal gap between the initial render and the effect execution where the store might change. If the store updates during this gap, the component renders with stale data, mounts, subscribes, detects the change, and forces a second “fixing” render. This causes layout thrashing and visual artifacts.
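The gap can be illustrated with a React-free timeline. This is a sketch under stated assumptions: createFeed is a hypothetical observable, and the numbered steps stand in for render, an external update, and the effect running after commit.

```typescript
type Callback = () => void;

// Tiny observable value; `createFeed` is a hypothetical helper for this sketch.
function createFeed(initial: number) {
  let current = initial;
  const callbacks: Callback[] = [];
  return {
    read: () => current,
    write: (next: number) => {
      current = next;
      callbacks.forEach((cb) => cb());
    },
    subscribe: (cb: Callback) => {
      callbacks.push(cb);
    },
  };
}

const feed = createFeed(10);

const renderedValue = feed.read(); // 1. render reads 10
feed.write(11); // 2. the store changes before the effect has run
let notifications = 0;
feed.subscribe(() => {
  notifications++;
}); // 3. the effect subscribes after commit: the change was missed

// 4. Only an explicit re-check after subscribing reveals the stale render.
const needsFixupRender = renderedValue !== feed.read();
console.log(notifications, needsFixupRender); // 0 true
```

The subscriber was never notified, so the component has to compare its rendered value with the store after subscribing and schedule the second render itself.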

The useSyncExternalStore Guarantee

useSyncExternalStore (uSES) solves this by enforcing atomicity during the render.

- Synchronous Reads: Re-renders triggered by a store change are processed synchronously, so React does not yield partway through them.

- Consistency Check: It relies on a getSnapshot method. If the store changes during a concurrent render, React detects the mismatch between the snapshot read during the render and the current snapshot. It then forces a synchronous restart of the render to ensure consistency.
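The check-and-restart behaviour can be approximated in a few lines. This is a simplified model of the idea, not React's implementation; renderPass and renderAndCommit are hypothetical names.

```typescript
interface ReadableStore {
  value: number;
}

interface ComponentRead {
  label: string;
  seen: number;
}

// One render pass. Each component takes its own snapshot when it renders;
// `duringYield` (if given) runs after the first component, like a yield.
function renderPass(
  store: ReadableStore,
  components: string[],
  duringYield?: () => void,
): ComponentRead[] {
  return components.map((label, index) => {
    const seen = store.value; // plays the role of getSnapshot()
    if (index === 0 && duringYield) duringYield();
    return { label, seen };
  });
}

// Before committing, compare every snapshot taken during the pass with the
// store's current value; on any mismatch, render again without yielding.
function renderAndCommit(
  store: ReadableStore,
  components: string[],
  duringYield: () => void,
): string[] {
  let reads = renderPass(store, components, duringYield);
  const consistent = reads.every((read) => Object.is(read.seen, store.value));
  if (!consistent) {
    reads = renderPass(store, components); // synchronous restart
  }
  return reads.map((read) => `${read.label}=${read.seen}`);
}

const checkedStore: ReadableStore = { value: 10 };
const committedFrame = renderAndCommit(checkedStore, ["A", "B"], () => {
  checkedStore.value = 11; // external update during the yield
});

console.log(committedFrame.join(" ")); // A=11 B=11
```

The first pass still reads 10 and 11, but it is discarded before commit, so the user never sees the mixed frame.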

4. Architecture: Implementing the Selector-Based Store

To achieve O(1) re-rendering, where an update triggers a re-render only for components listening to that specific slice of state, we must decouple state from the React tree entirely.

We need a singleton store architecture managed via useSyncExternalStore.

Step 1: The Vanilla Store Factory

First, we build a store that has zero dependencies on React. This allows the state to exist outside the component lifecycle, making it immune to unmounts/remounts and accessible to non-React utilities.
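A minimal sketch of such a factory. The names createStore, getState, setState, and subscribe are illustrative choices, and the updater-function style of setState is an assumption made to keep the types simple:

```typescript
type Listener = () => void;

interface Store<S> {
  getState: () => S;
  setState: (updater: (prev: S) => S) => void;
  subscribe: (listener: Listener) => () => void;
}

// Framework-agnostic store: state lives in a closure, outside any component.
function createStore<S>(initialState: S): Store<S> {
  let state = initialState;
  const listeners = new Set<Listener>();

  return {
    // Returns the current state reference; stable until the next real change.
    getState: () => state,

    // Updates are immutable: the updater returns the next state object.
    setState: (updater) => {
      const next = updater(state);
      if (Object.is(next, state)) return; // nothing changed, skip notifications
      state = next;
      listeners.forEach((listener) => listener());
    },

    // Returns an unsubscribe function, the shape useSyncExternalStore expects.
    subscribe: (listener) => {
      listeners.add(listener);
      return () => {
        listeners.delete(listener);
      };
    },
  };
}
```

Because subscribe takes a callback and returns an unsubscribe function, and getState returns a stable reference between changes, the two can be passed to useSyncExternalStore as its subscribe and getSnapshot arguments.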

Step 2: The Custom Hook with Selector Support

A naive implementation of useSyncExternalStore will trigger a re-render on every store update. To solve the “Everything Problem,” we must implement a selector pattern.

Critical Engineering Note: The native useSyncExternalStore uses Object.is equality on the result of getSnapshot. If your selector returns a derived object (e.g., state => ({ a: state.a })), it creates a new reference every render, causing an infinite loop.

For production implementations, we recommend using the useSyncExternalStoreWithSelector helper (shipped in the use-sync-external-store package) or manual ref-based memoization.
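A sketch of the manual memoization approach, kept free of React imports so it runs standalone. createSelectorSnapshot and shallowEqual are hypothetical helpers; the hook wiring is shown as a comment and assumes React 18+ plus a store exposing getState and subscribe.

```typescript
// Wraps a selector so that repeated calls return the same reference until the
// selected slice actually changes according to `isEqual`.
function createSelectorSnapshot<S, T>(
  getState: () => S,
  selector: (state: S) => T,
  isEqual: (a: T, b: T) => boolean = Object.is,
): () => T {
  let cache: { state: S; selected: T } | undefined;

  return () => {
    const state = getState();
    // Same store state as last time: return the cached selection untouched.
    if (cache !== undefined && Object.is(cache.state, state)) {
      return cache.selected;
    }
    const selected = selector(state);
    // State changed but the slice is equal: keep the old reference.
    if (cache !== undefined && isEqual(cache.selected, selected)) {
      cache.state = state;
      return cache.selected;
    }
    cache = { state, selected };
    return selected;
  };
}

// Shallow comparison for selectors that return small derived objects.
function shallowEqual<T extends object>(a: T, b: T): boolean {
  const keys = Object.keys(a) as (keyof T)[];
  if (keys.length !== Object.keys(b).length) return false;
  return keys.every(
    (key) => Object.prototype.hasOwnProperty.call(b, key) && Object.is(a[key], b[key]),
  );
}

// Hook wiring (assumes React 18+; left as a comment so the sketch has no dependencies):
//
//   function useStoreSelector<S, T>(store, selector, isEqual = Object.is): T {
//     const getSnapshot = useMemo(
//       () => createSelectorSnapshot(store.getState, selector, isEqual),
//       [store, selector, isEqual],
//     );
//     return useSyncExternalStore(store.subscribe, getSnapshot);
//   }
```

In this sketch the cache is rebuilt whenever the selector identity changes, so selectors are best defined at module level or wrapped in useCallback.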

Usage Example

Component A subscribes only to ticker, ignoring user updates.
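The behaviour described here can be checked with a React-free stand-in. In the sketch below, mountConsumer is a hypothetical function that imitates what a selector-based hook does per component: it reruns the selector on every store notification and counts a "render" only when the selected value changes. The store is a compact inline version with the same getState/setState/subscribe shape.

```typescript
type DashboardState = { ticker: number; user: string };

// Compact inline store so the sketch is self-contained.
function createDashboardStore(initial: DashboardState) {
  let state = initial;
  const listeners = new Set<() => void>();
  return {
    getState: () => state,
    setState: (patch: Partial<DashboardState>) => {
      state = { ...state, ...patch };
      listeners.forEach((listener) => listener());
    },
    subscribe: (listener: () => void) => {
      listeners.add(listener);
      return () => {
        listeners.delete(listener);
      };
    },
  };
}

// Stand-in for a component reading one slice through a selector.
function mountConsumer<T>(
  store: ReturnType<typeof createDashboardStore>,
  selector: (state: DashboardState) => T,
) {
  let selected = selector(store.getState());
  let renderCount = 1; // the initial render
  const unsubscribe = store.subscribe(() => {
    const next = selector(store.getState());
    if (!Object.is(next, selected)) {
      selected = next;
      renderCount++; // only a changed slice counts as a re-render
    }
  });
  return { getRenderCount: () => renderCount, unsubscribe };
}

const dashboard = createDashboardStore({ ticker: 0, user: "ada" });
const componentA = mountConsumer(dashboard, (s) => s.ticker);
const componentB = mountConsumer(dashboard, (s) => s.user);

dashboard.setState({ user: "grace" }); // Component A ignores this
dashboard.setState({ ticker: 1 });
dashboard.setState({ ticker: 2 });

console.log(componentA.getRenderCount(), componentB.getRenderCount()); // 3 2

// In React, Component A would read the same slice with:
//   const ticker = useSyncExternalStore(store.subscribe, () => store.getState().ticker);
```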

5. Benchmark Comparison: The "50ms Tick"

At Azguards Technolabs, we profiled this architecture against native Context in a telemetry dashboard scenario.

Scenario: A dashboard with 50 widgets. One widget updates every 50ms. Metric: Main Thread Blocking Time & Render Counts.

1. Context Implementation

Behavior: The Root Context Object reference updates. React flags all 50 consumers.

Render Phase: 50 Components enter the render phase.

Wasted Work: 49 Components render, run diffing logic, and then bail out (if memoized) or commit (if not).

Performance: Significant CPU congestion. Input delay increases linearly with the number of consumers.

2. uSES (Selector) Implementation

Behavior: The Store updates. Listeners fire. 50 Selectors run purely (JavaScript execution, no React Fiber overhead).

Render Phase: For 49 selectors the equality check returns true (slice unchanged). For 1 selector it returns false.

Result: Only 1 Component enters the React Render Phase.

Performance: No wasted reconciliation work. The main thread remains largely idle between ticks.
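The render counts in this comparison follow from simple bookkeeping. The sketch below is a counting model only, not the profiling harness and not a timing measurement; countRenders is a hypothetical function, and the 20 ticks correspond to one second of updates at a 50 ms interval.

```typescript
interface RenderCounts {
  contextRenders: number;
  selectorCalls: number;
  selectorRenders: number;
}

// Counts work per model for `widgets` consumers over `ticks` updates,
// where only widget 0's data changes on each tick.
function countRenders(widgets: number, ticks: number): RenderCounts {
  let slots: number[] = [];
  for (let w = 0; w < widgets; w++) slots.push(0);

  const counts: RenderCounts = { contextRenders: 0, selectorCalls: 0, selectorRenders: 0 };

  for (let tick = 1; tick <= ticks; tick++) {
    const next = slots.slice();
    next[0] = tick;
    for (let w = 0; w < widgets; w++) {
      counts.contextRenders++; // Context model: new value object, every consumer renders
      counts.selectorCalls++; // Selector model: every selector still runs...
      if (!Object.is(next[w], slots[w])) counts.selectorRenders++; // ...but only changed slices render
    }
    slots = next;
  }
  return counts;
}

console.log(countRenders(50, 20));
// { contextRenders: 1000, selectorCalls: 1000, selectorRenders: 20 }
```

The selector model still does work proportional to the number of consumers, but that work is plain function calls rather than component renders.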

Bundle Size & Overhead Analysis

| Solution | Bundle Size (Gzipped) | Dependency Risk | Complexity |
|---|---|---|---|
| Native Context | 0 KB | None | Low (until optimized) |
| Custom uSES | < 1 KB | Low (built-in React hook) | High (manual maintenance) |
| Zustand (v4+) | ~1.2 KB | Very Low | Low (abstraction) |
| Redux Toolkit | ~12 KB | Medium | Medium/High |

6. Strategic Action Plan

As systems scale, “works on my machine” is replaced by “latency at the 99th percentile.” React Context is a dependency injection tool, not a high-frequency state manager.

Recommendation 1: Audit Your State Velocity

Classify your application state into two buckets:

- Low-Frequency/Global: Theme, User Auth, Locale.

  - Solution: Stick with React Context. The propagation cost is negligible here.

- High-Frequency/Atomic: Data grids, Forms, Real-time feeds, Mouse coordinates.

  - Solution: Migrate to uSES.

Recommendation 2: Don’t Reinvent the Wheel (Usually)

While the custom implementation above validates the architecture, maintaining your own selector memoization logic is prone to edge cases.

For the large majority of enterprise applications, Zustand is the correct engineering choice. It is essentially a battle-tested wrapper around useSyncExternalStore that handles selector memoization and transient updates for ~1.2KB.

Adopt Custom uSES Only If:

- You have strict zero-dependency requirements.

- You are integrating with a non-React engine (e.g., WebGL, Canvas, Legacy Systems) where you need absolute control over the subscription lifecycle.

Recommendation 3: Stop Patching Context

We frequently see teams attempting to optimize Context by splitting it into multiple providers (DispatchContext, StateContext) or wrapping every consumer in useMemo. This is technical debt. When state velocity increases, the overhead of context propagation cannot be memoized away. You must bypass the tree.

The shift from Context to useSyncExternalStore represents a maturation in React architecture. It acknowledges that while React’s render cycle is powerful, it is too expensive for granular, high-velocity data streams. By moving state outside the component tree and bridging it back via atomic, selector-based subscriptions, we reclaim the main thread and eliminate the propagation penalty.

Azguards Technolabs: Performance Audit & Specialized Engineering

Optimizing high-scale React applications requires more than just code; it requires architectural precision. At Azguards, we specialize in solving the “Hard Parts” of engineering, from eliminating reconciliation thrashing to architecting micro-frontends.

If your platform is suffering from rendering bottlenecks or technical debt, contact our engineering team for a comprehensive Performance Architecture Review. We don’t just patch bugs; we re-engineer for scale.
