How to Fix Make.com Webhook Queue Overflows: The DLQ & Redis Strategy

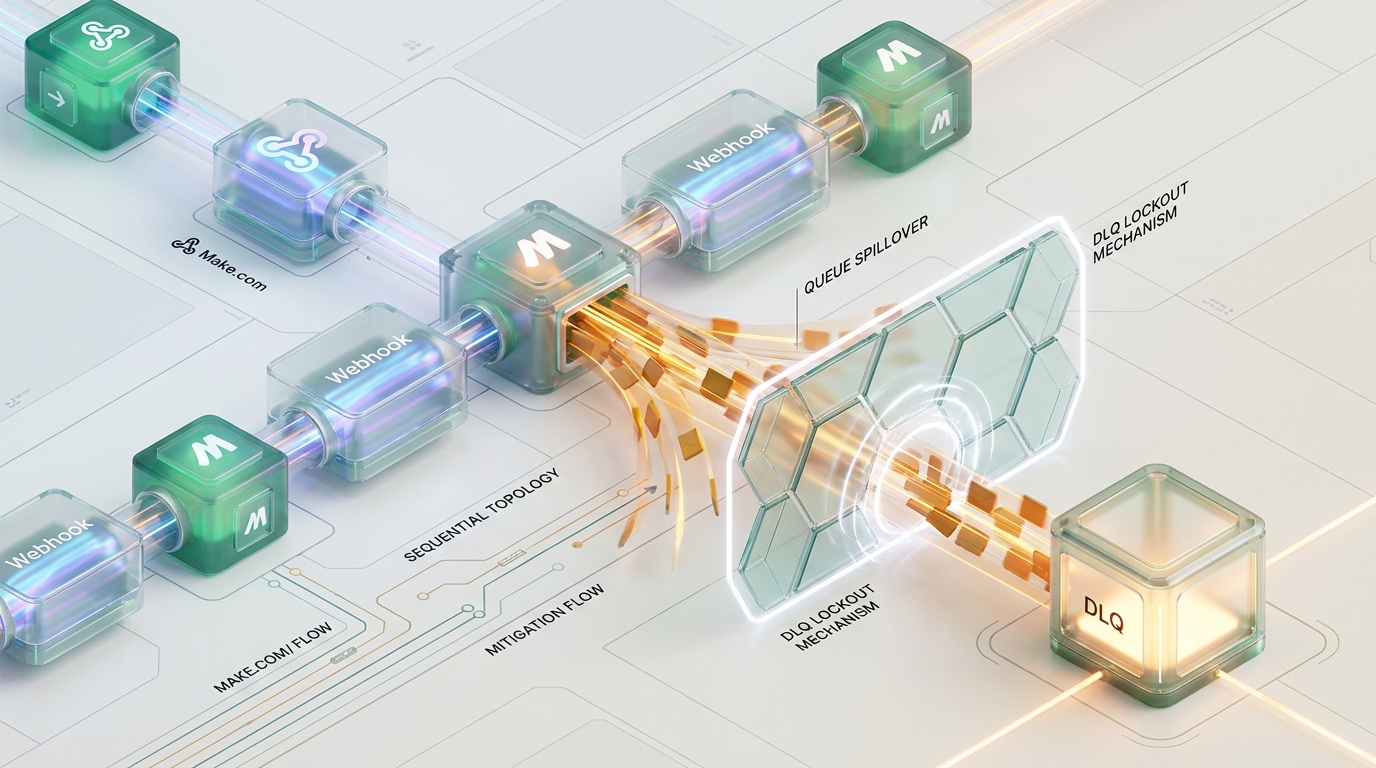

When architecting high-throughput integration topologies, the mechanism used to handle transient errors dictates the resilience of the entire system. In Make.com, the “Sequential processing” scenario setting is the standard way to maintain chronological order, but it is also a structural vulnerability. One downstream timeout can trigger a global scenario lockout, causing the webhook queue to fill, spill over, and drop mission-critical data.

At Azguards Technolabs, we solve this by decoupling execution state from error state. By repurposing native error routing, offloading failed payloads to an AWS SQS Dead Letter Queue (DLQ), and using Redis-backed circuit breakers for entity-level idempotency, architects can bypass global lockouts while maintaining strict chronological sequencing per entity.

The Anatomy of a Spillover: Make.com’s Native Lockout Mechanism

To engineer a bypass, we must first dissect the exact state machine transitions that trigger the DLQ lockout. Relying on Make.com’s native error persistence for sequential workflows is a critical point of failure because its ingress buffer is finite: once the scenario is paused, the webhook queue fills at the arrival rate until it hits fixed platform limits.

The State Machine Lockout Path

- Module Failure: A downstream node encounters an unhandled exception. For example, an upsert operation to HubSpot times out.

- Execution Halt: The current execution transitions into an `Incomplete` state. Because the scenario enforces sequential processing, Make.com globally pauses scenario scheduling. It cannot process webhook N+1 until webhook N is successfully resolved.

- Ingress Buffering: The Webhook Gateway stops passing payloads to the scenario and begins holding them in a passive memory buffer.

- Buffer Exhaustion (The Hard Limits):

  - Queue Depth Limit: Make.com allocates exactly 667 queue items per 10,000 licensed credits.

  - Absolute Ceiling: Regardless of your credit volume, the platform enforces a hard maximum of 10,000 items per webhook.

  - Ingress Rate Limit: The gateway is capped at 30 requests per second.

- The Spillover: The moment the buffer ceiling is reached, the API gateway initiates hard rejections. Upstream webhook providers (such as Stripe or Salesforce) receive `429` or `400` status codes. Some upstream systems retry with exponential backoff, but many do not, and once retries are exhausted the payload is permanently dropped.
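These limits turn spillover into simple arithmetic: once the scenario is paused, the buffer fills at the arrival rate. The Python sketch below models that, assuming capacity grows in whole 10,000-credit blocks of 667 items (whether partial blocks are pro-rated is not stated here) and that traffic arrives at a steady rate. The function names are hypothetical.

```python
# Back-of-the-envelope model of webhook queue exhaustion while a scenario is paused.
# Assumption: capacity grows in whole 10,000-credit blocks of 667 items (no
# pro-rating) and is capped at 10,000 items, per the limits quoted above.

ITEMS_PER_10K_CREDITS = 667
ABSOLUTE_QUEUE_CEILING = 10_000
INGRESS_RATE_LIMIT_RPS = 30


def queue_capacity(licensed_credits: int) -> int:
    """Maximum number of webhooks buffered for one webhook while scheduling is paused."""
    blocks = licensed_credits // 10_000
    return min(blocks * ITEMS_PER_10K_CREDITS, ABSOLUTE_QUEUE_CEILING)


def seconds_until_spillover(licensed_credits: int, arrival_rps: float) -> float:
    """Seconds from the lockout until the gateway starts hard-rejecting payloads."""
    if arrival_rps <= 0:
        return float("inf")  # nothing arrives, so the buffer never fills
    if arrival_rps > INGRESS_RATE_LIMIT_RPS:
        return 0.0  # requests above the rate limit are rejected from the start
    return queue_capacity(licensed_credits) / arrival_rps


if __name__ == "__main__":
    for credits in (10_000, 40_000, 150_000):
        print(
            f"{credits:>7,} credits: {queue_capacity(credits):>6,} items, "
            f"spillover after {seconds_until_spillover(credits, 5.0):,.1f} s at 5 req/s"
        )
```

With 40,000 credits, for example, the buffer holds 4 × 667 = 2,668 items, so a steady 5 requests per second fills it in 533.6 seconds, under nine minutes after the first unhandled timeout.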

Architectural Mitigation: SQS Offloading and the State Mutation Directive

To preserve ingress health and prevent the 429 spillover, the system must intentionally bypass the “Incomplete Execution” lock. The overarching strategy is to intercept the error, externalize the payload to a highly available message broker like AWS SQS, and force the Make.com scenario into a successful termination state.

The Sequence Flow

- Error Routing Integration: Attach an explicit error handler route to any volatile module within the topology.

- DLQ Offload: Utilize an HTTP module configured for the AWS API, or the native Amazon SQS module, to publish the execution context of the failed bundle directly into a FIFO (First-In-First-Out) queue.

- State Mutation via “Ignore” Directive: Terminate the error route with an `Ignore` module.

The choice of directive here is the linchpin of the entire mitigation strategy. Make.com provides several error handling directives, but only one correctly manipulates the scheduler state for high-throughput resilience:

Why not Break? The Break directive is the default mechanism for generating an Incomplete Execution. Using it simply triggers the exact sequence lockout we are trying to avoid.

Why not Commit? The Commit directive stops the execution at the current failing module but tells the system to finalize the state as if it were successful up to that point. While it bypasses the lockout, it causes logic gaps for subsequent modules that depend on the failed module’s output.

The Ignore Directive: This directive explicitly drops the current failing bundle and mutates the scenario’s execution state to Success. By forcing a successful termination, the Make.com scheduler is instantly released. It immediately fetches and processes the next webhook in the queue, completely bypassing the global scenario suspension.
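The difference between `Break` and `Ignore` can be illustrated with a toy sequential scheduler. This is not Make.com’s scheduler; it is a hypothetical in-memory model in which a `TimeoutError` stands in for a downstream timeout and appending to a list stands in for the SQS publish on the error route.

```python
from collections import deque
from typing import Callable, List, Tuple

Bundle = dict


def run_sequential(webhooks: List[Bundle], handler: Callable[[Bundle], None],
                   directive: str) -> Tuple[List[Bundle], List[Bundle], List[Bundle]]:
    """Return (processed, offloaded_to_dlq, still_queued) for a toy sequential run."""
    if directive not in ("break", "ignore"):
        raise ValueError("directive must be 'break' or 'ignore'")
    queue = deque(webhooks)
    processed, dlq = [], []
    while queue:
        try:
            handler(queue[0])
            processed.append(queue.popleft())
        except TimeoutError:
            if directive == "break":
                # Incomplete execution: scheduling pauses on this bundle and
                # everything behind it stays buffered in the webhook queue.
                break
            # Error route: offload the failed bundle (the SQS push), then Ignore
            # marks the run successful and the scheduler takes the next webhook.
            dlq.append(queue.popleft())
    return processed, dlq, list(queue)


if __name__ == "__main__":
    def flaky_crm(bundle: Bundle) -> None:
        if bundle["id"] == 2:
            raise TimeoutError("HubSpot upsert timed out")

    hooks = [{"id": i} for i in range(1, 5)]
    for directive in ("break", "ignore"):
        done, dlq, waiting = run_sequential(hooks, flaky_crm, directive)
        print(directive, [b["id"] for b in done], [b["id"] for b in dlq],
              [b["id"] for b in waiting])
```

Under `break`, only webhook 1 is processed and webhooks 2 to 4 remain queued; under `ignore`, webhooks 1, 3, and 4 are processed and webhook 2 sits in the DLQ.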

Payload Reconstruction: Trace Propagation in Error Routes

For deterministic state reconciliation later in the pipeline, the DLQ payload must carry the full request context. A significant caveat in Make.com’s error routing is that standard HTTP headers are often stripped or inaccessible once the scenario jumps to the error path.

Therefore, correlation IDs and tracing metadata must be explicitly mapped into the SQS message body so the offloaded state can be replayed deterministically.

Architecture Rule: To utilize an AWS SQS FIFO queue effectively, you must map your primary entity identifier (e.g., {{1.body.customer_id}}) directly to the SQS MessageGroupId. AWS SQS FIFO queues guarantee strict ordering within a specific MessageGroupId. If a webhook pertaining to customer_123 fails, and later another webhook for customer_123 fails, mapping the entity ID ensures that these specific failures are kept in strict chronological order during the eventual recovery phase, preventing state corruption.
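As a sketch of that explicit mapping, the Python function below assembles the request an error route might publish. The envelope field names (`correlation_id`, `traceparent`, `failed_module`, and so on) and the queue URL are hypothetical placeholders rather than a prescribed schema; the returned keys do match the keyword arguments of boto3’s SQS `send_message` call.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def build_dlq_message(queue_url: str, entity_id: str, webhook_body: dict,
                      correlation_id: str, traceparent: Optional[str],
                      failed_module: str, error_message: str) -> dict:
    """Build send_message kwargs for an SQS FIFO DLQ (hypothetical envelope fields)."""
    envelope = {
        # Mapped explicitly because headers may be unavailable on the error route.
        "correlation_id": correlation_id,
        "traceparent": traceparent,
        "entity_id": entity_id,
        "failed_module": failed_module,
        "error": error_message,
        "offloaded_at": datetime.now(timezone.utc).isoformat(),
        "payload": webhook_body,  # the original webhook bundle, untouched
    }
    return {
        "QueueUrl": queue_url,
        "MessageBody": json.dumps(envelope, separators=(",", ":")),
        # FIFO ordering only holds within one MessageGroupId, so use the entity ID.
        "MessageGroupId": entity_id,
        # Required for FIFO queues unless content-based deduplication is enabled;
        # stable per webhook so a retried publish is deduplicated by SQS.
        "MessageDeduplicationId": hashlib.sha256(correlation_id.encode()).hexdigest(),
    }


if __name__ == "__main__":
    params = build_dlq_message(
        queue_url="https://sqs.us-east-1.amazonaws.com/123456789012/make-dlq.fifo",  # hypothetical
        entity_id="customer_123",
        webhook_body={"customer_id": "customer_123", "plan": "pro"},
        correlation_id="evt_0001",
        traceparent=None,
        failed_module="HubSpot upsert",
        error_message="timeout",
    )
    print(params["MessageGroupId"], params["MessageDeduplicationId"][:12])
    # With boto3 installed and AWS credentials configured:
    #   import boto3
    #   boto3.client("sqs").send_message(**params)
```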

Distributed Chronological Desynchronization

Externalizing the Dead Letter Queue effectively solves the buffer spillover problem. The ingress gateway remains open, payloads are never dropped, and upstream systems receive uninterrupted 200 OK responses. However, this architectural shift introduces a critical distributed systems vulnerability: Chronological Desynchronization.

Consider the following race condition:

- Webhook A (an update to `user_123`) is processed, but the downstream CRM times out.

- The error route catches the failure, offloads Webhook A to the SQS DLQ, and triggers the `Ignore` directive.

- The Make.com scheduler advances the queue.

- Webhook B (a subsequent, newer update to `user_123`) is pulled from the queue. The CRM is now responsive, and Webhook B processes successfully, updating the database with the newest state.

- Sometime later, a cron job or AWS Lambda function attempts to replay Webhook A from the SQS DLQ.

- Webhook A succeeds, overwriting the fresh data from Webhook B with stale state.

By dropping the global scenario lock, we traded consistency for availability. To regain consistency without sacrificing availability, we must implement a granular, per-entity locking mechanism.
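The race, and why a per-entity lock resolves it, can be reproduced with a small in-memory simulation. Everything in it is hypothetical: a dict stands in for the CRM, a list for the SQS FIFO queue, and a set for the Redis locks, and the CRM is assumed to time out on its first call only.

```python
def simulate(use_entity_lock: bool) -> str:
    """Send Webhook A then Webhook B for user_123, replay the DLQ, return the final CRM value."""
    crm = {}           # stands in for the downstream CRM
    dlq = []           # stands in for the SQS FIFO queue: (entity_id, value) in order
    locked = set()     # stands in for Redis lock keys
    outages_left = 1   # assumption: the CRM times out on the first call only

    def handle(entity: str, value: str) -> None:
        nonlocal outages_left
        if use_entity_lock and entity in locked:
            dlq.append((entity, value))  # fast-fail behind the earlier failure
            return
        if outages_left:
            outages_left -= 1
            dlq.append((entity, value))  # error route: offload, then Ignore
            if use_entity_lock:
                locked.add(entity)
            return
        crm[entity] = value  # normal processing succeeds

    handle("user_123", "A (older)")
    handle("user_123", "B (newer)")

    # Later, a replay worker drains the DLQ in FIFO order and releases the locks.
    for entity, value in dlq:
        crm[entity] = value
    locked.clear()
    return crm["user_123"]


if __name__ == "__main__":
    print("without entity lock:", simulate(False))  # stale A wins
    print("with entity lock:   ", simulate(True))   # newest B wins
```

Without the lock, B is written first and the replayed A overwrites it. With the lock, B is diverted into the queue behind A, so the replay applies A and then B.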

Theoretical Engineering Model: Idempotent Entity Locking via Redis Circuit Breaking

To maintain strict chronological ordering per entity without locking the entire scenario ingress, we implement a Redis-backed Circuit Breaker. While Make.com offers native data stores, they lack the atomic operations and sub-millisecond latency required for high-volume ingress gatekeeping. Instead, we utilize Make.com’s Redis CacheHandler implementation.

This model requires a four-phase lifecycle:

1. Lock Generation

Within the Error Route, immediately prior to executing the SQS push, write a lock to the Redis cluster using the entity’s primary identifier. Ensure you append an explicit Time-To-Live (TTL) to prevent permanent deadlocks in the event of a catastrophic failure.
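A minimal Python sketch of the lock write, assuming a redis-py style client (`redis.Redis`), whose `set(name, value, ex=...)` issues `SET key value EX ttl`. The key scheme `lock:entity:<id>`, the one-hour TTL, and the `InMemoryRedis` stand-in (included only so the sketch runs without a server) are hypothetical.

```python
import time
from typing import Dict, Optional, Tuple

LOCK_TTL_SECONDS = 3600  # hypothetical; should exceed the worst-case DLQ drain time


def lock_key(entity_id: str) -> str:
    """Hypothetical key scheme shared by the gatekeeper and the reconciler."""
    return f"lock:entity:{entity_id}"


def write_entity_lock(client, entity_id: str, correlation_id: str,
                      ttl: int = LOCK_TTL_SECONDS) -> None:
    """Run on the error route immediately before the SQS push."""
    # SET key value EX ttl: the lock expires on its own even if the
    # reconciliation worker never runs, so an entity cannot stay locked forever.
    client.set(lock_key(entity_id), correlation_id, ex=ttl)


class InMemoryRedis:
    """Stand-in for the few redis.Redis methods these sketches use (single process only)."""

    def __init__(self) -> None:
        self._data: Dict[str, Tuple[str, Optional[float]]] = {}

    def set(self, name: str, value: str, ex: Optional[int] = None,
            nx: bool = False) -> Optional[bool]:
        if nx and self.exists(name):
            return None
        expires_at = time.monotonic() + ex if ex is not None else None
        self._data[name] = (value, expires_at)
        return True

    def exists(self, *names: str) -> int:
        now = time.monotonic()
        return sum(
            1 for n in names
            if n in self._data and (self._data[n][1] is None or self._data[n][1] > now)
        )

    def delete(self, *names: str) -> int:
        removed = self.exists(*names)
        for n in names:
            self._data.pop(n, None)
        return removed


if __name__ == "__main__":
    r = InMemoryRedis()  # with a server: redis.Redis(host="localhost", decode_responses=True)
    write_entity_lock(r, "customer_123", "corr-42")
    print(r.exists(lock_key("customer_123")))
```

The TTL is a trade-off: if it expires while messages for the entity are still in the DLQ, new webhooks for that entity will pass the gatekeeper, so it should comfortably exceed the time the reconciliation worker needs to drain a group.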

2. Ingress Gatekeeper

At the very beginning of the Make.com scenario—directly following the webhook trigger and before any downstream business logic—execute a Redis query to check for the existence of an entity lock.

3. Fast-Fail Routing

If the Redis query returns true (the lock exists), the scenario must immediately bypass the standard processing logic. Using a routing filter, the scenario instantly pushes the incoming payload directly into the AWS SQS DLQ (appending to the same MessageGroupId), and terminates.

This mechanism preserves total ordering inside the SQS queue for that specific entity. Because the scenario terminates successfully, the Make.com scheduler advances instantly, allowing all other unrelated webhooks (those querying entities without an active Redis lock) to process normally.
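Phases 2 and 3 together amount to a single branch at the top of the scenario. The Python sketch below shows that branch, assuming a client with redis-py’s `exists(*names)` method and an entity ID at `body["customer_id"]`; `route_webhook`, `publish_to_dlq`, `process`, and `StubLockStore` are hypothetical names standing in for the Redis query, the SQS push, and the normal business logic.

```python
from typing import Callable, Set


def lock_key(entity_id: str) -> str:
    return f"lock:entity:{entity_id}"  # hypothetical; must match the phase-1 key


def route_webhook(client, body: dict,
                  publish_to_dlq: Callable[[str, dict], None],
                  process: Callable[[dict], None]) -> str:
    """Gatekeeper run directly after the webhook trigger, before any business logic."""
    entity_id = str(body["customer_id"])  # assumption: where the entity ID lives
    if client.exists(lock_key(entity_id)):
        # Fast-fail: earlier failures for this entity are still in the DLQ, so
        # append behind them (same MessageGroupId) and end the run successfully.
        publish_to_dlq(entity_id, body)
        return "diverted"
    process(body)  # no lock: this entity flows through normally
    return "processed"


class StubLockStore:
    """Hypothetical stand-in exposing only redis.Redis.exists()."""

    def __init__(self, keys: Set[str]) -> None:
        self.keys = set(keys)

    def exists(self, *names: str) -> int:
        return sum(1 for n in names if n in self.keys)


if __name__ == "__main__":
    store = StubLockStore({lock_key("customer_123")})
    dlq, processed = [], []
    for body in ({"customer_id": "customer_123"}, {"customer_id": "customer_456"}):
        outcome = route_webhook(store, body,
                                lambda group, b: dlq.append((group, b)),
                                processed.append)
        print(body["customer_id"], "->", outcome)
```

The locked `customer_123` is diverted to the DLQ while the unrelated `customer_456` is processed normally in the same scenario.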

4. Deterministic Reconciliation

An external AWS Lambda function, or a secondary dedicated Make.com polling scenario, ingests the SQS FIFO queue messages. It attempts to execute the operations against the downstream CRM or database in order. Once every message tied to a specific MessageGroupId has been successfully reconciled, the worker issues a `DEL` command for that entity’s lock key in the Redis cluster.

The lock is removed, and normal throughput for that specific entity is restored natively within the primary Make.com scenario.
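A sketch of the reconciliation step in Python, assuming the worker has already received the messages for one `MessageGroupId` in order (as an SQS FIFO consumer would) and holds a client with redis-py’s `delete(*names)` method. `reconcile_group`, `apply`, and `StubLockStore` are hypothetical names; `apply` stands in for the CRM or database write.

```python
from typing import Callable, List, Set


def lock_key(entity_id: str) -> str:
    return f"lock:entity:{entity_id}"  # hypothetical; must match the phase-1 key


def reconcile_group(client, entity_id: str, group: List[dict],
                    apply: Callable[[dict], None]) -> int:
    """Replay one MessageGroupId in FIFO order; return how many messages were applied.

    Stops at the first failure so a later message never overtakes an earlier
    one, and releases the Redis lock only after the whole group succeeded.
    In a real SQS consumer, each applied message would also be deleted from
    the queue (DeleteMessage) before moving on.
    """
    applied = 0
    for message in group:
        try:
            apply(message)
        except Exception:  # sketch: any downstream error halts this group
            return applied  # the lock stays; retry the remainder later
        applied += 1
    client.delete(lock_key(entity_id))  # normal throughput resumes for this entity
    return applied


class StubLockStore:
    """Hypothetical stand-in exposing only redis.Redis.delete()."""

    def __init__(self, keys: Set[str]) -> None:
        self.keys = set(keys)

    def delete(self, *names: str) -> int:
        hits = [n for n in names if n in self.keys]
        self.keys.difference_update(hits)
        return len(hits)


if __name__ == "__main__":
    crm = {}
    store = StubLockStore({lock_key("user_123")})
    replay = [{"entity": "user_123", "value": "A (older)"},
              {"entity": "user_123", "value": "B (newer)"}]
    count = reconcile_group(store, "user_123", replay,
                            lambda m: crm.__setitem__(m["entity"], m["value"]))
    print(count, crm, store.keys)
```

Halting on the first failure, rather than skipping ahead, is what keeps a partially drained group from reintroducing the stale-overwrite race.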

Performance Benchmarks: Native vs. Offloaded Topology

The architectural shift from native incomplete execution locking to SQS-offloaded, Redis-gated state management yields massive gains in throughput ceiling and fault tolerance. Based on system stress tests, the operational differences are stark:

| Metric / Constraint | Native Make.com Sequential Processing | SQS Offloaded + Redis Entity Locking |
|---|---|---|
| Max Queue Depth | 10,000 items (Absolute Hard Limit) | Virtually Unlimited (AWS SQS Quotas) |
| Ingress Allocation | 667 items per 10,000 credits | Credit volume independent |
| Ingress Rate Limit | 30 requests/second | Bound only by API Gateway / SQS throughput |
| Failure State Action | Global Scenario Suspension | Targeted, Sub-millisecond Entity Bypass |
| Data Loss Risk (Spillover) | Extremely High (HTTP 429 rejections) | Zero-loss architectural guarantee |
| Chronological Ordering | Guaranteed (at the cost of global locking) | Guaranteed per-entity via Redis + SQS FIFO |

The comparison shows that relying on native state persistence in a high-volume webhook ingestion environment is structurally unsafe. The offloaded topology keeps the platform’s ingress gateway available, protecting upstream webhook deliveries from unrecoverable payload drops.

Performance Audit and Specialized Engineering

Distributed queuing and state reconciliation are notoriously difficult to implement correctly. The margins for error in concurrency models, circuit breaker TTLs, and FIFO queue MessageGroupId configurations are unforgiving. A misconfiguration in the Redis locking logic or the DLQ mapping schema can result in silent data corruption or race conditions that are nearly impossible to trace in production environments.

Azguards Technolabs serves as the definitive engineering partner for resolving these specific integration bottlenecks. Our Systems Research Division specializes in the “Hard Parts” of engineering—specifically Performance Audits and Specialized Engineering for enterprise Make.com and AWS topologies. We do not just build integrations; we architect resilient, fault-tolerant infrastructure capable of sustaining enterprise-grade throughput without data degradation.

If your Make.com queues are regularly hitting the 10,000 item ceiling, or your upstream partners are reporting 429 Too Many Requests due to scenario suspension, your architecture requires immediate decoupling.

Queue spillovers in sequential Make.com topologies are an entirely solvable mathematical problem. The native DLQ lockout is a blunt instrument designed for general-purpose consistency. By utilizing the Ignore directive to mutate execution states, externalizing dead letters to an AWS SQS FIFO queue, and replacing global scenario suspensions with high-speed Redis entity locks, Integration Architects can engineer absolute resilience into their ingestion layers.

Stop allowing downstream API timeouts to dictate the availability of your upstream ingress. Secure your data layer, enforce idempotent throughput, and eliminate the 429 spillover permanently.


Azguards Technolabs

Stop the 429 Spillover Today

Is your Make.com ingress buckling under pressure? Our Systems Research Division specializes in the "Hard Parts" of engineering—architecting resilient, zero-loss topologies for enterprise-grade throughput.
