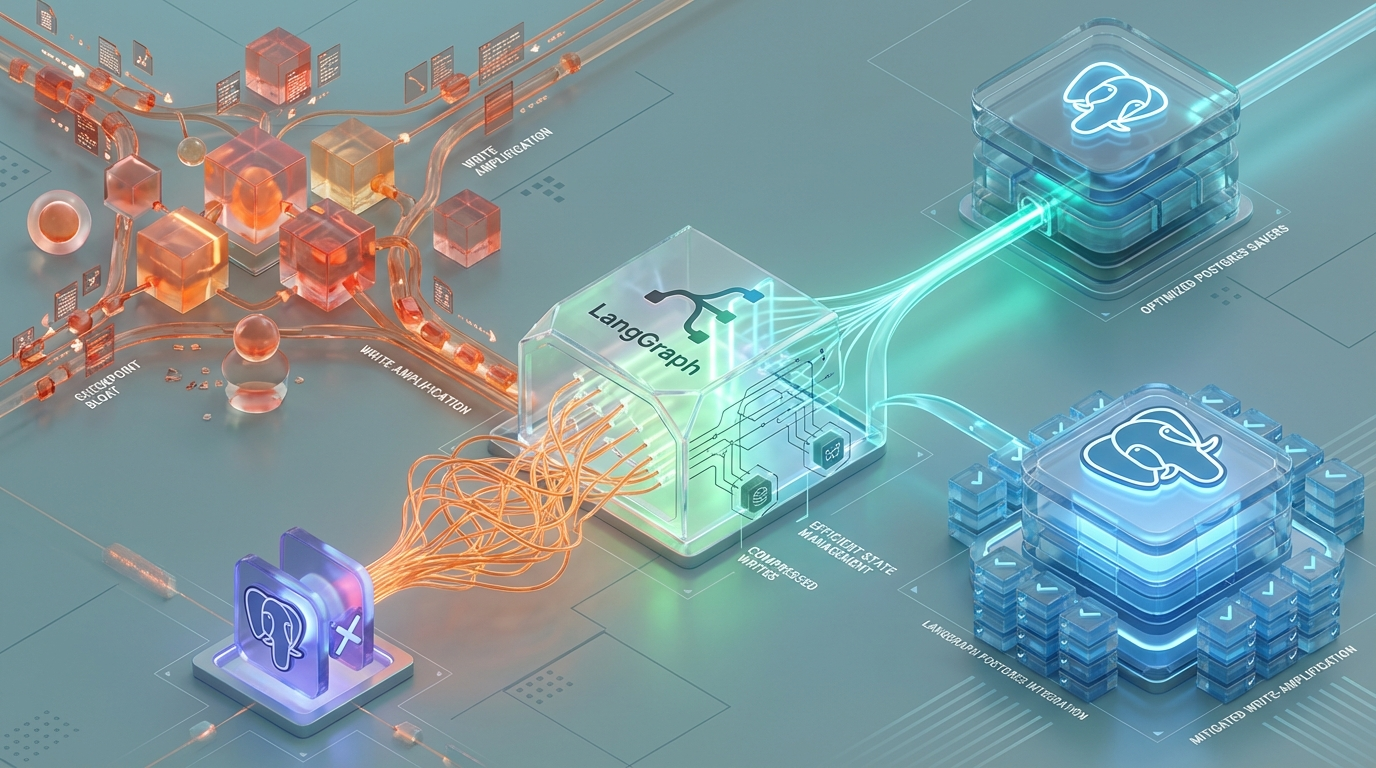

The Checkpoint Bloat: Mitigating Write-Amplification in LangGraph Postgres Savers

LangGraph’s execution model is built around checkpointed state snapshots. To guarantee deterministic replayability and support complex, cyclic multi-agent routing, the framework captures the state at the conclusion of every “superstep”: one round of node execution, in which nodes scheduled in parallel run together. In a linear graph, that means one checkpoint per node.

This state is then serialized and persisted. However, when deployed at scale in production RAG (Retrieval-Augmented Generation) environments, this fundamental persistence mechanic introduces severe database degradation. Lead engineers quickly discover that storing extensive conversational contexts, retrieved document arrays, and raw HTML payloads natively within LangGraph’s default PostgresSaver leads to cascading write-amplification, crippling disk I/O, and replication lag.

The root cause lies at the intersection of LangGraph’s append-only state machine and PostgreSQL’s internal storage architecture. This article dissects the mechanics of LangGraph’s checkpoint bloat and details the implementation of a Pointer State Pattern to decouple control-plane state from heavy data-plane payloads.

1. The Mechanics of LangGraph Checkpointing & State Serialization

To understand the bottleneck, we must first examine how LangGraph handles state persistence before it ever touches the database.

The Serialization Pipeline

By default, LangGraph checkpointers use the JsonPlusSerializer. Because AI workflows rely heavily on complex, non-standard types (e.g., Pydantic models, LangChain core primitives, datetime objects, and memory buffers), a standard JSON encoder is insufficient. The JsonPlusSerializer relies on fast native-code libraries to encode these object graphs into a binary payload. Which library does the work depends on the installed langgraph-checkpoint release: recent versions encode to msgpack (via ormsgpack) and fall back to a JSON encoding for types msgpack cannot handle, so check the version you run before tuning around a specific wire format.

The Persistence Strategy

Unlike traditional CRUD applications that mutate existing records, LangGraph enforces immutability. The PostgresSaver does not issue an UPDATE statement to a single state row. Instead, it performs an INSERT into the checkpoints table for every single superstep, identified by a composite primary key of (thread_id, checkpoint_ns, checkpoint_id).

The Payload Mechanics

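The inserted record carries the serialized snapshot of channel_values and channel_versions. This design is theoretically elegant: it enables arbitrary “time-travel,” allowing developers and agents to branch, debug, or replay executions from any historical checkpoint_id. In practice, it trades database storage capacity and disk I/O for this immutability. Retrieved documents, generated strings, and embedding arrays held in state are persisted again each time their snapshot is written, which in the worst case means once for every node the graph traverses. The exact duplication depends on the saver version: releases that move large channel values into a separate checkpoint_blobs table keyed by channel version rewrite a value only when its channel changes, so measure your own deployment before sizing the problem.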

2. The IOPS Penalty & PostgreSQL WAL Bloat

In isolated testing, inserting JSON payloads into PostgreSQL is trivial. In multi-agent RAG workflows, state keys (e.g., retrieved_documents, context_arrays, generation_history) frequently exceed 100KB. When paired with LangGraph’s snapshot-per-superstep mechanic and pushed to high concurrency, this triggers severe database degradation.

The Hard Limits: PostgreSQL and TOAST

PostgreSQL stores rows in fixed-size pages, 8KB by default, set at compile time by the BLCKSZ parameter. When a row carrying a serialized LangGraph checkpoint exceeds the TOAST_TUPLE_THRESHOLD (approximately 2KB), PostgreSQL can no longer keep the data in-line on the standard heap page. It first attempts to compress the large column values and, if the row is still too large, moves them out-of-line into a TOAST (The Oversized-Attribute Storage Technique) table.

Application-Level Write Amplification

Consider a standard RAG pipeline executing a 15-step graph (e.g., routing -> retrieval -> grading -> generation -> hallucination-checking). If the state payload swells to 100KB after retrieval, LangGraph continues to generate a discrete INSERT for the remaining nodes.

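If all 15 supersteps persist the 100KB payload, the run issues 15 discrete INSERT operations and writes roughly 1.5MB of checkpoint data (15 × 100KB). That is the upper bound for a single graph run; steps that execute before the payload swells write less.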
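The arithmetic can be checked with a few lines of Python. This is a rough sketch, not a measurement: the function name and the first_heavy_step parameter are illustrative, and it assumes every superstep from the first heavy one onward writes a full snapshot while earlier steps are negligible.

```python
KB = 1000  # decimal kilobytes, matching the round numbers in the text


def bytes_written_per_run(steps: int, payload_bytes: int, first_heavy_step: int = 1) -> int:
    """Checkpoint bytes written by one graph run.

    Assumes each superstep from first_heavy_step onward persists a full
    payload_bytes snapshot and that earlier supersteps write almost nothing.
    """
    heavy_steps = max(0, steps - first_heavy_step + 1)
    return heavy_steps * payload_bytes


# Worst case: the payload is already 100KB at the first superstep.
worst_case = bytes_written_per_run(steps=15, payload_bytes=100 * KB)

# Payload only swells at step 2 (retrieval), so step 1 is cheap.
after_retrieval = bytes_written_per_run(steps=15, payload_bytes=100 * KB, first_heavy_step=2)

print(worst_case, after_retrieval)
```

The second call shows why 1.5MB is best read as an upper bound: once routing has run before retrieval, only 14 of the 15 snapshots carry the heavy payload.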

The WAL Multiplier Effect

The true performance killer is not the heap storage, but the Write-Ahead Log (WAL) generation. Each out-of-line TOAST write is split into roughly 2000-byte chunks.

A single 100KB LangGraph payload requires approximately 50 TOAST chunks. Writing these chunks generates independent WAL records and forces sequential updates to the TOAST table’s internal B-Tree index, which tracks the chunk_id and chunk_seq.
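To get a feel for the chunk counts involved, the estimate above can be reproduced as follows. This is a back-of-the-envelope sketch: the 2000-byte chunk size is the approximation used in the text, and the calculation assumes the payload does not compress, which overstates the count for compressible JSON.

```python
import math

TOAST_CHUNK_BYTES = 2000  # approximate chunk size quoted in the text (assumption)


def toast_chunks(payload_bytes: int, chunk_bytes: int = TOAST_CHUNK_BYTES) -> int:
    """Number of TOAST chunk rows needed for one uncompressed payload."""
    return math.ceil(payload_bytes / chunk_bytes)


chunks_per_checkpoint = toast_chunks(100_000)      # one 100KB snapshot
chunks_per_run = 15 * chunks_per_checkpoint        # 15-step graph, worst case
chunks_at_concurrency = 100 * chunks_per_run       # 100 concurrent runs

print(chunks_per_checkpoint, chunks_per_run, chunks_at_concurrency)
```

Each of those chunk rows also needs an entry in the TOAST index, which is why the row count matters more than the raw byte count.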

The Production Fallout

At a modest scale of 100 concurrent graph executions, each writing about 1.5MB, this architecture generates WAL on the order of 150MB per second (see the benchmark in Section 3). The resulting penalty manifests across three vectors:

I/O Bottleneck: Complete disk saturation driven by continuous TOAST index churning.

CPU Spikes: The database engine is locked in continuous pglz or lz4 compression and decompression cycles for massive JSON serialization payloads.

Replication Lag: The sheer volume of WAL records saturates the internal network, causing read-replicas to fall dangerously behind the primary instance.

3. Quantifying the Write Amplification

To baseline the architectural shift required, we must observe the exact differential between the native checkpointer and a decoupled offloading strategy. The following table illustrates the performance benchmarks of a 15-step multi-agent RAG workflow operating at 100 concurrent executions.

| Metric | Native PostgresSaver | PointerPostgresSaver (Redis) | System Impact Delta |
|---|---|---|---|
| Payload Size (Per Superstep) | 100KB | ~150 Bytes | 99.8% Reduction |
| Data Written (Per 15-Step Run) | 1.5MB | ~2.2KB | Eliminates TOAST triggers entirely |
| WAL Generation (100 Concurrent) | ~150 MB/sec | < 1 MB/sec | Prevents disk IOPS saturation |
| Compression Overhead | High (pglz / lz4 churn) | Zero | Drastic reduction in DB CPU usage |
| Replication Lag | 3 - 5 seconds | < 100 milliseconds | Enables immediate read-replica scaling |

4. Architectural Mitigation: The Pointer State Pattern

To resolve this write amplification without fundamentally sacrificing LangGraph’s state machine execution logic, we must deploy a Pointer State Pattern.

The core engineering principle is the strict decoupling of the control-plane state from the data-plane state.

Control-Plane State: Routing flags, agent loop counters, and deterministic metadata. These are lightweight (bytes) and belong in PostgreSQL.

Data-Plane State: Heavy RAG contexts, massive string arrays, and raw HTML dumps. These trigger TOAST and belong in a high-throughput Key-Value store.
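One way to decide which keys belong on which side is to measure their serialized size. The sketch below is illustrative only: split_state and the 512-byte budget are hypothetical, and the standard json module stands in for LangGraph’s serializer, so real sizes will differ somewhat.

```python
import json
from typing import Any

INLINE_BUDGET_BYTES = 512  # hypothetical per-key budget, well under the ~2KB TOAST threshold


def serialized_size(value: Any) -> int:
    """Approximate on-the-wire size, using json as a stand-in serializer."""
    return len(json.dumps(value, default=str).encode("utf-8"))


def split_state(state: dict[str, Any], budget: int = INLINE_BUDGET_BYTES) -> tuple[dict, dict]:
    """Partition state into (control_plane, data_plane) by serialized size."""
    control: dict[str, Any] = {}
    data: dict[str, Any] = {}
    for key, value in state.items():
        target = data if serialized_size(value) > budget else control
        target[key] = value
    return control, data


state = {
    "route": "generate",
    "loop_count": 3,
    "retrieved_documents": ["x" * 400] * 10,  # about 4KB once serialized
}
control_plane, data_plane = split_state(state)
print(sorted(control_plane), sorted(data_plane))
```

In practice a fixed allow-list of known heavy keys is often simpler than a size check, but measuring once helps confirm which keys deserve to be on that list.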

Ephemeral KV Offloading

Transient, bulky data is intercepted at the Python layer before it reaches the LangGraph serialization pipeline and written directly to an in-memory KV store such as Redis. Redis suits this workload: writes of small values typically complete in well under a millisecond, and a single string value can be as large as 512MB. Keeping individual payloads under 1MB is strongly recommended, though, to prevent network latency spikes during retrieval.

Pointer Injection

Instead of storing a 100KB array of Document objects in the LangGraph state dictionary, the heavy payload is replaced with a lightweight URI string (e.g., __ptr__:redis:state:uuid). Provided the remaining control-plane keys are small, the channel_values dictionary passed to PostgresSaver then stays well under the 2KB TOAST_TUPLE_THRESHOLD.
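Pointer injection can be sketched in a few lines. This is a minimal illustration under stated assumptions: inject_pointers and InMemoryKV are hypothetical names, InMemoryKV stands in for a Redis client (only set and get are used), and the payload is assumed to be JSON-serializable, which real Document objects are not without a conversion step.

```python
import json
import uuid
from typing import Any, Optional

PTR_PREFIX = "__ptr__:redis:state:"  # pointer format from the text


class InMemoryKV:
    """Stand-in for a Redis client; swap in a real client in production."""

    def __init__(self) -> None:
        self._data: dict[str, str] = {}

    def set(self, key: str, value: str) -> None:
        self._data[key] = value

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)


def inject_pointers(channel_values: dict[str, Any], heavy_keys: set[str], kv: InMemoryKV) -> dict[str, Any]:
    """Return a copy of channel_values with heavy keys replaced by pointers."""
    safe = dict(channel_values)  # shallow copy: never mutate the live state
    for key in heavy_keys:
        if safe.get(key) is not None:
            pointer = f"{PTR_PREFIX}{uuid.uuid4()}"
            kv.set(pointer, json.dumps(safe[key]))  # payload goes to the KV store
            safe[key] = pointer                     # only the pointer goes to Postgres
    return safe


kv = InMemoryKV()
state = {"route": "grade", "retrieved_docs": ["doc" * 1000]}
safe_state = inject_pointers(state, {"retrieved_docs"}, kv)
print(len(json.dumps(safe_state)))  # small, regardless of the document size
```

Generating a fresh UUID per write keeps historical checkpoints pointing at the payload that existed at that step, at the cost of one KV entry per offloaded write.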

JIT Hydration

Upon a get_tuple() read request (which is invoked natively by LangGraph when resuming from an interrupt or traversing an edge), the customized checkpointer detects the URI prefix. It fetches the payload from Redis and rehydrates the state dictionary in memory before passing it back to the graph execution loop.
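The hydration step can be sketched as a pure function over the stored channel values. The hydrate name is hypothetical, and kv can be any object with a get(key) method; a plain dict is used here as a stand-in for Redis. A missing key hydrates to None rather than raising, so callers can detect eviction.

```python
import json
from typing import Any

PTR_PREFIX = "__ptr__:redis:state:"  # pointer format from the text


def hydrate(channel_values: dict[str, Any], kv: Any) -> dict[str, Any]:
    """Return a copy of channel_values with pointers resolved from the KV store."""
    hydrated = dict(channel_values)
    for key, value in channel_values.items():
        if isinstance(value, str) and value.startswith(PTR_PREFIX):
            raw = kv.get(value)
            # Evicted or expired entries come back as None instead of failing.
            hydrated[key] = json.loads(raw) if raw is not None else None
    return hydrated


pointer = PTR_PREFIX + "1234"
kv = {pointer: json.dumps(["doc-a", "doc-b"])}  # dict.get mimics a KV lookup

stored = {"route": "generate", "retrieved_docs": pointer}
print(hydrate(stored, kv))

expired = {"route": "generate", "retrieved_docs": PTR_PREFIX + "gone"}
print(hydrate(expired, kv))
```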

5. Python Implementation: Overriding the CheckpointSaver

The implementation subclasses LangGraph’s native PostgresSaver to selectively filter, offload, and route heavy state keys: put() swaps heavy values for pointers before delegating to the parent class, and get_tuple() hydrates them on the way back out.

The write path must operate on a shallow copy (safe_channel_values = dict(...)). Mutating the active state dictionary directly would alter the memory reference used by the active LangGraph runner, causing immediate failures in downstream nodes that expect retrieved_docs to be a list rather than a string pointer.
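A minimal, dependency-free sketch of the override pattern follows. It is not the real subclass: StandInSaver replaces PostgresSaver so the example runs without LangGraph or Postgres, DictKV replaces a Redis client, and HEAVY_KEYS and PointerSaver are hypothetical names. The put(config, checkpoint, metadata, new_versions) signature mirrors the checkpointer interface, but the real get_tuple() returns a CheckpointTuple rather than a bare dict, so adapt the read path accordingly.

```python
import json
import uuid
from typing import Any, Optional

PTR_PREFIX = "__ptr__:redis:state:"
HEAVY_KEYS = {"retrieved_docs"}  # hypothetical: state keys to offload


class DictKV:
    """In-memory stand-in for a Redis client (only set/get are used)."""

    def __init__(self) -> None:
        self.data: dict[str, str] = {}

    def set(self, key: str, value: str) -> None:
        self.data[key] = value

    def get(self, key: str) -> Optional[str]:
        return self.data.get(key)


class StandInSaver:
    """Stand-in for PostgresSaver: keeps checkpoints in a list, not in Postgres."""

    def __init__(self) -> None:
        self.rows: list[dict[str, Any]] = []

    def put(self, config, checkpoint, metadata, new_versions):
        self.rows.append(checkpoint)
        return config

    def get_tuple(self, config):
        # Simplification: returns the latest checkpoint dict directly.
        return self.rows[-1] if self.rows else None


class PointerSaver(StandInSaver):
    def __init__(self, kv: DictKV) -> None:
        super().__init__()
        self.kv = kv

    def put(self, config, checkpoint, metadata, new_versions):
        # Shallow copy: the runner's live dict must keep its real values.
        safe_channel_values = dict(checkpoint["channel_values"])
        for key in HEAVY_KEYS:
            if safe_channel_values.get(key) is not None:
                pointer = f"{PTR_PREFIX}{uuid.uuid4()}"
                self.kv.set(pointer, json.dumps(safe_channel_values[key]))
                safe_channel_values[key] = pointer
        safe_checkpoint = {**checkpoint, "channel_values": safe_channel_values}
        return super().put(config, safe_checkpoint, metadata, new_versions)

    def get_tuple(self, config):
        stored = super().get_tuple(config)
        if stored is None:
            return None
        values = dict(stored["channel_values"])
        for key, value in list(values.items()):
            if isinstance(value, str) and value.startswith(PTR_PREFIX):
                raw = self.kv.get(value)
                values[key] = json.loads(raw) if raw is not None else None
        return {**stored, "channel_values": values}


saver = PointerSaver(DictKV())
live = {"id": "cp-1", "channel_values": {"route": "grade", "retrieved_docs": ["a", "b"]}}
saver.put({}, live, {}, {})
print(saver.rows[0]["channel_values"]["route"], saver.get_tuple({})["channel_values"]["retrieved_docs"])
```

Building a new checkpoint dict with {**checkpoint, ...} rather than assigning into the original keeps the live object untouched at both levels, which is the property the shallow-copy note above is protecting.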

6. Architectural Trade-offs: Time-Travel vs. Ephemerality

Decoupling the data-plane using the Pointer State Pattern eliminates the PostgreSQL I/O bottleneck, but fundamentally alters LangGraph’s deterministic replay guarantees. Distributed systems design relies heavily on recognizing these trade-offs and architecting defense mechanisms around them.

1. The Time-Travel Window

LangGraph natively supports resuming executions or debugging traces from any historical checkpoint_id. If you rely on Redis with a Time-To-Live (TTL) configuration (e.g., 24 hours to conserve RAM), any time-travel attempts older than the TTL will encounter cache misses. The hydration layer will gracefully return None for the evicted keys, meaning your state will be incomplete for retrospective analysis.

2. Graceful Degradation Design

Because cache eviction is now an intended behavior of the system architecture, nodes relying on offloaded state must implement defensive parsing. If a retrieved_documents key hydrates as None during a resumed thread execution, the consuming node must not fault. Instead, the graph logic must be designed to automatically emit an internal routing edge to re-invoke the retrieval tool, re-fetch the data from the external source, and patch the state back together dynamically.
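The routing decision described above can be as small as one function. The node names "retrieve" and "generate" are hypothetical; in a LangGraph application a function like this would typically be registered as a conditional edge (for example via add_conditional_edges), but it is shown standalone here so it runs without the framework.

```python
from typing import Any


def route_after_resume(state: dict[str, Any]) -> str:
    """Pick the next node: re-run retrieval if offloaded documents were evicted."""
    if state.get("retrieved_documents") is None:
        # Cache miss after TTL expiry (or the key was never populated).
        return "retrieve"
    return "generate"


print(route_after_resume({"retrieved_documents": None}))
print(route_after_resume({"retrieved_documents": ["doc-a"]}))
```

Treating a missing key the same as a None value keeps the check robust for threads that were suspended before retrieval ever ran.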

3. S3/GCS as a Durable Alternative

If strict, long-term time-travel over heavy RAG payloads is a hard compliance requirement for your enterprise (e.g., highly regulated audit trails), Redis must be swapped for Object Storage like Amazon S3 or Google Cloud Storage (GCS).

The Trade-off: Object storage successfully eliminates the PostgreSQL IOPS bottleneck and preserves infinite time-travel persistence without aggressive RAM costs. However, it introduces ~30ms – 80ms of network latency during state hydration at every single superstep. Conversely, an in-VPC Redis instance typically responds in <1ms. You must choose between execution speed and long-term debug persistence.
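To put the latency figures in perspective, the cumulative hydration cost for one run can be estimated as below. This is simple arithmetic under the assumption of exactly one blob read per superstep; hydration_overhead_ms is an illustrative name, and the per-read latencies are the ranges quoted above rather than measurements.

```python
def hydration_overhead_ms(steps: int, per_read_ms: float) -> float:
    """Total added latency for one run, assuming one blob read per superstep."""
    return steps * per_read_ms


STEPS = 15  # the 15-step graph used throughout the article

object_store_low = hydration_overhead_ms(STEPS, 30)   # best-case object storage
object_store_high = hydration_overhead_ms(STEPS, 80)  # worst-case object storage
redis_upper = hydration_overhead_ms(STEPS, 1)         # in-VPC Redis, upper bound

print(object_store_low, object_store_high, redis_upper)
```

For a 15-step run that is roughly half a second to over a second of added latency with object storage, against at most about 15ms with Redis.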

7. Performance Audit & Specialized Engineering

Designing and scaling multi-agent AI systems requires more than connecting API endpoints. When frameworks like LangGraph hit production traffic limits, the bottlenecks are rarely AI-related; they are deeply rooted in distributed systems architecture, database mechanics, and infrastructure design.

At Azguards Technolabs, we specialize in Performance Audits and Specialized Engineering for enterprise AI workloads. Rather than applying superficial patches, we analyze the underlying I/O metrics, state serialization overhead, and replication constraints to build resilient, high-throughput agentic architectures. Whether you require mitigating TOAST tuple bloat, migrating persistent state layers, or designing highly concurrent RAG systems, our engineering teams provide the technical rigor required to stabilize your infrastructure.

Conclusion

LangGraph is a remarkably powerful framework for cyclical, multi-agent orchestration, but its default state persistence mechanisms treat all data as equal. By failing to separate lightweight control-plane routing flags from massive data-plane context payloads, teams rapidly run into PostgreSQL’s fixed architectural limits.

Implementing the Pointer State Pattern bypasses the devastating write-amplification caused by TOAST out-of-line storage, drastically reducing WAL generation, CPU overhead, and disk IOPS saturation. By treating state persistence as a decoupled, multi-tier systems problem rather than a standard database insert, you ensure your agent infrastructure scales linearly with your production demands.

If your LangGraph deployments are encountering scaling limits, state bloating, or unacceptable latency bottlenecks, it is time for a structural evaluation. Contact Azguards Technolabs today to schedule a comprehensive architectural review and specialized implementation.
