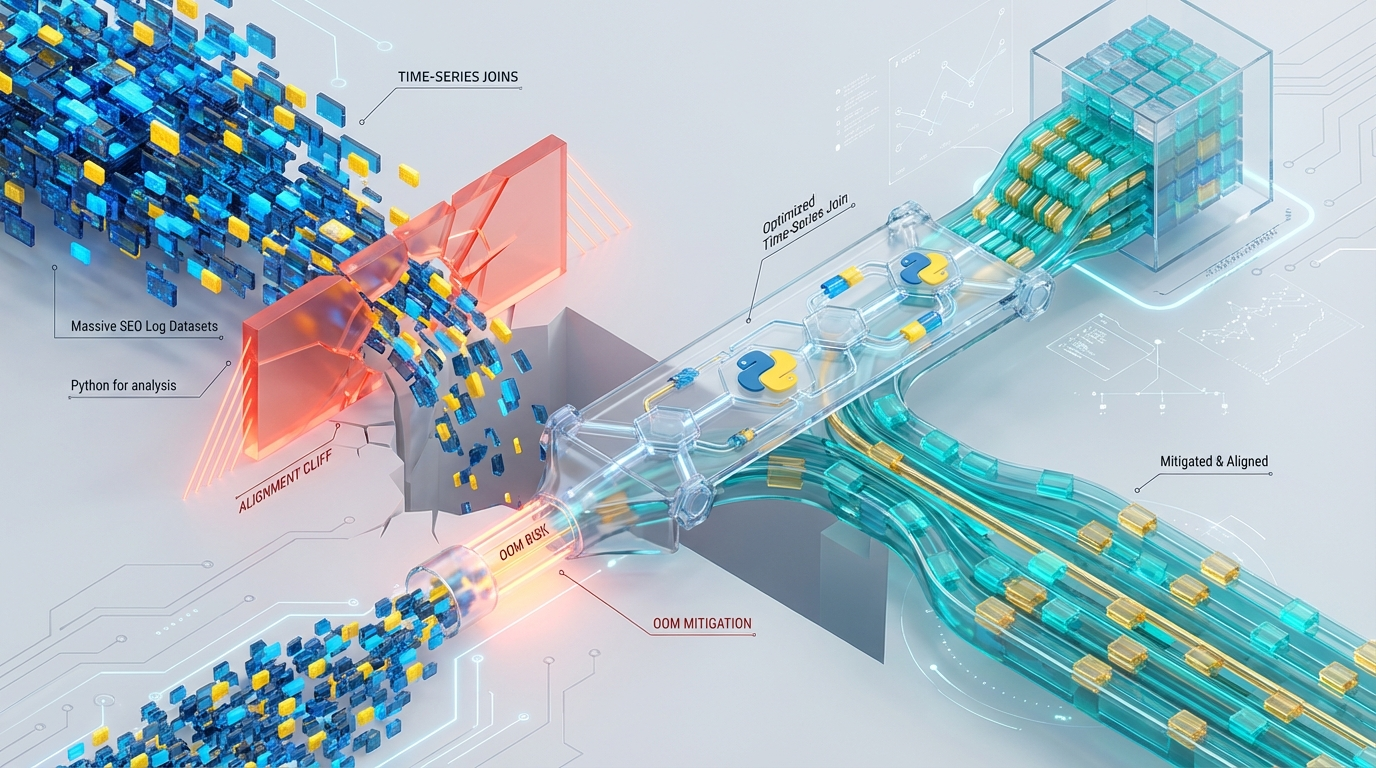

The Alignment Cliff: Why Massive Python Time-Series Joins Trigger OOMs — and How to Fix Them

Time-series alignment of massive datasets is a notoriously hostile operation in Python. When engineering pipelines to merge 500M+ row server logs with Google Search Console (GSC) crawl states, data engineering teams inevitably collide with a systemic failure mode we identify as the “Alignment Cliff.”

The objective is straightforward: execute a tolerance-based time join (merge_asof) to map asynchronous crawler events to specific server log timestamps. However, attempting to execute this on a single node using eager evaluation triggers non-linear memory spikes, instantaneous Out-Of-Memory (OOM) crashes, and severe Global Interpreter Lock (GIL) contention.

This architectural breakdown deconstructs the mechanics of the Alignment Cliff and outlines a bounded-memory, hash-sharded framework utilizing Polars and jemalloc to process arbitrarily large time-series joins safely.

Anatomy of the Alignment Cliff

The Alignment Cliff describes the catastrophic degradation of system stability caused by sorting-induced OOMs during time-series merges. Pandas' merge_asof requires both inputs to be sorted on the join key and raises a ValueError otherwise. Sorting an out-of-order eager DataFrame with df.sort_values('timestamp') returns a full sorted copy, so the original frame, the copy, and the sort's intermediate arrays all sit in memory at once.

The Eager Materialization Multiplier

From a systems perspective, the peak memory footprint ($P_{\text{peak}}$) required to successfully execute an eager Pandas merge_asof between Dataset A (Server Logs) and Dataset B (GSC States) scales according to the following theoretical engineering model:

$$P_{\text{peak}} \approx \text{Mem}(A) + \text{Mem}(A_{\text{sorted}}) + \text{Mem}(B) + \text{Mem}(B_{\text{sorted}}) + \text{Mem}(A \bowtie B) + \text{Mem}(\text{IndexMaps})$$

This eager materialization is computationally unforgiving. A seemingly manageable 15GB log dataset and 5GB GSC dataset can easily trigger an >80GB RSS memory allocation spike. Because this memory is demanded almost instantaneously during the sort-and-merge phase, it rapidly breaches container limits, immediately triggering the Linux cgroup OOM killer.
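The model can be turned into a quick back-of-envelope check. The sketch below simply sums the six terms. The inputs in the usage line are illustrative assumptions (on-disk sizes taken at face value, a join result about as large as both inputs combined, 8 bytes of index per log row), not measurements.

```python
def estimate_peak_gb(mem_a_gb, mem_b_gb, mem_join_gb, rows_a, index_bytes_per_row=8):
    """Sum the terms of the eager-materialization model, in GB (1 GB = 1e9 bytes)."""
    mem_a_sorted = mem_a_gb  # the sorted copy of A is as large as A
    mem_b_sorted = mem_b_gb  # the sorted copy of B is as large as B
    mem_index_maps = rows_a * index_bytes_per_row / 1e9
    return (
        mem_a_gb + mem_a_sorted
        + mem_b_gb + mem_b_sorted
        + mem_join_gb
        + mem_index_maps
    )


# Assumed inputs: 15 GB logs, 5 GB GSC states, 20 GB join result, 500M log rows.
peak = estimate_peak_gb(15, 5, 20, 500_000_000)
print(f"{peak:.1f} GB")  # 64.0 GB
```

Even with sizes taken at face value the sum lands near 64 GB. Object-dtype string columns in pandas usually occupy more memory than their on-disk form, which is one way the total can climb past the 80GB figure quoted above.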

Furthermore, memory behavior is dictated by kernel-level overcommit limits. If your infrastructure enforces vm.overcommit_memory=2, the Linux kernel strictly enforces the CommitLimit, which is computed from swap plus a configured share of physical RAM (vm.overcommit_ratio or vm.overcommit_kbytes). Under these constraints, eager pandas joins will not gracefully degrade to disk swapping; the allocation is refused and the process fails with a MemoryError as soon as committed memory would exceed that limit.
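To see how close a host is to that limit before launching a join, the kernel exposes both numbers in /proc/meminfo. The helper below is a minimal sketch that parses the file's text and returns the remaining headroom; the function name is hypothetical, and it assumes a Linux host where CommitLimit and Committed_AS are present.

```python
def commit_headroom_kb(meminfo_text):
    """Return CommitLimit minus Committed_AS (in kB) from /proc/meminfo text."""
    fields = {}
    for line in meminfo_text.splitlines():
        key, _, rest = line.partition(":")
        parts = rest.split()
        if parts:
            # The first token after the colon is the numeric value; a unit may follow.
            fields[key.strip()] = int(parts[0])
    return fields["CommitLimit"] - fields["Committed_AS"]


# Usage on a Linux host:
# with open("/proc/meminfo") as f:
#     print(commit_headroom_kb(f.read()), "kB of commit headroom")
```

A headroom smaller than the estimated peak of the join is a strong hint that the eager path will fail under strict overcommit accounting.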

Execution Bottlenecks: The GIL and glibc Fragmentation

Even if your hardware possesses the RAM overhead to survive the $P_{\text{peak}}$ multiplier, the execution layer introduces crippling bottlenecks.

Pandas processes the merge_asof loop via single-threaded Cython. In a purely numeric join, Cython can execute bounds checking while releasing the Global Interpreter Lock (GIL). However, SEO log alignment requires cross-grouping via the by="url" parameter.

This specific constraint forces the Cython extension to instantiate Python objects for string equality matching. Object instantiation immediately locks the GIL, hard-capping CPU utilization to a single core. The constant context switching and Python C-API overhead cause severe L3 cache thrashing, rendering high-core-count compute instances entirely useless.

Compounding the GIL bottleneck is the default allocator path. Pandas relies heavily on the C library's malloc for its buffers, and irregularly sized URL strings fragment the heap badly. Standard glibc is poorly equipped to handle this access pattern. Because glibc spreads allocations across multiple arenas (capped by M_ARENA_MAX) and can generally only hand memory back from the top of each heap, intermediate sort buffers that Python has already freed are frequently trapped in fragmented heaps. Instead of returning to the host OS, these dead allocations maintain an artificially high Resident Set Size (RSS), pushing the process closer to the OOM threshold even after intermediate data structures are dereferenced.
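One way to check whether freed memory is stuck in the glibc heap is to ask glibc to release it and compare RSS before and after. The sketch below calls glibc's malloc_trim through ctypes. This diagnostic is an assumption added for illustration rather than part of the pipeline described here, and both helpers return None on platforms that lack the symbol or the /proc filesystem.

```python
import ctypes
import ctypes.util


def current_rss_kb():
    """Read this process's resident set size (kB) from /proc, or None if unavailable."""
    try:
        with open("/proc/self/status") as f:
            for line in f:
                if line.startswith("VmRSS:"):
                    return int(line.split()[1])
    except OSError:
        return None
    return None


def trim_glibc_heap():
    """Ask glibc to return free heap pages to the OS.

    Returns True if memory was released, False if not, None if unsupported.
    """
    libc_name = ctypes.util.find_library("c")
    if libc_name is None:
        return None
    try:
        libc = ctypes.CDLL(libc_name)
    except OSError:
        return None
    if not hasattr(libc, "malloc_trim"):
        return None  # not glibc (for example musl or macOS)
    return bool(libc.malloc_trim(0))


before = current_rss_kb()
released = trim_glibc_heap()
after = current_rss_kb()
print(before, released, after)
```

If RSS drops noticeably after the trim call in a process that has already dropped its intermediate frames, the gap is memory that was free inside the allocator but never returned to the OS.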

Apache Arrow Limits and Polars Constraints

Modernizing the stack by migrating to an Apache Arrow backend (via PyArrow or Polars) introduces a different set of hard limits that must be engineered around.

Apache Arrow's default string type enforces a 2GB limit on each contiguous string buffer because it relies on Int32 offsets for memory addressing. Processing massive log files with heavy text columns (like raw URLs) can breach this limit quickly. While Polars natively mitigates this by defaulting to LargeString (Int64 offsets), failing to explicitly specify this schema in mixed PyArrow/Pandas pipelines can trigger silent data truncation or overflow exceptions mid-flight.
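The arithmetic behind the limit is short enough to check by hand. The sketch below assumes an average URL length of 80 bytes, which is a hypothetical figure; substitute a value measured from your own logs.

```python
INT32_MAX = 2**31 - 1  # largest byte offset an Int32-offset string array can address


def max_rows_per_chunk(avg_string_bytes):
    """Rows of a given average length that fit in one Int32-offset string buffer."""
    return INT32_MAX // avg_string_bytes


def needs_large_string(total_string_bytes):
    """True when a single contiguous buffer of this size overflows Int32 offsets."""
    return total_string_bytes > INT32_MAX


print(max_rows_per_chunk(80))                # 26843545
print(needs_large_string(500_000_000 * 80))  # True
```

At that assumed length, a single contiguous column holds roughly 27 million URLs before the offsets overflow, far below a 500M-row log table. Arrow can still represent larger columns as multiple chunks, so the failure typically appears when chunks are concatenated or a column is materialized as one array.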

Additionally, data engineers frequently attempt to solve the Alignment Cliff by leaning blindly on out-of-core streaming engines. Polars provides robust streaming capabilities via collect(streaming=True) (spelled collect(engine="streaming") in newer releases). However, a fundamental constraint remains: join_asof requires fully sorted datasets.

If the upstream log data exceeds available system RAM, the global sort() operation required prior to the join_asof will trigger heavy disk spilling or OOM. The streaming engine alone cannot magically resolve global time-series alignment on unsorted data; the physics of the O(N log N) sorting complexity remain.

Architectural Pivot: Bounding the Unbound

To achieve a multi-threaded, memory-safe alignment, we must abandon the global join approach. The solution requires decomposing the global state into bounded, entirely independent operations using a combination of consistent hash sharding, lazy evaluation, and advanced memory allocation control.

1. Pre-computation: Consistent Hash Sharding

Instead of attempting a monolithic join_asof grouped by URL, the architecture must push the complexity upstream. Upon initial ingestion, the raw Parquet files are partitioned into N deterministic shards using a highly performant, non-cryptographic hashing algorithm such as xxhash or murmur3.

Partition Key: xxhash(url) % NUM_SHARDS

Result: All server logs and GSC stats for any specific URL are deterministically guaranteed to reside in the exact same physical shard ID, because the same URL always hashes to the same value. By physically co-locating the data, we eliminate the need for global shuffles during the join phase.
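The partition key can be sketched in a few lines. To stay within the standard library, the example below swaps in hashlib.blake2b as a stand-in for xxhash or murmur3; the shard count of 64 is a hypothetical value. Python's built-in hash() is not suitable here because string hashes are salted per process.

```python
import hashlib

NUM_SHARDS = 64  # hypothetical shard count; size it so one shard fits in RAM


def shard_for(url, num_shards=NUM_SHARDS):
    """Map a URL to a stable shard ID in [0, num_shards)."""
    # blake2b stands in for xxhash/murmur3; any hash that is stable across
    # processes and machines gives the same co-location property.
    digest = hashlib.blake2b(url.encode("utf-8"), digest_size=8).digest()
    return int.from_bytes(digest, "big") % num_shards


# The same URL lands in the same shard whether it came from logs or GSC data.
print(shard_for("https://example.com/pricing"))
```

Applying the same function to both the server logs and the GSC export at ingestion time is what keeps every row for a given URL in one shard.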

2. Isolated Execution: Polars LazyFrames

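With the data sharded by URL hash, the O(N log N) sorting cost and memory footprint of each join are bounded to roughly Mem_total / NUM_SHARDS, assuming the hash spreads URLs evenly. A single very hot URL still lands entirely in one shard, so shard sizes should be checked for skew.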

We leverage Polars LazyFrames to execute the operations per-shard. Lazy evaluation allows the Polars query optimizer to push down predicates—such as filtering out known bot IP ranges or irrelevant status codes—before loading the data into memory. Because the shard is mathematically constrained to fit within system RAM, Polars can execute the localized sort entirely in-memory. Finally, the engine executes the Rust-based multi-threaded join_asof, bypassing the Python GIL entirely.

3. Allocation Control via jemalloc

To resolve the glibc heap fragmentation discussed earlier, we aggressively modify the runtime environment. By forcing the Python interpreter to utilize jemalloc instead of the standard C allocator, we gain explicit control over memory decay.

By configuring aggressive background decay rates in jemalloc, we ensure that the massive string offset buffers generated during the asof join are immediately flushed back to the OS between shard executions, permanently neutralizing the RSS creep.

Implementation Playbook

Implementing this architecture requires strict control over both the OS-level environment variables and the Python execution logic.

System Configuration

Before initializing the Python runtime (whether locally, in a Docker entrypoint, or across a distributed framework like Ray), the following environment variables must be injected to control Rust thread pools and force jemalloc memory allocation:
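A minimal sketch of such an environment, written as a Python launcher so the variables are in place before the worker interpreter starts. POLARS_MAX_THREADS and jemalloc's MALLOC_CONF options are real settings; the library path, thread count, and worker script name are assumptions to adapt to your host.

```python
import os


def build_worker_env(jemalloc_path, polars_threads, decay_ms=2000, base_env=None):
    """Return a copy of the environment with allocator and thread-pool settings applied."""
    env = dict(os.environ if base_env is None else base_env)
    # Cap the Polars (Rust) thread pool for this worker.
    env["POLARS_MAX_THREADS"] = str(polars_threads)
    # Preload jemalloc so malloc/free in the worker process resolve to it.
    env["LD_PRELOAD"] = jemalloc_path
    # Return dirty and muzzy pages to the OS after decay_ms, using a background thread.
    env["MALLOC_CONF"] = (
        f"background_thread:true,dirty_decay_ms:{decay_ms},muzzy_decay_ms:{decay_ms}"
    )
    return env


# Usage (path and script name are hypothetical):
# import subprocess, sys
# env = build_worker_env("/usr/lib/x86_64-linux-gnu/libjemalloc.so.2", polars_threads=8)
# subprocess.run([sys.executable, "align_worker.py"], env=env, check=True)
```

LD_PRELOAD only takes effect when set before the process starts, which is why the settings are applied to a child process rather than to the running interpreter. Polars wheels may bundle their own allocator for Rust-side buffers, in which case the preload mainly governs allocations made through the C malloc by CPython and NumPy-backed libraries.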

Python/Polars Execution Logic

Deploy the following isolated worker logic across your distributed compute cluster. This function independently processes the hash-sharded Parquet files, safely executing the time-series alignment without triggering the global eager materialization multiplier.

Notice the critical inclusion of row_group_size=100_000 in the sink_parquet function. Even with a bounded upstream join, writing highly compressed Parquet files with large string columns can trigger an OOM if the row group buffers are allowed to expand infinitely. Constraining the row group size forces the streaming engine to routinely flush the output buffers to disk.

Architectural Benchmarks: Before vs. After

Transitioning from a monolithic eager execution model to a hash-sharded lazy execution model radically alters the telemetry profile of the workload. The table below outlines the architectural shifts observed when benchmarking the two paradigms against a combined 20GB dataset (15GB logs, 5GB GSC states).

| Metric / Constraint | Legacy Architecture (Pandas merge_asof) | Optimized Architecture (Sharded Polars) |
|---|---|---|
| Peak RAM (RSS) | >80GB (Spikes of 3×–5× dataset size) | Strictly Bounded (Mem_total / NUM_SHARDS) |
| CPU Utilization | 1 Core (Locked by Python GIL) | Multi-Core (Scales linearly via Rust threads) |
| Sort Complexity | Global O(N log N) | Localized O(N log N) per shard |
| Memory Allocator | glibc (High fragmentation, trapped arenas) | jemalloc (Aggressive 2000ms dirty decay) |
| String Offsets | Int32 (Silent truncation at 2GB limit) | LargeString / Int64 (Effectively infinite) |
| Disk Spill / OOM | Instant MemoryError via CommitLimit | Managed disk buffering via sink_parquet |

By replacing global pandas.merge_asof with URL-hash sharding combined with Polars sink_parquet, the workload stops being bottlenecked by a single CPU core locked by the Python GIL. It instead scales predictably across Rust threads within each shard and across workers between shards.

Most crucially for production stability, the memory footprint transitions from unpredictable, volatile 3× to 5× spikes to a strictly bounded, deterministic ceiling defined entirely by the 1/N shard size you configure upstream.

Engineering Resiliency with Azguards Technolabs

Migrating from fragile data scripts to highly resilient, bounded-memory infrastructure requires specialized expertise. Standard documentation rarely covers the hostile intersection of Python C-extensions, kernel-level memory limits, and localized L3 cache thrashing.

At Azguards Technolabs, we function as your partner for deep Performance Audits and Specialized Engineering. We do not just implement modern frameworks; we audit the underlying computational physics of your pipelines. Whether it involves debugging complex OOM killers, re-architecting data ingestion for deterministic sharding, or configuring custom memory allocators like jemalloc across distributed clusters, we help enterprise teams harden their Python-based analytics infrastructure.

If your data engineering org is fighting unexplainable OOMs, silent data truncation, or stalled pipelines struggling to scale beyond single-node compute limits, the architecture requires an overhaul, not just a larger cloud instance.

Contact Azguards Technolabs for an architectural review, and let our Principal Content Architects and Senior Engineers transition your complex, high-volume workloads into predictable, linearly scalable systems.


